Story

Even though most of us think of water contamination as a gradual process, especially in thriving landscapes, pollution can pervade water bodies almost instantaneously. In enclosed water bodies such as closed lakes, pernicious contaminants can arise within a week and threaten aquatic environments without showing any visible signs. These furtively spreading pollutants can even harm terrestrial animals, not only by poisoning precious water sources but also by withering aquatic plants.

In most cases, surface-level water quality tests alone are insufficient to pinpoint hidden pollutants, since contaminants can form in the underwater substrate through the accumulation of incipient chemical agents. These underwater chemical reactions are commonly instigated by detritus, industrial effluents, and toxic sediment rife in the underwater substrate. As sinking debris accumulates, these reactions can engender algal blooms, hypoxia (dead zones), and expanding barren lands[1]. Since these occurrences only appear after prolonged water pollution, by the time they are visible, complex toxic chemical interactions, even with plastic debris, are already progressing inexorably[2]. Therefore, priority must be given to identifying the underlying conditions that intensify underwater chemical reactions.

Especially in lower substrate levels, before pollutants reach hazardous amounts, the combined chemical reactions between them yield enough gas to form small-to-moderate air bubbles underwater. These lurking gas pockets affect aquatic plant root systems, carry noxious contaminants to the surface, and alter water quality unpredictably as emerging contaminants spread. As these toxic gas pockets surge, the affected water body can undergo sporadic aquatic life declines, starting with invertebrate and fry (or hatchling) deaths. Although not every instance of underwater air bubble activity signals an imminent toxic pollution risk, bubble activity can serve as a vital indicator for testing water quality and precluding potential environmental hazards.

In addition to protecting natural enclosed water bodies, detecting accumulating underwater pollutants can also be beneficial and profitable for commercial aquatic animal breeding and plant harvesting, widely known as aquaculture. Since aquaculture involves the controlled cultivation of aquatic organisms in artificially enclosed water bodies (freshwater or brackish water), such as fish ponds and aquariums, underwater air bubbles carrying noxious pollutants to the surface can cause sudden animal deaths, wilting aquatic plants, and devastating financial losses. Especially in fish farming (pisciculture) involving more demanding species, air bubbles accumulating in the underwater substrate can initiate a chain reaction resulting in the loss of all fish acclimatized to the enclosed water body. In severe cases, this can lead to algae-clad artificial environments that threaten terrestrial animals and to an incessant decline in survival rates.

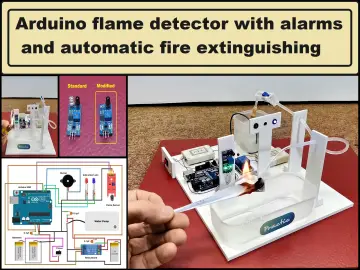

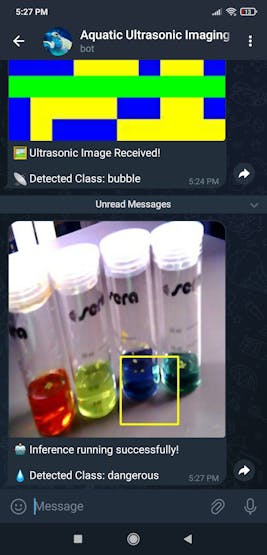

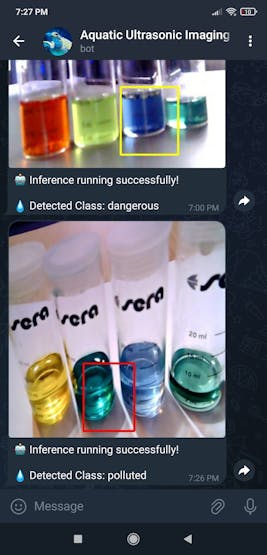

After perusing recent research papers on identifying air bubbles in the underwater substrate, I could not find practical applications that detect underwater air bubbles and assess water pollution consecutively so as to diagnose potential toxic contaminants before they cause detrimental effects on the natural environment or a commercial fish farm. Therefore, I decided to develop a feature-rich AIoT device that identifies underwater air bubbles with a neural network model, using ultrasonic imaging as a nondestructive inspection method, and then assesses water pollution from multiple chemical water quality tests with an object detection model. In addition to these AI-powered functions, I decided to build capable user interfaces and a push notification service via Telegram.

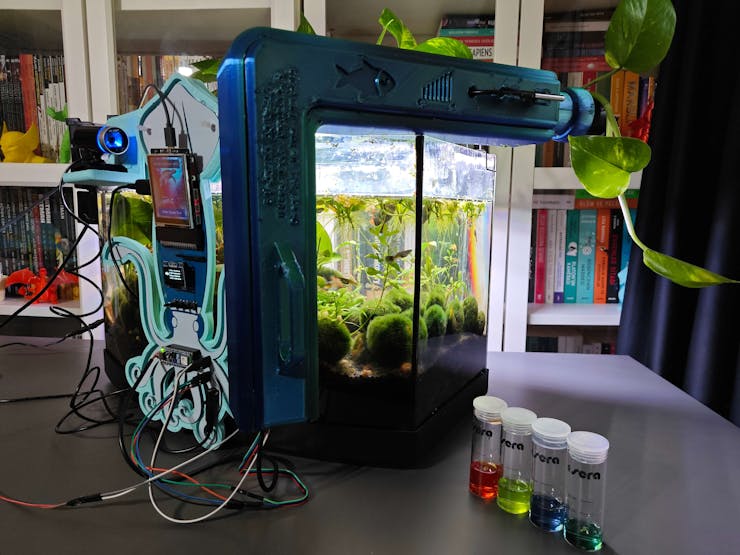

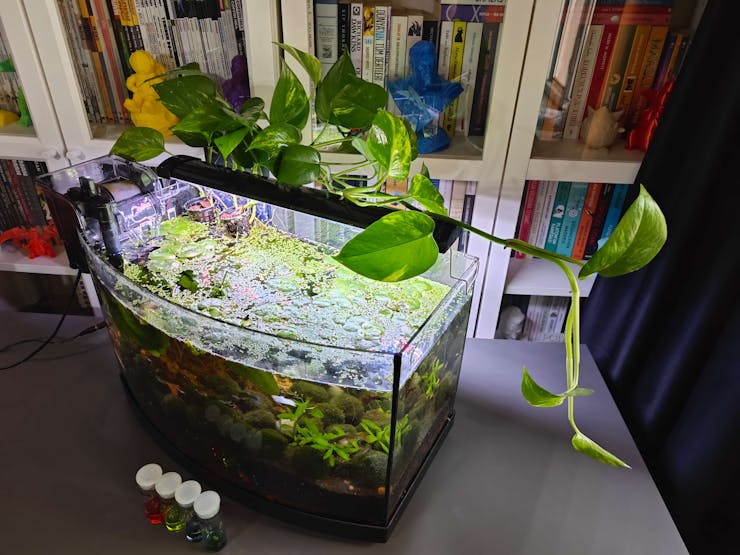

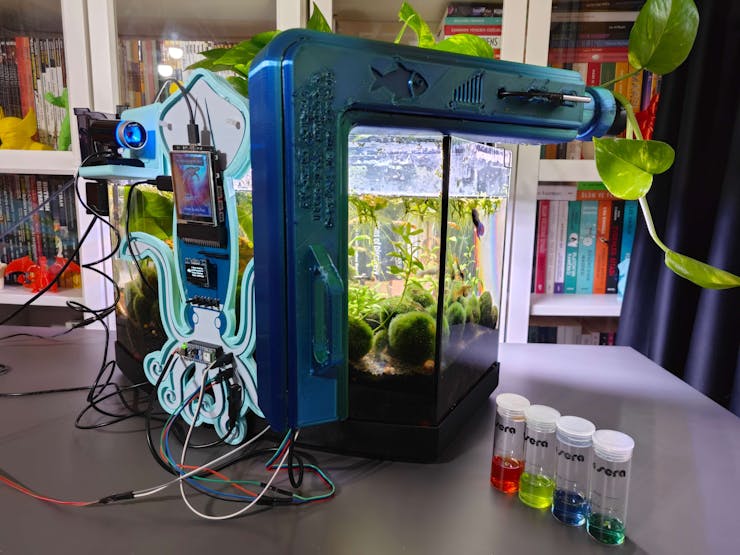

Before working on data collection procedures and model training, I thoroughly searched for a natural or artificial environment demonstrating the ebb and flow of underwater substrate toxicity due to overpopulation and decaying detritus. However, I could not find a suitable option near my hometown because of endangered aquatic life, unrelenting habitat destruction, and chemical waste disposal, mostly caused by human activities. Thus, I decided to set up an artificial aquatic environment simulating noxious air bubbles in the underwater substrate and potential water pollution risk. After a meticulous analysis of fecund aquatic life with which I could replicate fish farm conditions in a medium-sized aquarium, I decided to set up a planted freshwater aquarium for harmonious and proliferating species: livebearers (guppies), Neocaridina shrimp, dwarf (or least) crayfish (Cambarellus diminutus), etc. In the following steps, I shall explain all species in my controlled environment (aquarium) with detailed instructions.

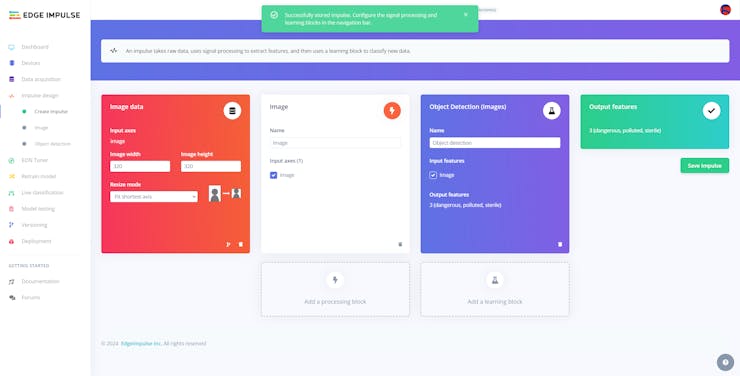

Since identifying underwater air bubbles and assessing water pollution simultaneously requires an AI-driven device supporting multiple machine learning models, I decided to construct two different data sets (ultrasonic scan data buffers and color-coded chemical water quality test result images), build two different machine learning models (neural network and object detection), and run the trained models on separate development boards. In this way, I was able to program distinct, feature-rich user interfaces for each development board, each focusing on a different aspect of the AI-based detection process, and avoid memory allocation issues, latency, reduced model accuracy, and convoluted data collection caused by multi-sensor conflicts.

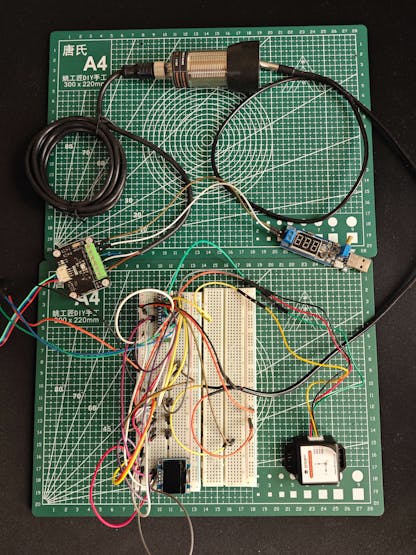

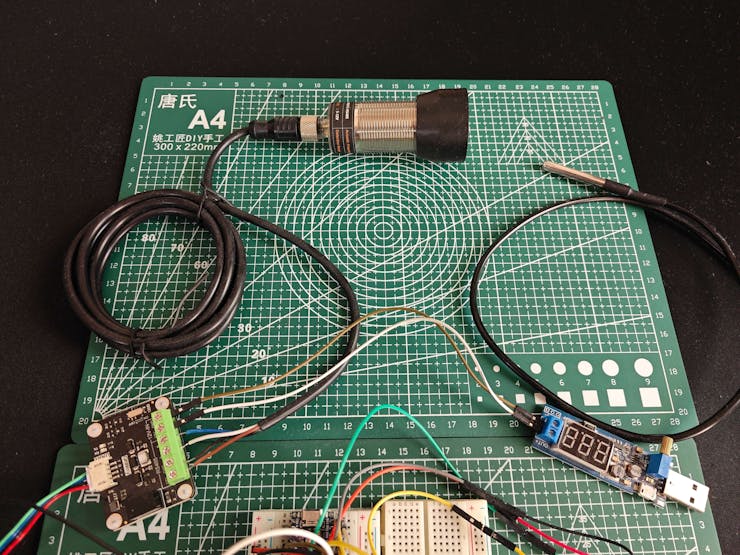

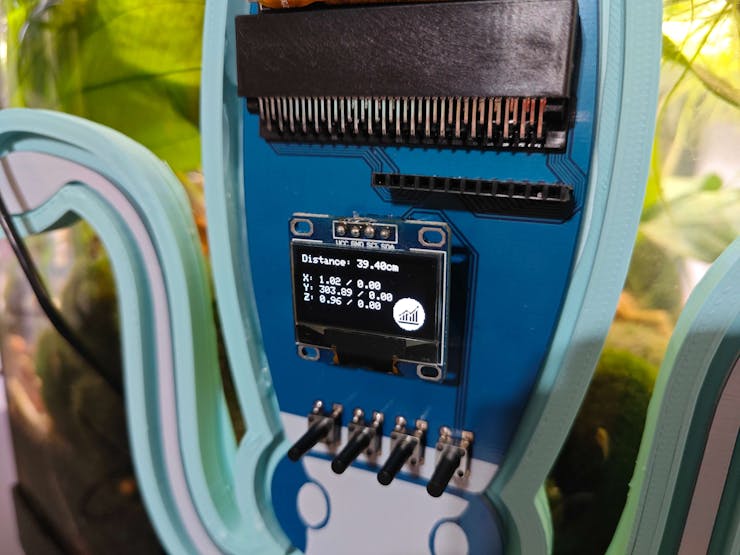

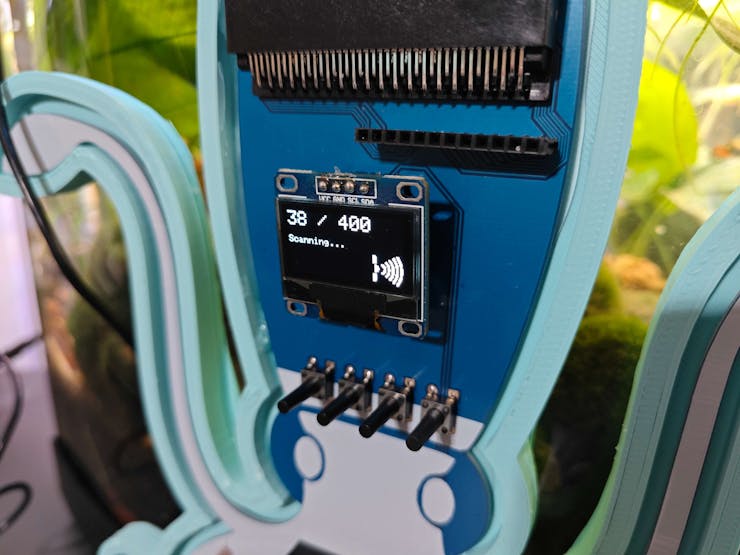

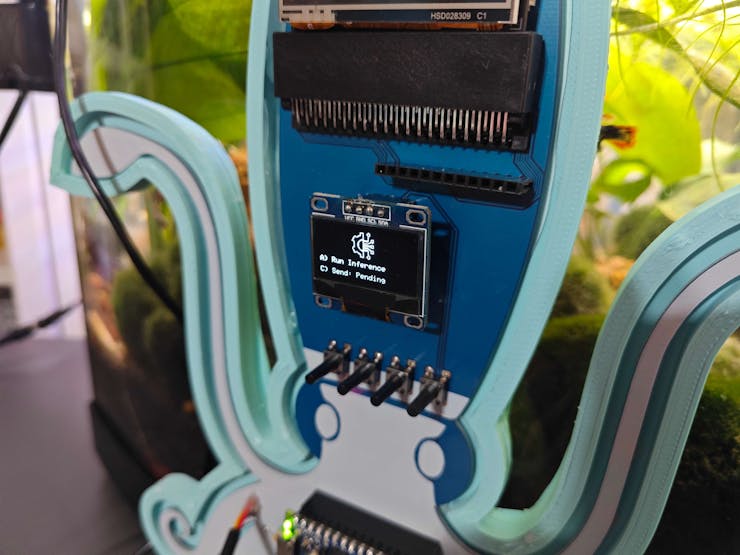

Since Nano ESP32 is a brand-new, high-performance Arduino IoT development board providing a u-blox® NORA-W106 (ESP32-S3) module, 16 MB (128 Mbit) Flash, and an embedded antenna, I decided to utilize it to collect ultrasonic scan (imaging) information and run my neural network model. Because I needed submersible equipment to generate precise aquatic ultrasonic scans, I connected a DFRobot URM15 - 75KHZ ultrasonic sensor (via an RS485-to-UART adapter module) and a DS18B20 waterproof temperature sensor to Nano ESP32. To build accurate ultrasonic images from single data points and match the given image shape (20 x 20, i.e., 400 points), I added a DFRobot 6-axis accelerometer to track the movement of the sensor probe. Finally, I connected an SSD1306 OLED display and four control buttons to program a feature-rich user interface.

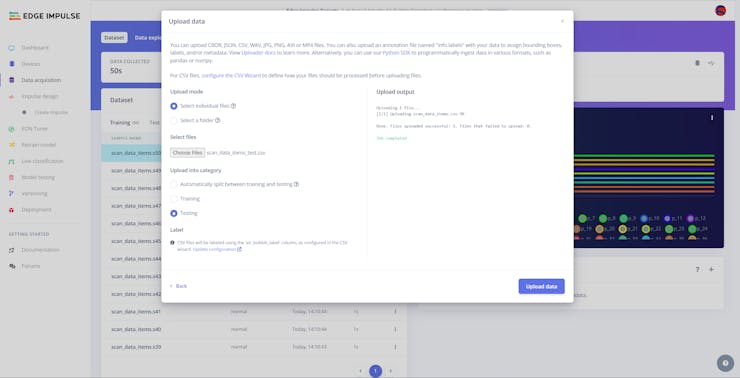

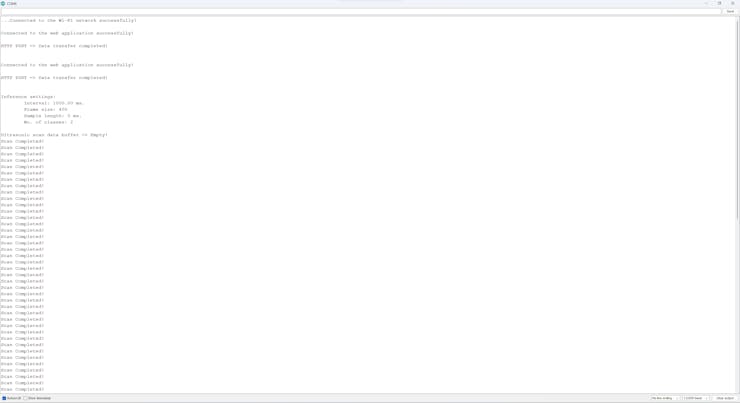

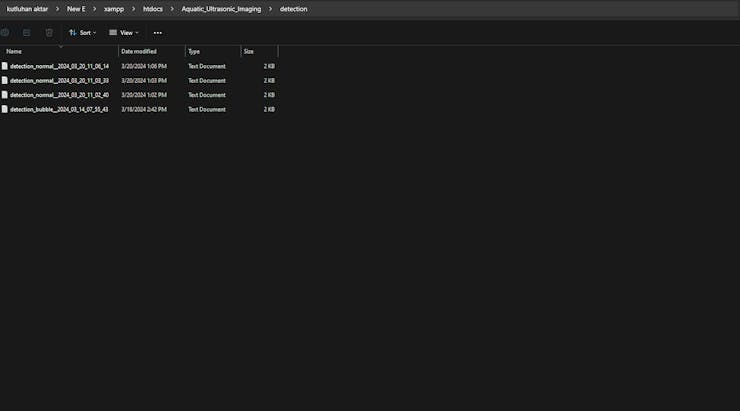

I also employed Nano ESP32 to transfer the produced ultrasonic scan data and the selected air bubble class to a basic web application (developed in PHP) via an HTTP POST request. In this regard, I was able to save each ultrasonic scan buffer with its assigned air bubble class to a separate text (TXT) file and construct my data set effortlessly. I shall clarify the remaining web application features below.
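The firmware on Nano ESP32 is Arduino C++, but the shape of such a POST body is easy to illustrate in Python. The following is a minimal sketch assuming a standard form-encoded request; the field names `scan_data` and `air_bubble_label` and the comma separator are hypothetical, since the exact fields the PHP application expects are not given here.

```python
from urllib.parse import urlencode, parse_qs


def build_scan_payload(readings, label):
    """Encode one 20 x 20 ultrasonic scan as an x-www-form-urlencoded body.

    The field names 'scan_data' and 'air_bubble_label' are hypothetical.
    """
    if len(readings) != 400:
        raise ValueError("expected a 20 x 20 scan (400 readings)")
    if label not in ("normal", "bubble"):
        raise ValueError("unknown air bubble class: " + label)
    return urlencode({
        "scan_data": ",".join(f"{r:.2f}" for r in readings),
        "air_bubble_label": label,
    })


# The receiving side simply reverses the encoding.
body = build_scan_payload([12.5] * 400, "bubble")
fields = parse_qs(body)
print(fields["air_bubble_label"][0])
print(len(fields["scan_data"][0].split(",")))
```

Validating the buffer length before sending keeps malformed scans out of the data set, since every sample must later map onto the same 400 columns.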

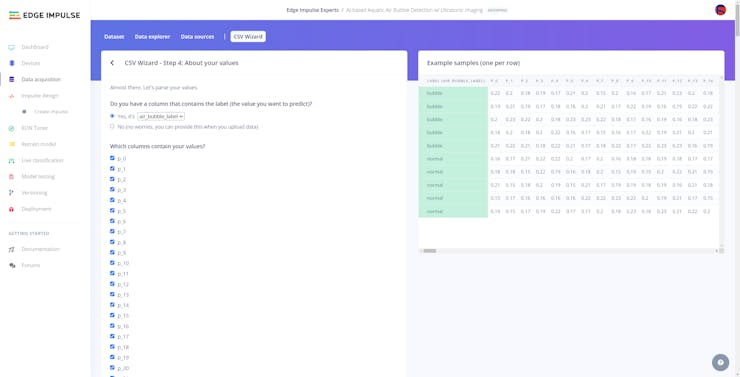

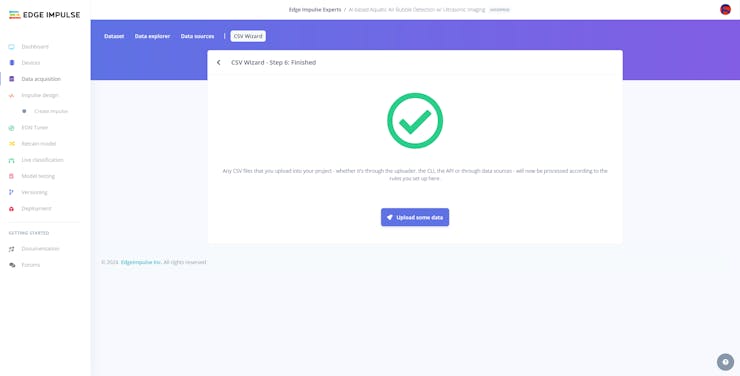

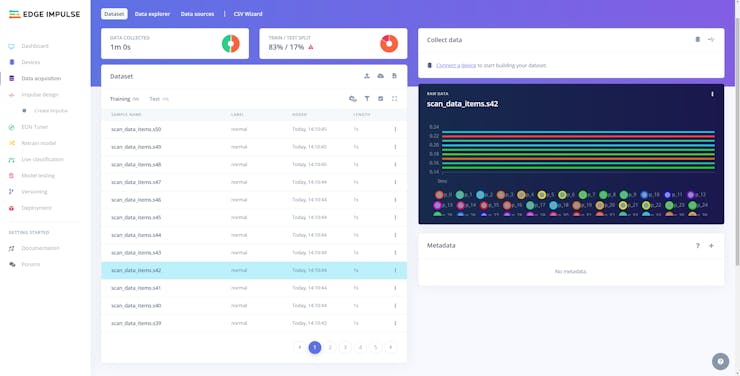

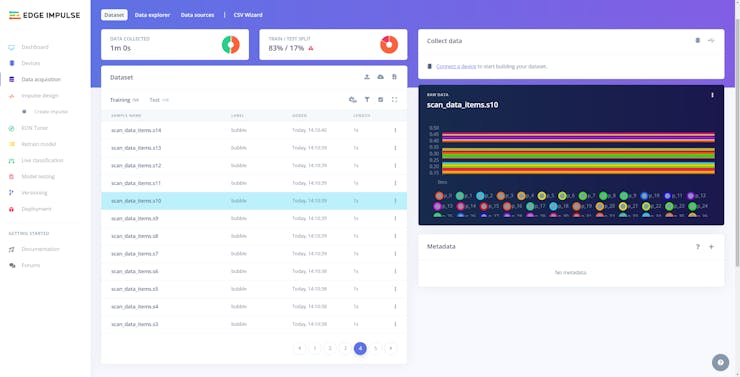

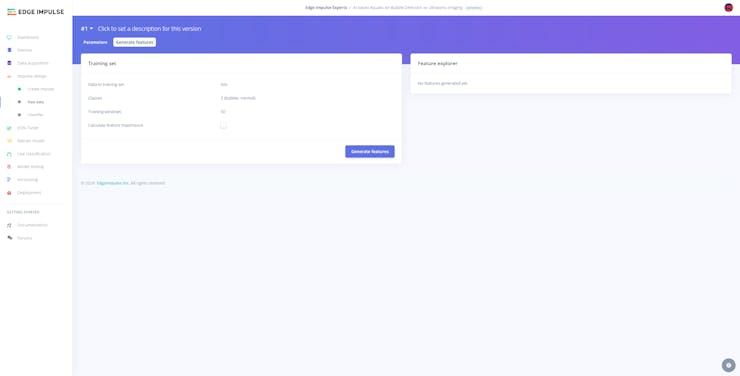

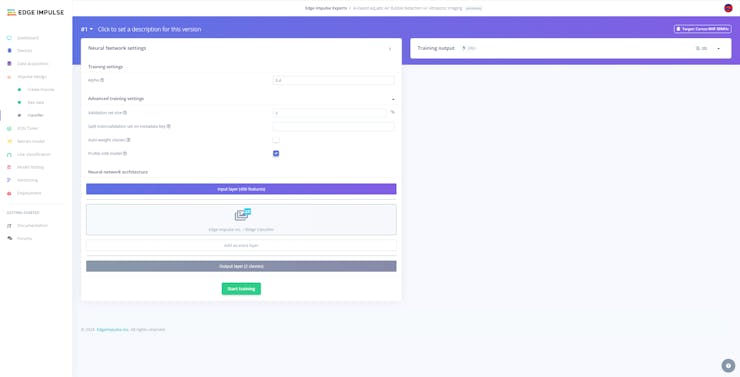

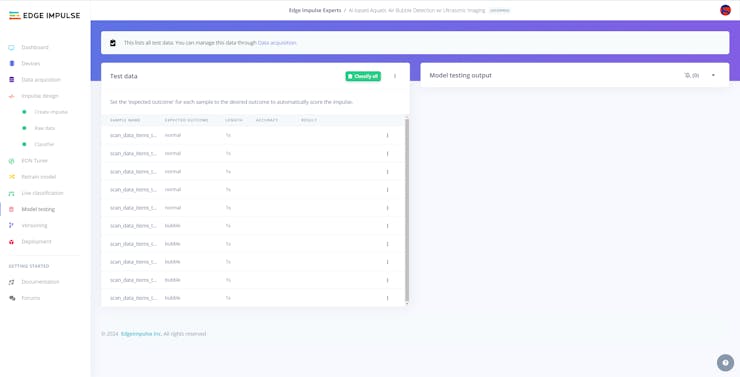

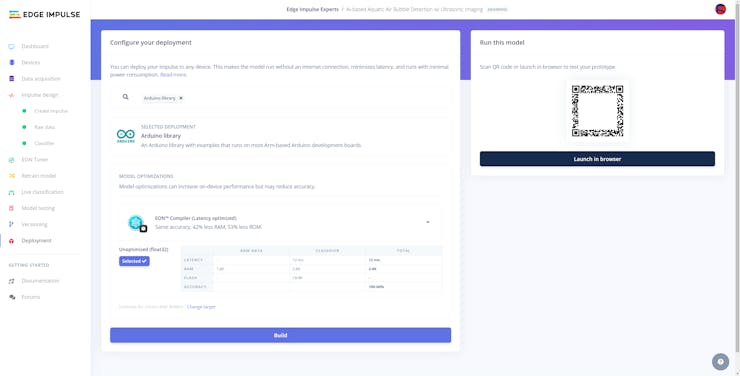

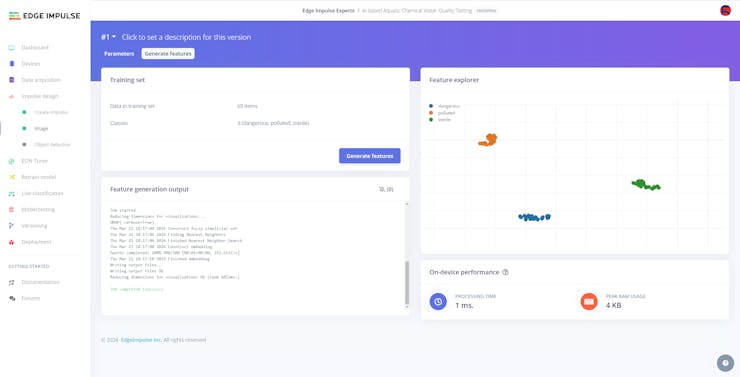

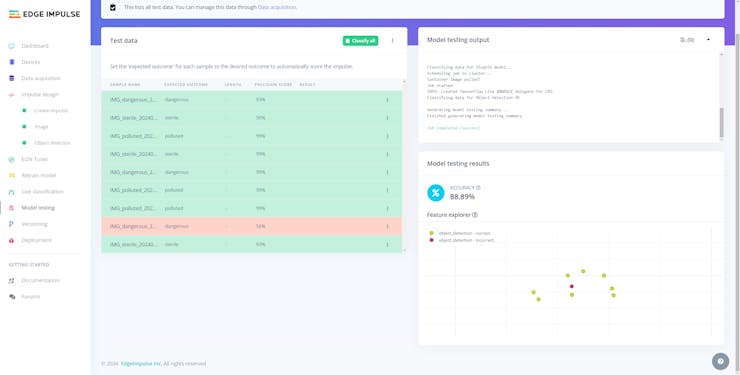

After constructing my ultrasonic scan data set, I built my artificial neural network (ANN) model with Edge Impulse to identify noxious air bubbles lurking in the underwater substrate. Considering the unique structure of ultrasonic imaging data, I employed the built-in Ridge classifier, provided by Edge Impulse Enterprise, as the model classifier. Strictly speaking, the Ridge classifier is a linear model with L2 regularization rather than a multi-layer network: as in scikit-learn, it converts the class labels to -1 and +1, treats the task as a Ridge (L2-penalized least-squares) regression, and predicts the class with the highest score, fitting one regression per class in the multi-class case. Since Edge Impulse is compatible with nearly all microcontrollers and development boards, even for scikit-learn linear models, I did not encounter any issues while uploading and running my model on Nano ESP32. As labels, I simply differentiated the ultrasonic scan samples depending on the presence of underwater air bubbles:

- normal

- bubble
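Edge Impulse trains the classifier internally, but the core idea of a Ridge classifier fits in a few lines. The sketch below is a dependency-free illustration of the scikit-learn-style formulation for two classes, not the Edge Impulse implementation: labels become -1/+1, the centered L2-penalized least-squares problem is solved in closed form, and the sign of the score picks the class. `RidgeBinaryClassifier` and the toy two-feature data are hypothetical; the real model sees 400 features per scan.

```python
def _solve(a, b):
    """Solve a x = b for a square system by Gaussian elimination with partial pivoting."""
    n = len(a)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in range(n - 1, -1, -1):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


class RidgeBinaryClassifier:
    """Binary Ridge classifier: labels -> {-1, +1}, then L2-penalized least squares."""

    def __init__(self, alpha=1.0, positive="bubble", negative="normal"):
        self.alpha = alpha
        self.positive, self.negative = positive, negative

    def fit(self, X, labels):
        n, d = len(X), len(X[0])
        y = [1.0 if lab == self.positive else -1.0 for lab in labels]
        # Center features and targets so the intercept is not penalized.
        self.x_mean = [sum(row[j] for row in X) / n for j in range(d)]
        y_mean = sum(y) / n
        Xc = [[row[j] - self.x_mean[j] for j in range(d)] for row in X]
        yc = [v - y_mean for v in y]
        # Normal equations: (Xc^T Xc + alpha * I) w = Xc^T yc
        gram = [[sum(r[i] * r[j] for r in Xc) + (self.alpha if i == j else 0.0)
                 for j in range(d)] for i in range(d)]
        rhs = [sum(r[i] * t for r, t in zip(Xc, yc)) for i in range(d)]
        self.w = _solve(gram, rhs)
        self.b = y_mean - sum(mu * w for mu, w in zip(self.x_mean, self.w))
        return self

    def decision(self, x):
        return sum(xi * wi for xi, wi in zip(x, self.w)) + self.b

    def predict(self, x):
        return self.positive if self.decision(x) > 0 else self.negative


X = [[1.0, 1.0], [1.2, 0.9], [5.0, 5.0], [5.1, 4.8]]
labels = ["normal", "normal", "bubble", "bubble"]
clf = RidgeBinaryClassifier(alpha=1.0).fit(X, labels)
print(clf.predict([1.1, 1.0]), clf.predict([4.9, 5.2]))
```

Because training reduces to one linear solve and inference to one dot product, this kind of model is cheap enough for a microcontroller, which is consistent with it running comfortably on Nano ESP32.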

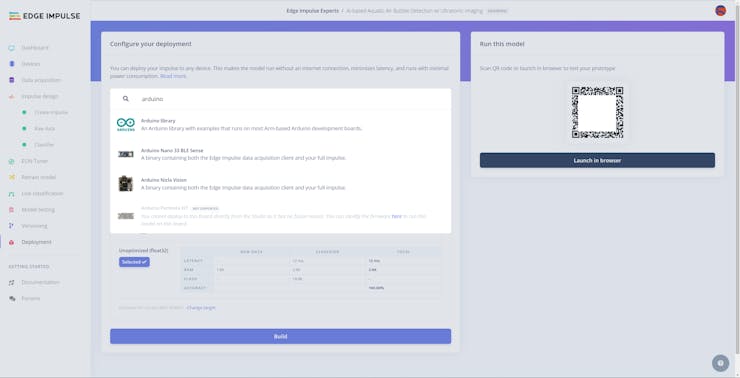

After training and testing my neural network model with the Ridge classifier, I deployed the model as an Arduino library and uploaded it to Nano ESP32. Therefore, the device can identify underwater air bubbles by running the model on-device, without any additional procedures or network latency.

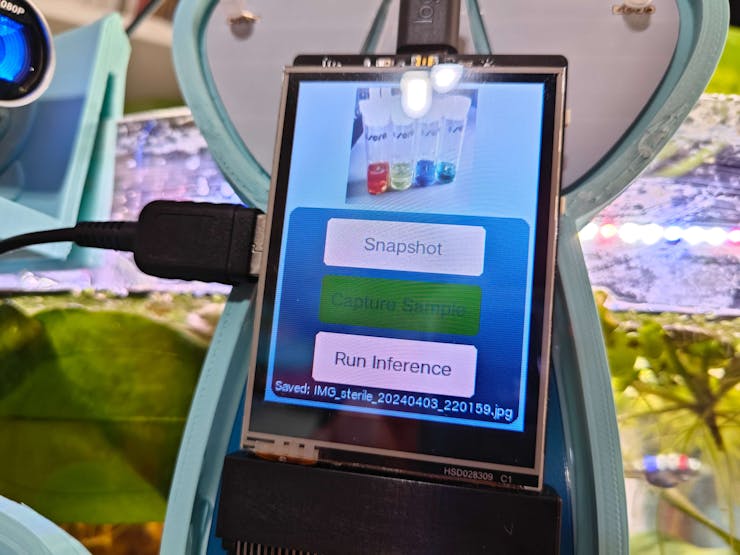

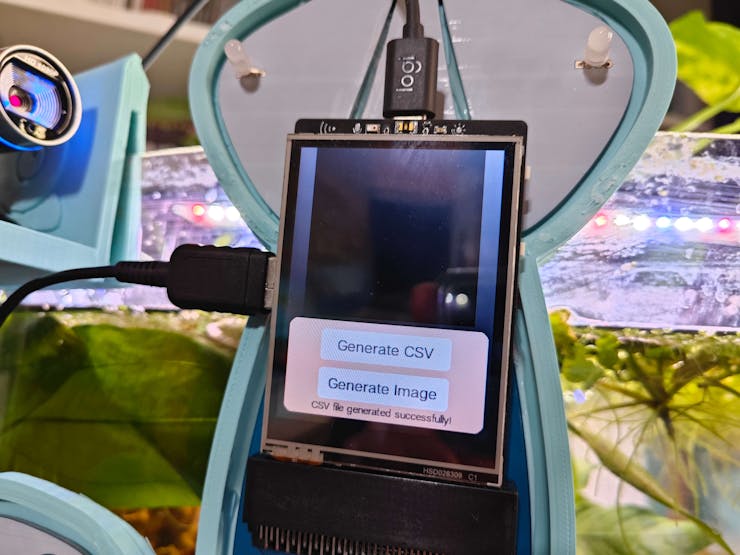

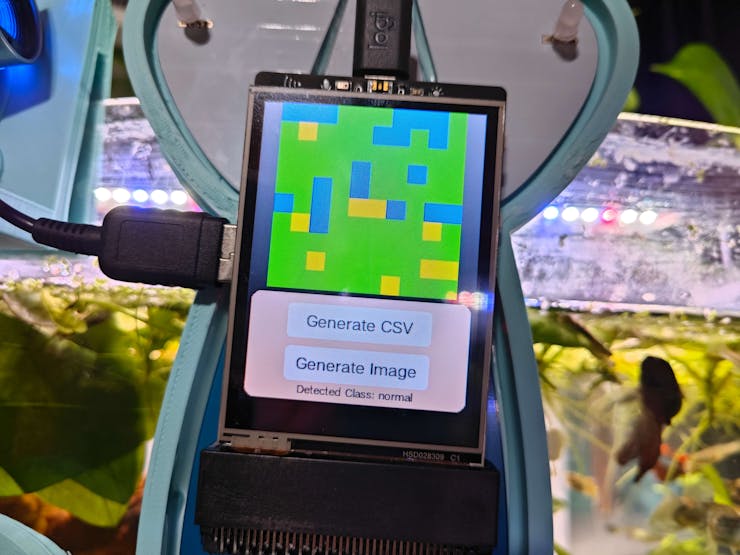

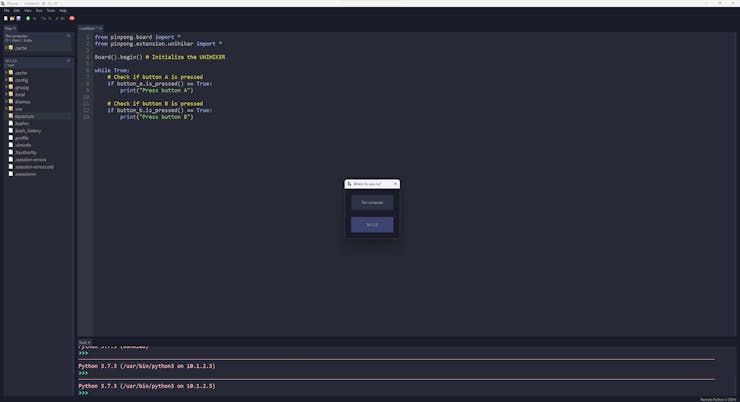

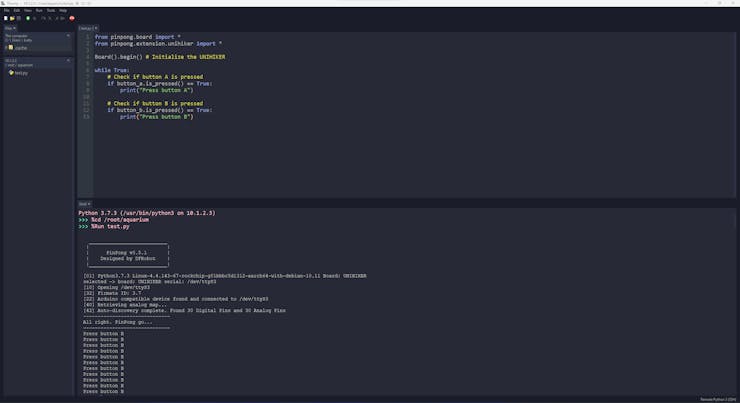

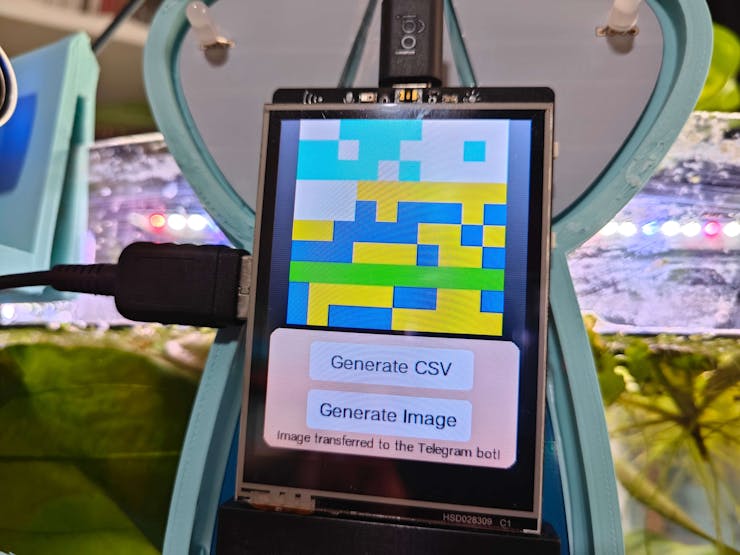

Since UNIHIKER is an exceptionally compact single-board computer providing a built-in touchscreen, integrated Python modules, and micro:bit-compatible edge connector support, I decided to utilize UNIHIKER to collect chemical water quality test result (color-coded) images and run my object detection model. To capture image samples of multiple water quality tests, I connected a high-quality USB webcam to UNIHIKER. Then, I programmed a feature-rich user interface (GUI) and displayed the interactive interface on the built-in touchscreen by employing the integrated Python modules on Thonny.

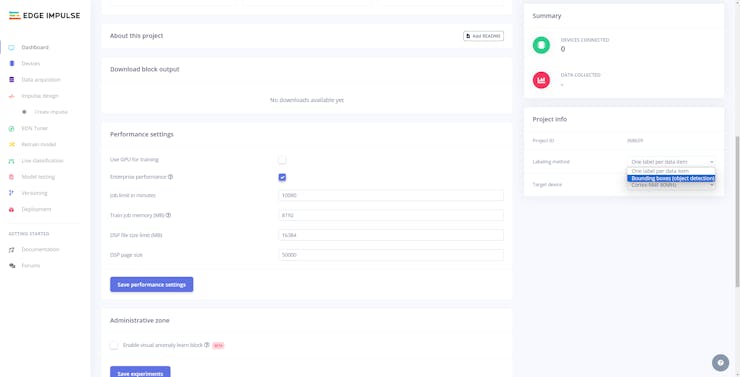

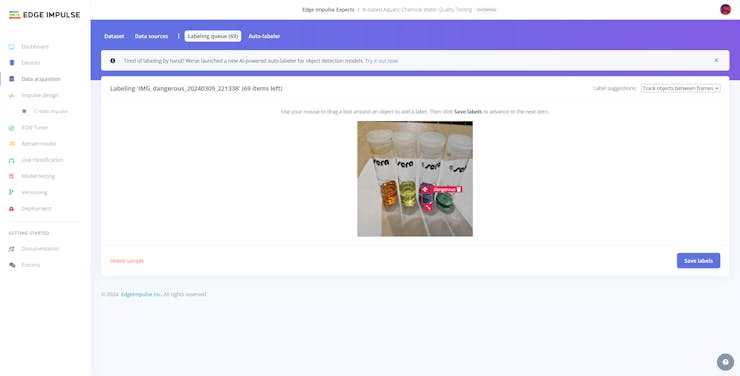

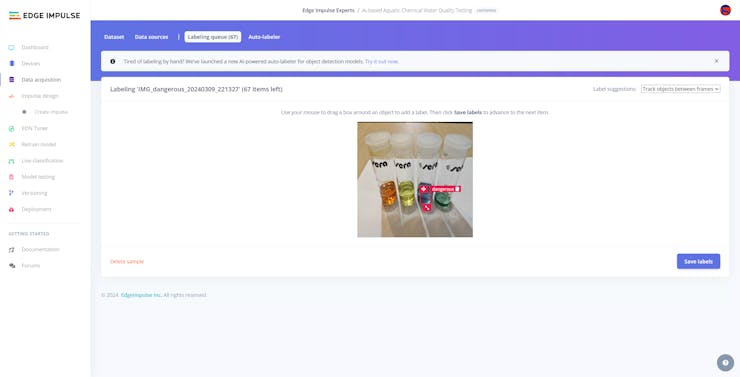

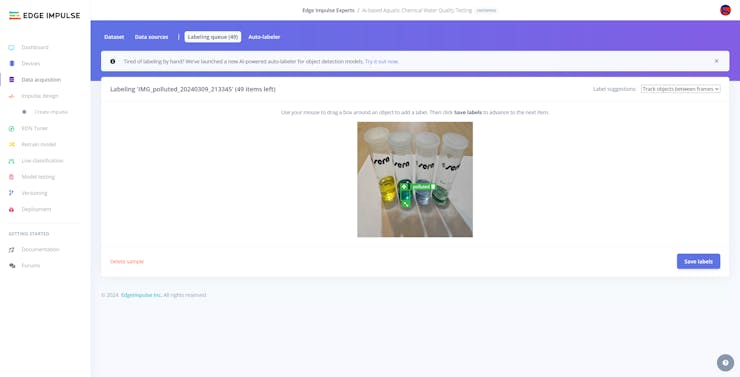

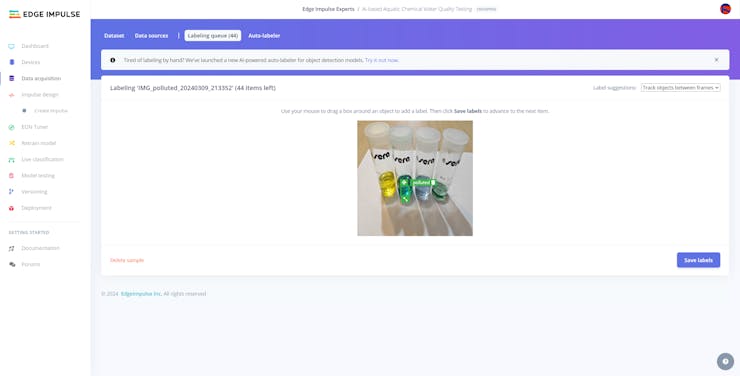

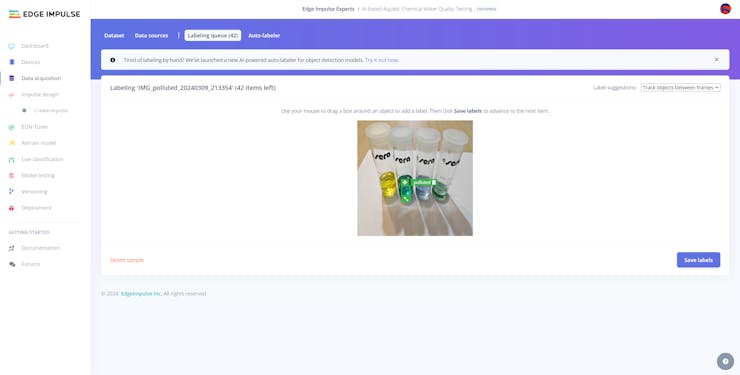

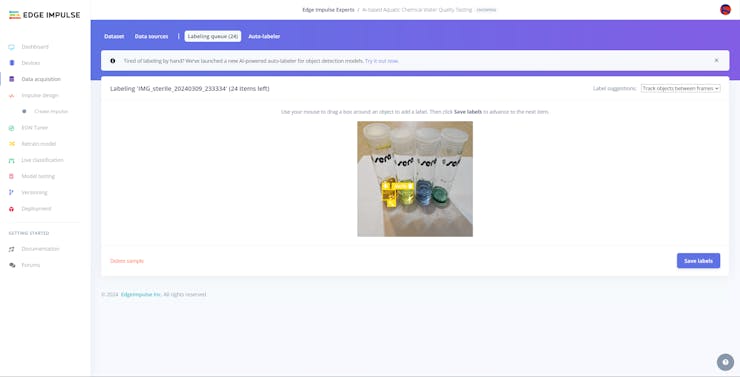

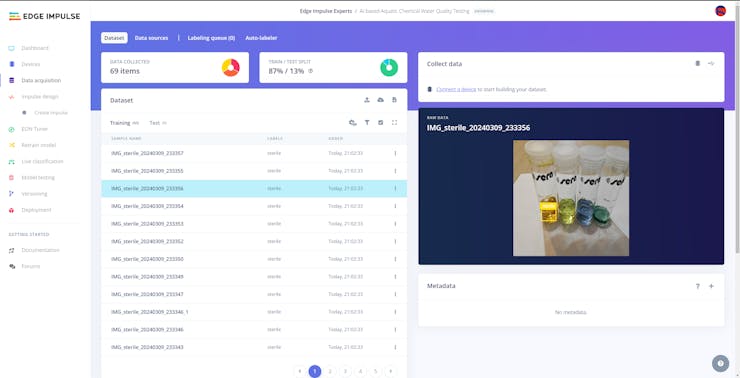

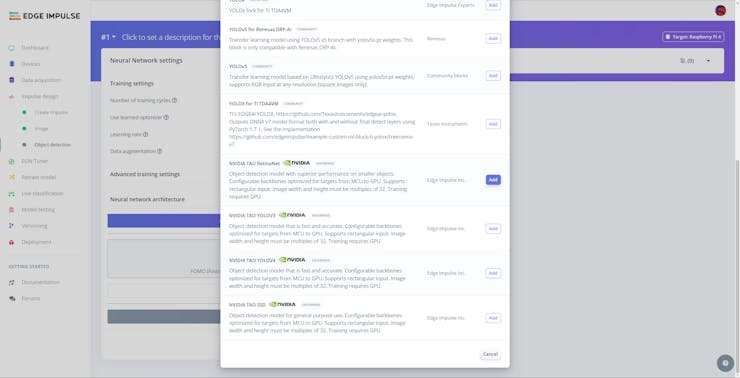

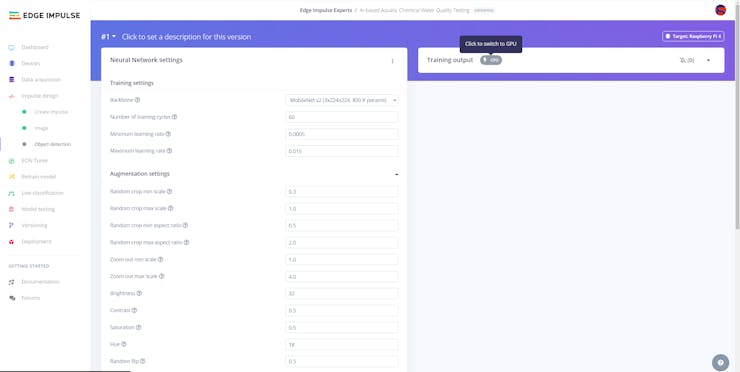

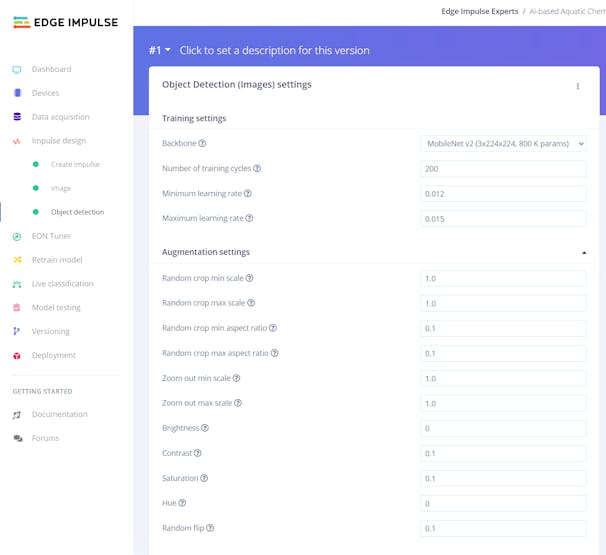

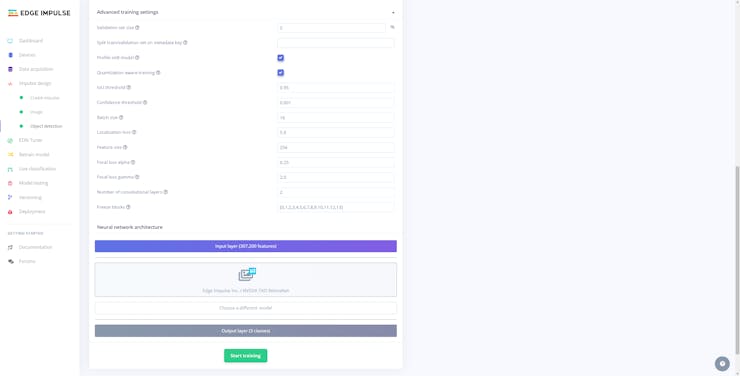

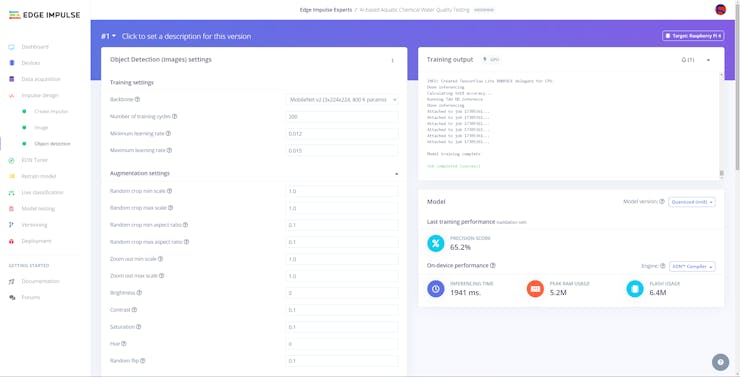

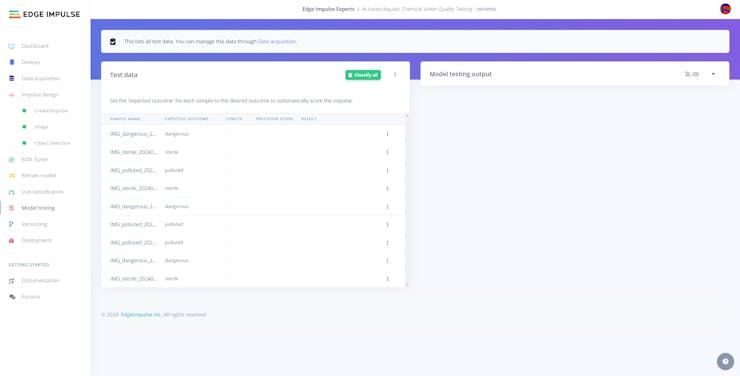

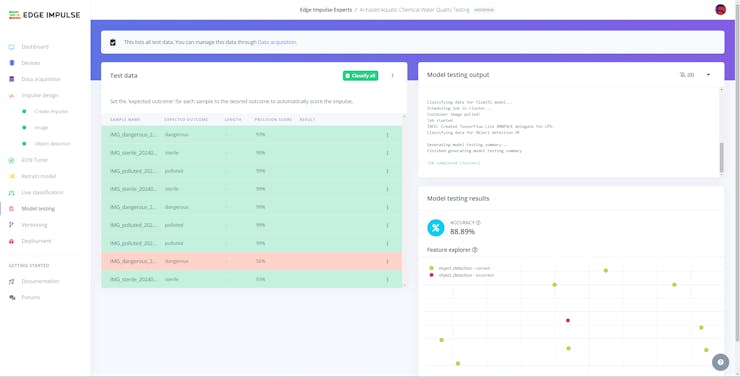

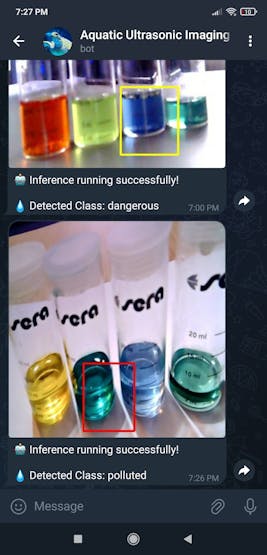

After constructing my image data set, I built my object detection model with Edge Impulse to assess water pollution levels based on the applied chemical water quality tests. Since detecting water pollution levels from color-coded chemical water quality tests is a complicated task, I decided to employ an advanced algorithm from the NVIDIA TAO Toolkit fully supported by Edge Impulse Enterprise: RetinaNet, a one-stage detector well suited to detecting smaller objects. Since Edge Impulse Enterprise provides configurable backbones for RetinaNet and is compatible with nearly every development board, I did not encounter any issues while uploading and running my NVIDIA TAO RetinaNet object detection model on UNIHIKER. As labels, I utilized empirically assigned pollution levels based on observing the chemical water tests:

- sterile

- dangerous

- polluted
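Part of what makes RetinaNet effective on small targets such as test-strip color patches is its focal loss, which down-weights the many easy background anchors so training concentrates on hard examples. The sketch below reproduces the published formula FL(p_t) = -α_t (1 - p_t)^γ log(p_t) with the paper's defaults α = 0.25 and γ = 2; it is an illustration of the loss, not TAO's internal code, and `focal_loss` is a hypothetical helper name.

```python
import math


def focal_loss(p, is_positive, alpha=0.25, gamma=2.0, eps=1e-7):
    """Binary focal loss for one anchor/class pair, as defined for RetinaNet.

    p: predicted probability that the anchor contains the class.
    """
    p = min(max(p, eps), 1.0 - eps)          # keep log() finite
    p_t = p if is_positive else 1.0 - p      # probability of the true outcome
    alpha_t = alpha if is_positive else 1.0 - alpha
    # (1 - p_t)^gamma shrinks the loss of confidently correct predictions.
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)


# An easy background anchor contributes almost nothing,
# while a missed positive keeps a large loss.
print(focal_loss(0.01, is_positive=False))
print(focal_loss(0.10, is_positive=True))
```

With γ = 0 and α = 0.5 the expression reduces to half the ordinary cross-entropy, which is a handy sanity check.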

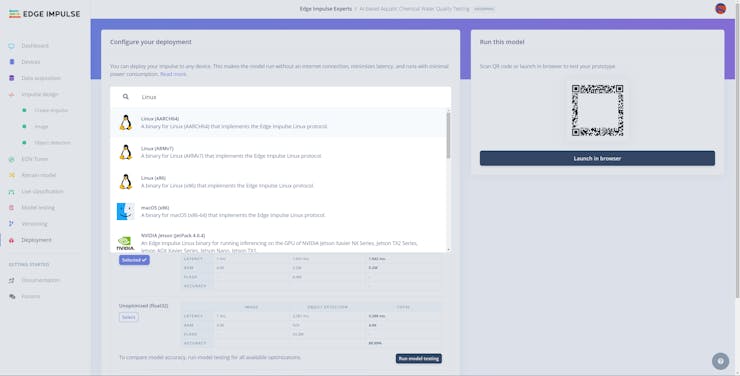

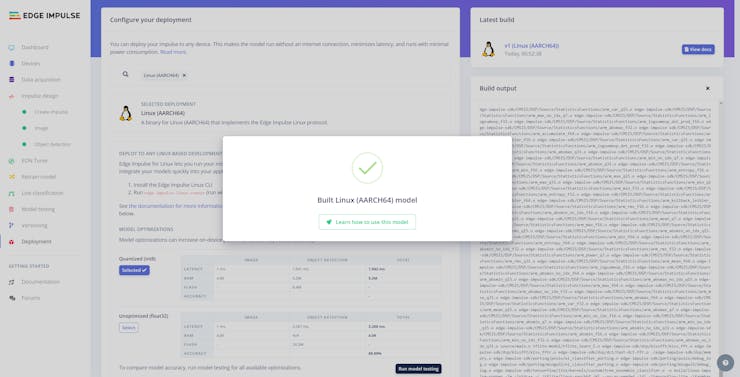

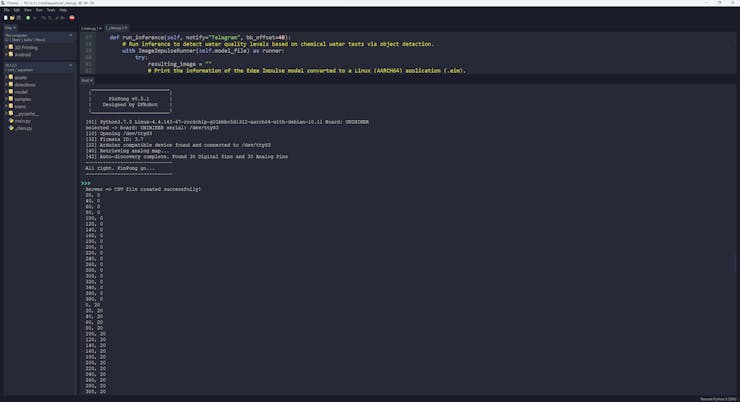

After training and testing my RetinaNet object detection model, I deployed the model as a Linux (AARCH64) application (.eim) and uploaded it to UNIHIKER. Thus, the device can assess water pollution levels based on the applied chemical water quality tests by running the object detection model locally, without any additional procedures.
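Once the .eim model runs, each inference returns a list of bounding boxes that has to be reduced to a single pollution class. The sketch below assumes the result layout used in the Edge Impulse Linux Python SDK examples (`result["result"]["bounding_boxes"]`, each box with `label`, `value`, `x`, `y`, `width`, `height`); verify it against your SDK version. `strongest_detection` and the 0.5 threshold are hypothetical choices, not the project's code.

```python
def strongest_detection(result, threshold=0.5):
    """Return (label, confidence, (x1, y1, x2, y2)) of the most confident box, or None.

    Assumes the dict layout returned by the Edge Impulse Linux SDK for
    object detection models; boxes below `threshold` are ignored.
    """
    boxes = result.get("result", {}).get("bounding_boxes", [])
    boxes = [b for b in boxes if b["value"] >= threshold]
    if not boxes:
        return None
    best = max(boxes, key=lambda b: b["value"])
    # Convert (x, y, width, height) to corner coordinates for drawing.
    corners = (best["x"], best["y"],
               best["x"] + best["width"], best["y"] + best["height"])
    return best["label"], best["value"], corners


sample = {"result": {"bounding_boxes": [
    {"label": "sterile", "value": 0.42, "x": 10, "y": 12, "width": 20, "height": 18},
    {"label": "polluted", "value": 0.88, "x": 40, "y": 30, "width": 24, "height": 22},
]}}
print(strongest_detection(sample))
```

The corner tuple is the form OpenCV's `cv2.rectangle` expects, so the same helper can feed the bounding-box drawing step.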

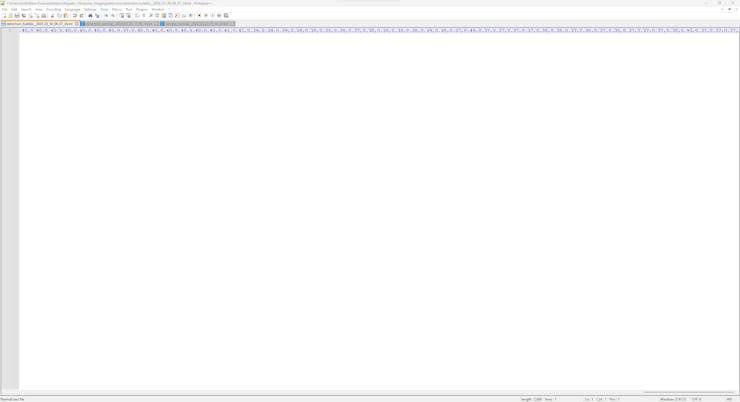

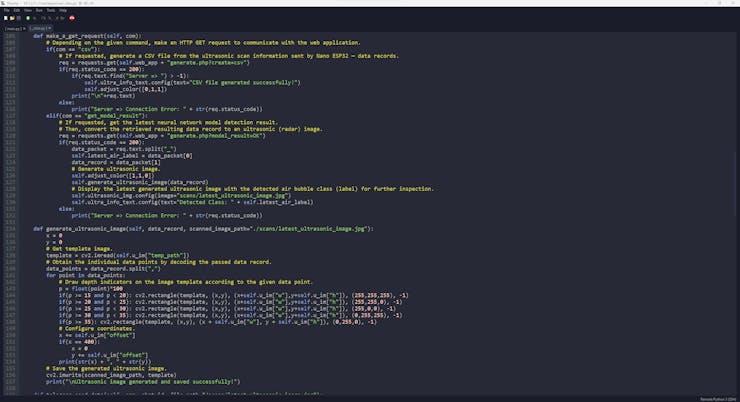

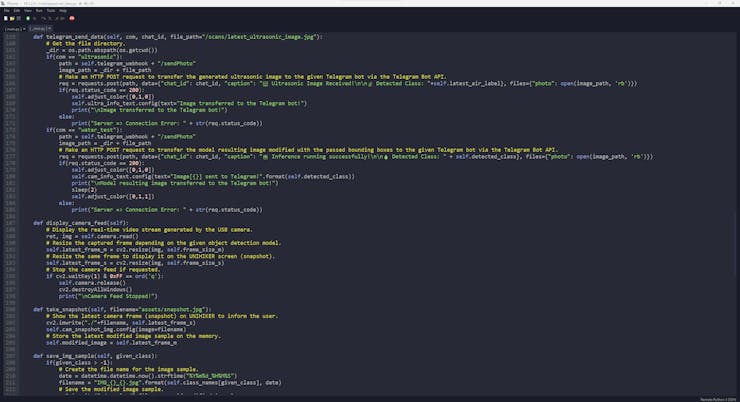

Even though this underwater air bubble and water pollution detection device is composed of two separate development boards, I focused on building full-fledged AIoT features with seamless integration and enabling the user to access the interconnected device features within feature-rich and easy-to-use interfaces. Therefore, I developed a versatile web application from scratch to obtain the generated ultrasonic scan buffer (20 x 20, i.e., 400 data points) with the selected air bubble class via an HTTP POST request from Nano ESP32 and save the received information as a text (TXT) file. Furthermore, similar to the ultrasonic scan samples, the web application can save model detection results (the buffer passed to the neural network model and the detected label) as text files in a separate folder.

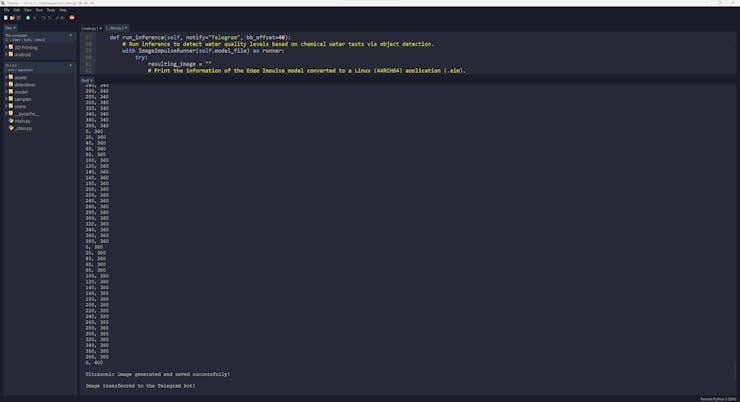

Then, I employed the web application to communicate with UNIHIKER to generate a pre-formatted CSV file from the stored sample text files (ultrasonic scan data records) and transfer the latest neural network model detection result (ultrasonic scan buffer and the detected label) via an HTTP GET request.
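The web application itself is written in PHP; the CSV-merging idea is shown here in Python for consistency with the UNIHIKER code. This is a sketch under stated assumptions: each sample file is named `<label>_<anything>.txt` and stores the 400 readings comma-separated, and the header names `p_0` to `p_399` plus `label` are hypothetical, not the project's actual format.

```python
import csv
import os


def build_scan_csv(sample_dir, csv_path, points=400):
    """Merge ultrasonic scan TXT samples into one CSV (hypothetical layout).

    Assumes each file is named '<label>_<anything>.txt' and holds the
    scan buffer as comma-separated numbers. Returns the number of rows written.
    """
    rows = 0
    with open(csv_path, "w", newline="") as out:
        writer = csv.writer(out)
        writer.writerow([f"p_{i}" for i in range(points)] + ["label"])
        for name in sorted(os.listdir(sample_dir)):
            if not name.endswith(".txt"):
                continue
            label = name.split("_", 1)[0]
            with open(os.path.join(sample_dir, name)) as f:
                values = [v.strip() for v in f.read().split(",") if v.strip()]
            if len(values) != points:
                continue  # skip incomplete scans instead of misaligning columns
            writer.writerow(values + [label])
            rows += 1
    return rows
```

Skipping files with the wrong number of values keeps every CSV row aligned with the 400-column header, which matters when the file is later imported as training data.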

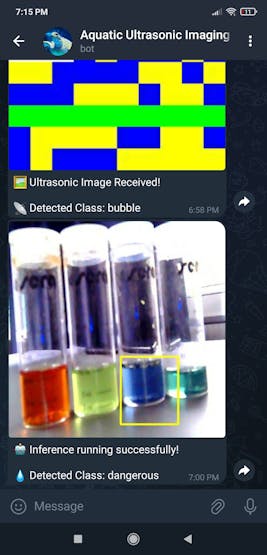

As mentioned repeatedly, each generated ultrasonic scan buffer provides 400 data points, corresponding to a 20 x 20 ultrasonic image; however, Nano ESP32 only passes the buffer to the neural network model with the Ridge classifier and does not render it as an image. Therefore, after receiving the latest model detection result via the web application, I employed UNIHIKER to modify a template image (black square) with the built-in OpenCV functions, encoding each buffer value as a pixel, and saved the modified image as a JPG file to visualize the latest aquatic ultrasonic scan.
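The buffer-to-image step boils down to mapping 400 distance values onto 20 rows of 20 gray levels. The sketch below builds that grid directly instead of editing a template image; min-max normalization to 0-255 is an assumption, since the exact pixel encoding used in the project is not specified, and `scan_to_pixels` is a hypothetical name.

```python
def scan_to_pixels(buffer, size=20):
    """Map a flat ultrasonic scan buffer to a size x size grid of 0-255 gray levels.

    Min-max normalization is an assumption; the project's own encoding may differ.
    """
    if len(buffer) != size * size:
        raise ValueError(f"expected {size * size} values, got {len(buffer)}")
    lo, hi = min(buffer), max(buffer)
    span = (hi - lo) or 1.0  # avoid division by zero on a perfectly flat scan
    gray = [round(255 * (v - lo) / span) for v in buffer]
    # Row-major reshape: values 0-19 form the first row, 20-39 the second, etc.
    return [gray[r * size:(r + 1) * size] for r in range(size)]
```

With NumPy and OpenCV available, the grid can be converted with `numpy.array(grid, dtype=numpy.uint8)`, enlarged with `cv2.resize(..., interpolation=cv2.INTER_NEAREST)` so each reading stays a crisp square, and saved with `cv2.imwrite`.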

Since the RetinaNet object detection model provides accurate bounding box measurements, I also utilized UNIHIKER to modify the resulting images to draw the associated bounding boxes and save the modified resulting images as JPG files for further inspection.

After conducting experiments with both models and producing significant results, I decided to set up a basic Telegram bot to inform the user of the latest model detection results by sending the latest generated ultrasonic image with the detected air bubble class and the latest modified resulting image of the object detection model. Since Telegram is a cross-platform, cloud-based messaging service with a fully supported HTTP-based Bot API, a bot can send images uploaded directly from local storage without requiring a hosting service. Thus, I was able to transfer the modified images (of both models) from UNIHIKER to the Telegram bot without hosting them on a public server or configuring my own SSL certificate; the requests themselves still go to the Bot API over HTTPS.
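Uploading a local image uses the Bot API's `sendPhoto` method with a multipart/form-data body containing `chat_id`, `photo`, and an optional `caption`. The sketch below assumes the third-party `requests` package is installed on UNIHIKER; the helper names are hypothetical, and the token and chat ID are placeholders you obtain from BotFather and your chat.

```python
TELEGRAM_API = "https://api.telegram.org"


def build_send_photo_request(token, chat_id, caption):
    """Return the sendPhoto URL and form fields for the Telegram Bot API."""
    url = f"{TELEGRAM_API}/bot{token}/sendPhoto"
    data = {"chat_id": str(chat_id), "caption": caption}
    return url, data


def send_photo(token, chat_id, image_path, caption):
    """Upload a local image as multipart/form-data (requires 'requests')."""
    import requests  # imported lazily so the builder above works without it

    url, data = build_send_photo_request(token, chat_id, caption)
    with open(image_path, "rb") as photo:
        response = requests.post(url, data=data, files={"photo": photo}, timeout=30)
    response.raise_for_status()
    return response.json()
```

A call such as `send_photo(TOKEN, CHAT_ID, "scan.jpg", "Detected: bubble")` would push the latest ultrasonic image with its class as the caption.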

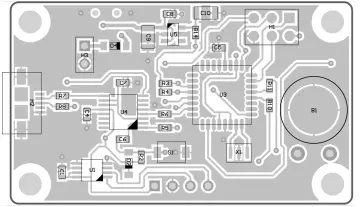

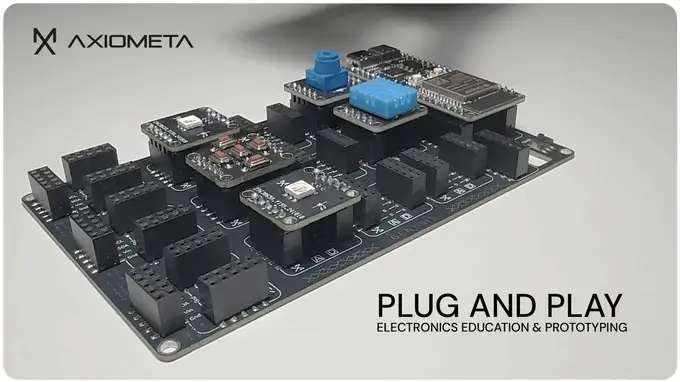

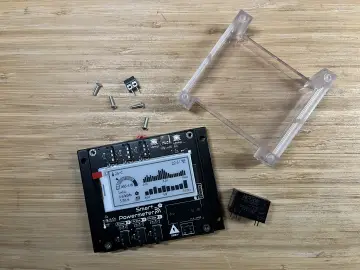

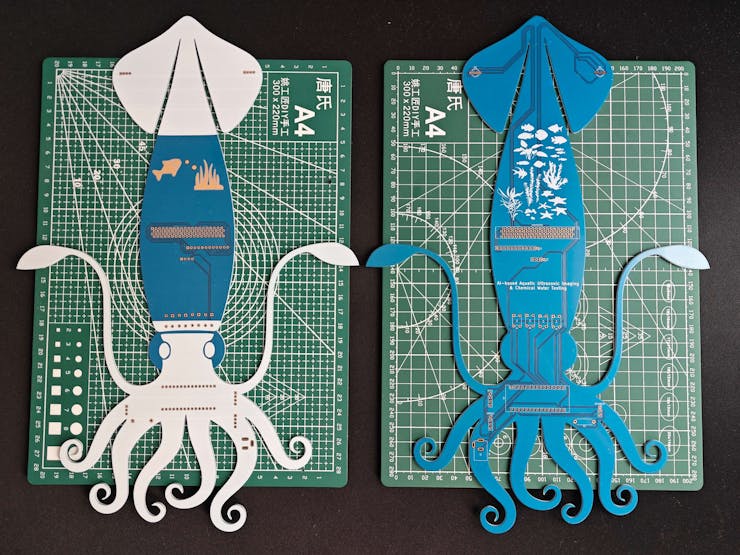

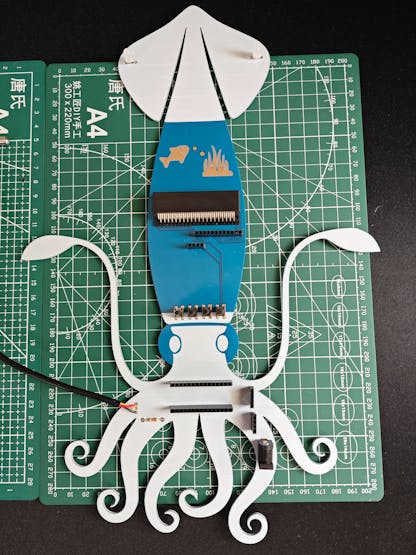

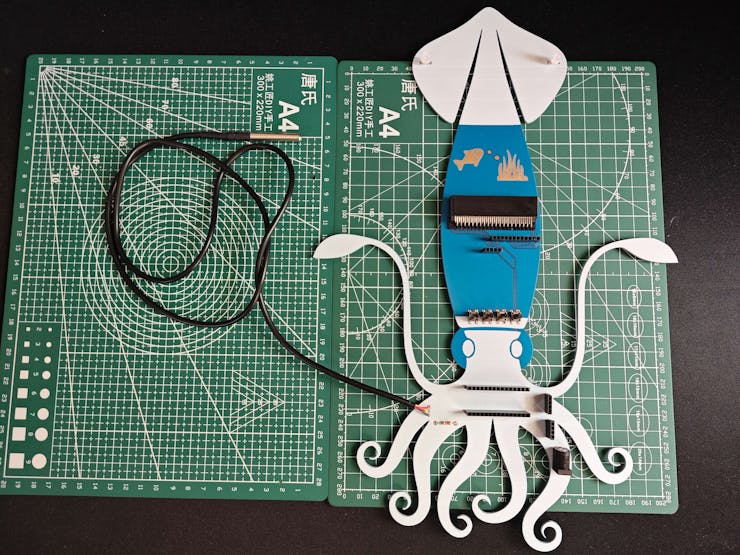

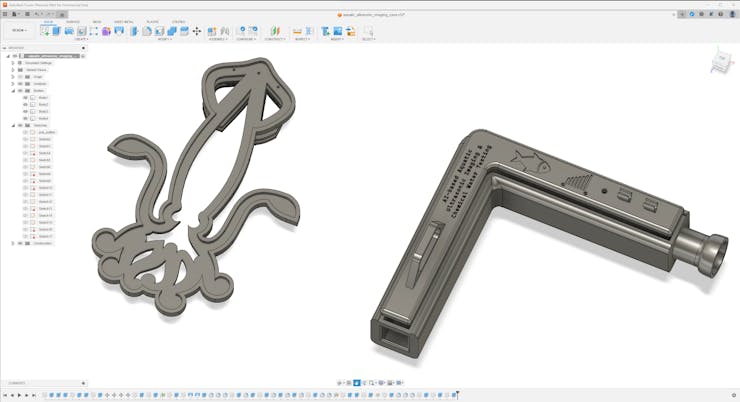

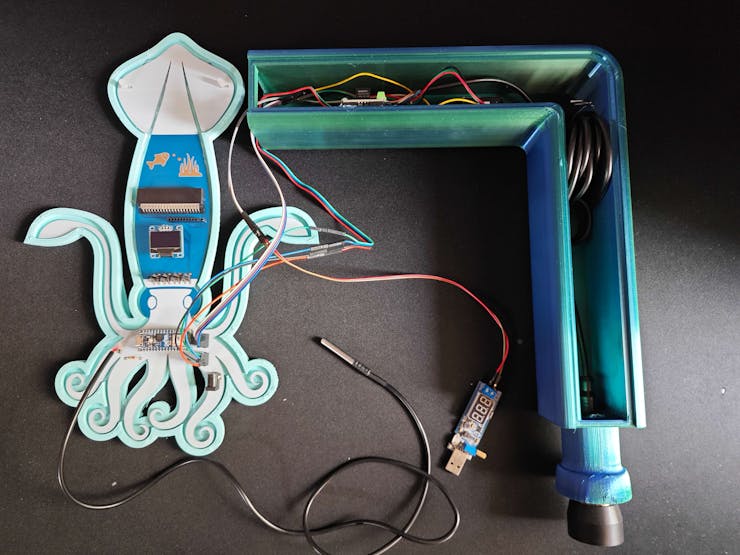

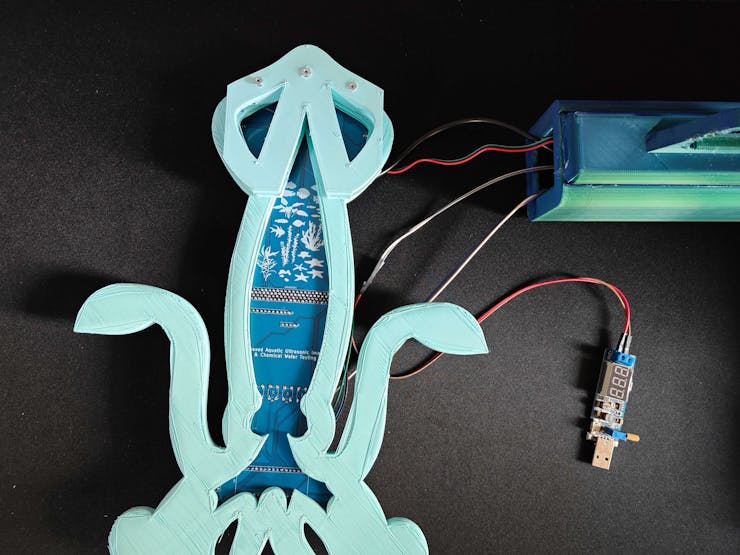

Considering the tricky operating conditions near an aquaculture facility and my goal of a single-unit device structure, I decided to design a unique PCB after testing all connections of the prototype via breadboards. Since I wanted my PCB design to represent the enchanting and mystical underwater aquatic life, I decided to design a Squid-inspired PCB. Thanks to the micro:bit-compatible edge connector, I was able to attach all components and development boards to the PCB smoothly.

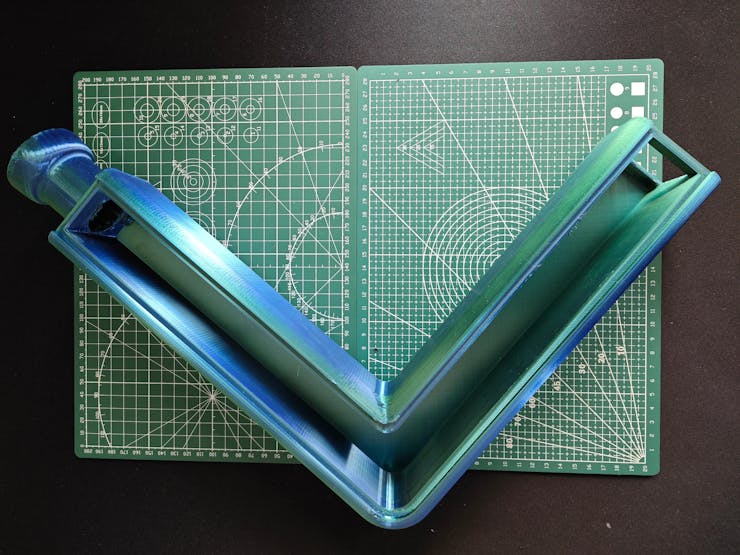

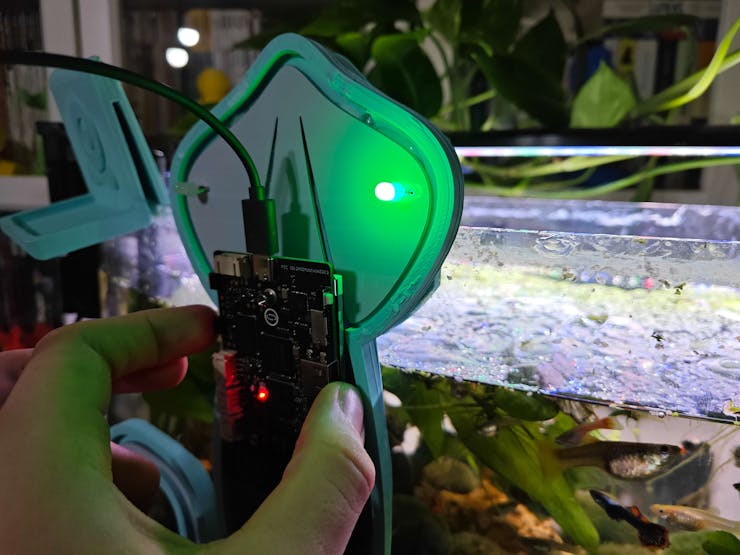

Finally, to make the device as robust and compact as possible, I designed a complementary Aquatic-themed case (3D printable) with a modular holder encasing the PCB outline, a hang-on aquarium connector mountable to the PCB holder, a hang-on camera holder to place the high-quality USB webcam when standing idle, and a removable top cover allowing the user to attach sensors to the assigned slots. The semicircular-shaped mounting brackets are specifically designed to hold the waterproof temperature sensor.

So, this is my project in a nutshell 😃

In the following steps, you can find more detailed information on coding, collecting ultrasonic scan information, capturing chemical water quality test result images, building neural network and object detection models with Edge Impulse Enterprise, running the trained models on Nano ESP32 and UNIHIKER, developing a versatile web application, and setting up a Telegram bot to inform the user via push notifications.

Step 1: Designing and soldering the Squid-inspired PCB

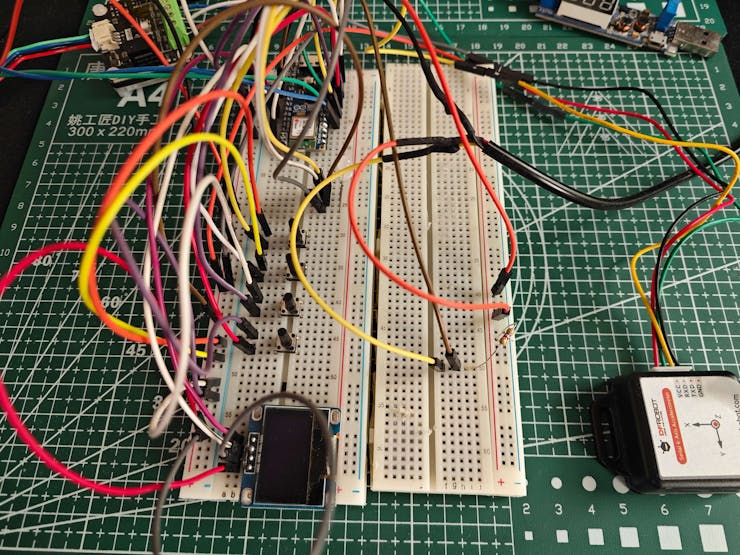

Before prototyping my Squid-inspired PCB design, I inspected the detailed pin reference of Nano ESP32, the micro:bit connector-based UNIHIKER pinout, and the supported transmission protocols of the measurement sensors. Then, I checked the wireless (Wi-Fi) communication quality between Nano ESP32, UNIHIKER, and the web application while transferring and receiving data packets.

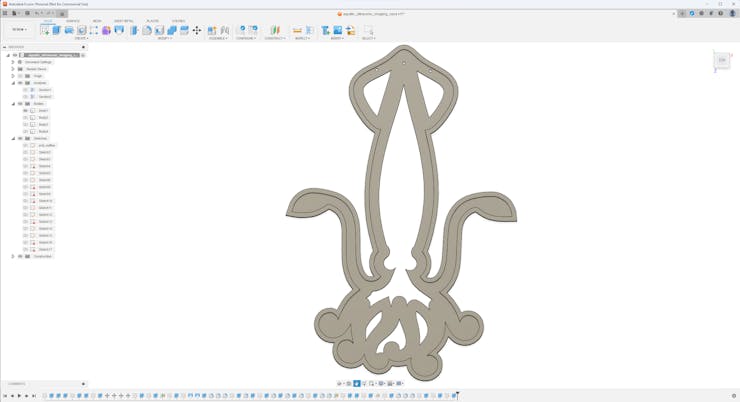

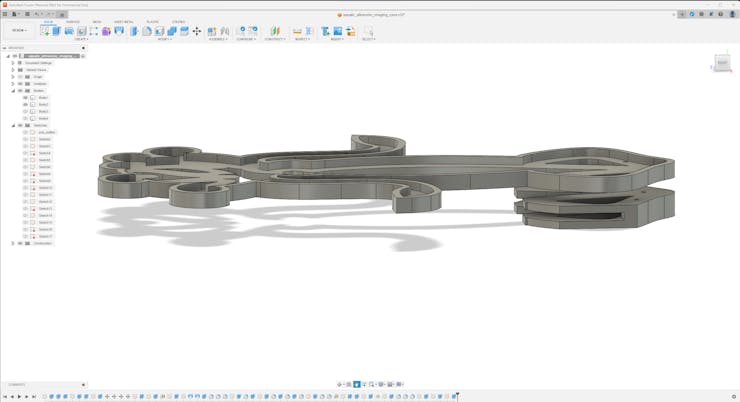

Then, I designed my Squid-inspired PCB by utilizing Autodesk Fusion 360 and KiCad. Since I wanted to design a distinctive 3D-printed hang-on holder to simplify the PCB placement on the simulated fish farm (aquarium), I created the PCB outline on Fusion 360 and then imported the outline file (DXF) to KiCad. As mentioned earlier, I chose the squid as the motif of my PCB design since I wanted my device to resemble the enchanting underwater atmosphere.

To replicate this air bubble and water pollution detection device, you can download the Gerber file below and order my PCB design from ELECROW directly.

Normally, it would not be possible to embed most of the commercial single-board computers directly into a PCB without applying arduous disassembly methods. Nevertheless, UNIHIKER provides a micro:bit-compatible connector to access the GPIO interface of the microcontroller coprocessor (RISC-V). Therefore, I was able to embed UNIHIKER as the centerpiece of my PCB by utilizing a micro:bit-compatible edge connector from Kitronik.

If you want to add the Kitronik edge connector to your PCB designs, you can inspect its KiCad component library and footprint.

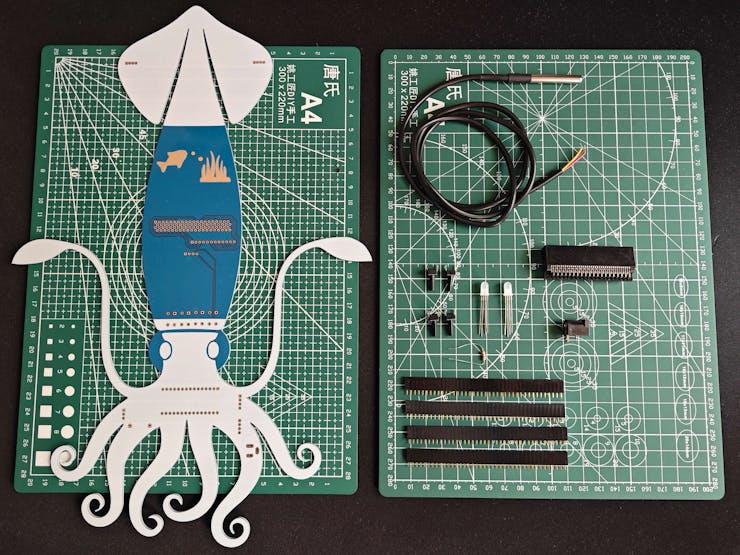

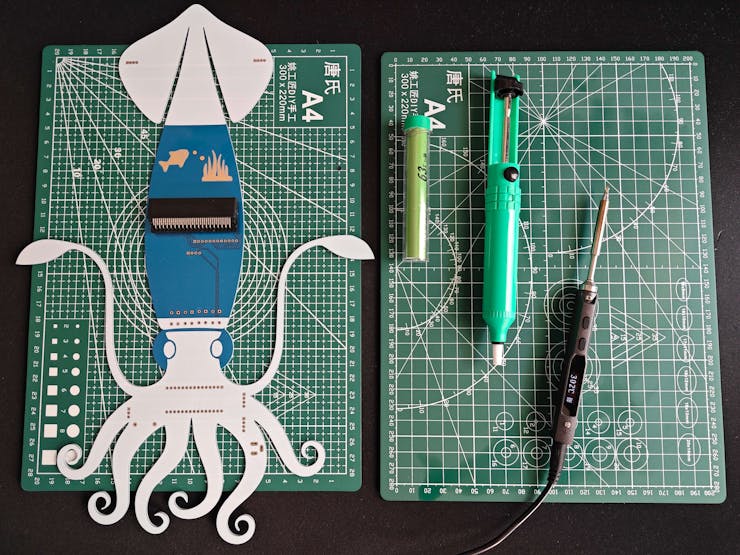

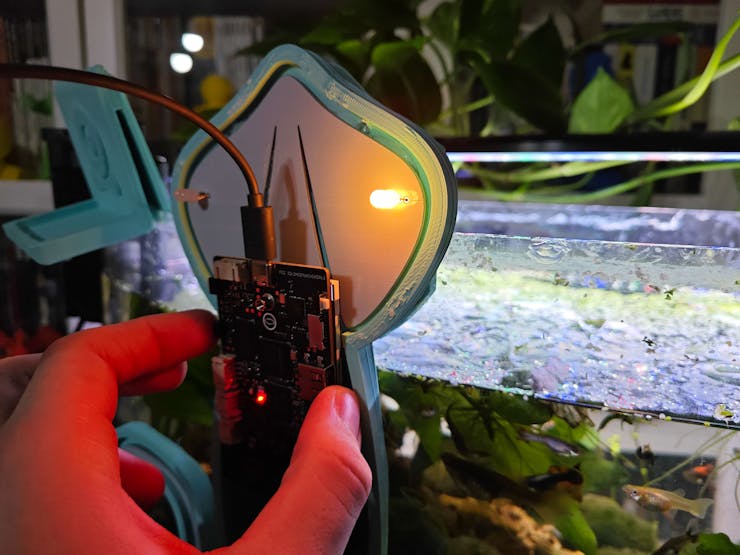

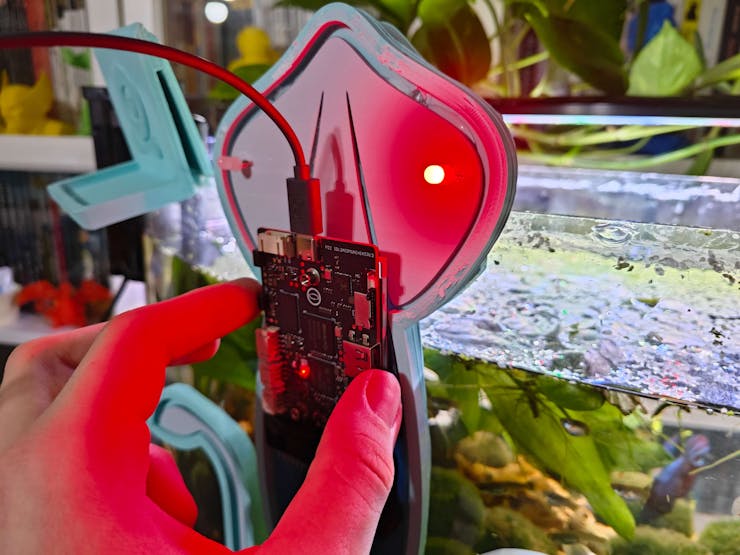

By utilizing a TS100 soldering iron, I attached headers (female), a DS18B20 waterproof temperature sensor, a Kitronik micro:bit-compatible edge connector, pushbuttons (6x6), 5 mm common anode RGB LEDs, a 4.7K resistor, and a power jack to the Squid PCB.

📌 Component list on the PCB:

A1 (Headers for Arduino Nano ESP32)

UNIHIKER1 (Kitronik micro:bit-compatible Edge Connector)

DS18B20 (DS18B20 Waterproof Temperature Sensor)

URM15 (Headers for RS485-to-UART Signal Adapter Module)

ACC1 (Headers for Serial 6-Axis Accelerometer)

S1 (Headers for SSD1306 OLED Display)

R1 (4.7K Resistor)

K1, K2, K3, K4 (6x6 Pushbutton)

D1, D2 (5 mm Common Anode RGB LED)

J2 (Headers for Available UNIHIKER Pins)

J1 (Power Jack)

Step 1.1: Making connections and adjustments

```cpp
// Connections
// Arduino Nano ESP32 :
//
// URM15 - 75KHZ Ultrasonic Sensor via RS485-to-UART Signal Adapter Module
// D3 ------------------------ TX
// D2 ------------------------ RX
// 3.3V ------------------------ +
// GND ------------------------ -
//
// Serial 6-Axis Accelerometer
// 3.3V ------------------------ VCC
// D5 ------------------------ RXD
// D4 ------------------------ TXD
// GND ------------------------ GND
//
// DS18B20 Waterproof Temperature Sensor
// A1 ------------------------ Data
//
// SSD1306 OLED Display (128x64)
// A4 ------------------------ SDA
// A5 ------------------------ SCL
//
// Control Button (A)
// D6 ------------------------ +
// Control Button (B)
// D7 ------------------------ +
// Control Button (C)
// D8 ------------------------ +
// Control Button (D)
// D9 ------------------------ +
//
// 5mm Common Anode RGB LED
// D10 ------------------------ R
// D11 ------------------------ G
// D12 ------------------------ B
```

```cpp
// Connections
// UNIHIKER :
//
// 5mm Common Anode RGB LED
// P4 ------------------------ R
// P5 ------------------------ G
// P6 ------------------------ B
```

#️⃣ Although the URM15 is an exceptional ultrasonic ranging sensor providing an IP65 waterproof probe with a measuring range of 30 cm - 500 cm, it does not output a TTL-level serial signal; it communicates over RS485 using the standard Modbus-RTU protocol. Thus, I utilized an RS485-to-UART signal adapter module (active-isolated) to obtain the ultrasonic distance measurements from the sensor and transfer them to Nano ESP32 via serial communication. Since Nano ESP32 cannot supply the stable 12V required by the URM15 ultrasonic sensor, I connected a USB buck-boost converter board to an external battery to obtain 12V and power the ultrasonic sensor through the signal adapter module.
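Because the adapter only converts RS485 line levels to UART, the Modbus-RTU framing has to be handled in firmware, usually by a Modbus library. The sketch below shows, in Python for readability, what a single "Read Holding Registers" (function 0x03) request looks like, including the CRC-16/MODBUS checksum appended low byte first. The slave address, start register, and count in the example are placeholders, not the URM15's documented register map; check the sensor's datasheet for those.

```python
def modbus_crc16(frame: bytes) -> int:
    """CRC-16/MODBUS: initial value 0xFFFF, reflected polynomial 0xA001."""
    crc = 0xFFFF
    for byte in frame:
        crc ^= byte
        for _ in range(8):
            if crc & 1:
                crc = (crc >> 1) ^ 0xA001
            else:
                crc >>= 1
    return crc


def read_holding_registers(slave: int, start: int, count: int) -> bytes:
    """Build a Modbus-RTU 'Read Holding Registers' (0x03) request frame."""
    pdu = bytes([slave, 0x03, start >> 8, start & 0xFF, count >> 8, count & 0xFF])
    crc = modbus_crc16(pdu)
    return pdu + bytes([crc & 0xFF, crc >> 8])  # CRC is transmitted low byte first


# Placeholder request: slave 0x01, register 0x0000, one register.
print(read_holding_registers(0x01, 0x0000, 1).hex(" "))
```

Running the CRC over a complete frame, checksum included, yields 0, which is a convenient way to validate the sensor's replies.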

#️⃣ Since the URM15 ultrasonic sensor supports external temperature compensation to offset the effect of fluctuating ambient temperature on the speed of sound, I utilized a DS18B20 waterproof temperature sensor to tune the ultrasonic sensor. As shown in the schematic below, before connecting the DS18B20 waterproof temperature sensor to Nano ESP32, I attached a 4.7K resistor as a pull-up from the DATA line to the VCC line of the sensor, since the open-drain 1-Wire data line needs a pull-up to communicate reliably.
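On Arduino, libraries such as OneWire and DallasTemperature usually handle the DS18B20 readout. The sketch below only illustrates what the final conversion does, following the datasheet's 12-bit format: the two scratchpad temperature bytes form a sign-extended 16-bit value worth 1/16 °C per bit. `ds18b20_raw_to_celsius` is a hypothetical helper name.

```python
def ds18b20_raw_to_celsius(lsb: int, msb: int) -> float:
    """Convert the DS18B20 scratchpad temperature bytes (12-bit mode) to deg C."""
    raw = (msb << 8) | lsb
    if raw & 0x8000:      # sign bit set: two's complement negative value
        raw -= 0x10000
    return raw / 16.0     # 12-bit resolution: 0.0625 deg C per LSB


print(ds18b20_raw_to_celsius(0x91, 0x01))  # datasheet example 0x0191
```

The datasheet's reference values (0x0550 = +85 °C, 0xFC90 = -55 °C) are easy checks that the sign handling is right.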

#️⃣ To detect the movement of the ultrasonic sensor probe underwater while collecting data, I utilized a 6-axis accelerometer supporting UART communication. Since I employed Nano ESP32 to pass the collected data buffers directly to the web application, I did not need to connect an external storage module such as a microSD card module.
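How the accelerometer gates the 400 readings is not spelled out here, so the following is only one plausible scheme with hypothetical names (`ScanBuffer`, `offer`, `threshold`): a distance reading is accepted only when the acceleration magnitude has changed by more than a threshold since the last accepted reading, taken as a rough sign that the probe has moved to a new spot. Acceleration is not position, so a real implementation might instead rely on the tilt angles the 6-axis sensor reports.

```python
import math


class ScanBuffer:
    """Collect one distance reading per probe position until size x size points.

    Hypothetical gating: a reading is accepted only when the acceleration
    magnitude differs from the last accepted one by more than `threshold` (g).
    """

    def __init__(self, size=20, threshold=0.05):
        self.capacity = size * size
        self.threshold = threshold
        self.points = []
        self._last_mag = None

    def offer(self, distance_cm, ax, ay, az):
        """Try to add a reading; return True if it was accepted."""
        if self.full():
            return False
        mag = math.sqrt(ax * ax + ay * ay + az * az)
        moved = self._last_mag is None or abs(mag - self._last_mag) > self.threshold
        if moved:
            self.points.append(distance_cm)
            self._last_mag = mag
        return moved

    def full(self):
        return len(self.points) >= self.capacity
```

Once `full()` returns True, the 400 points can be sent to the web application or passed to the model exactly as one 20 x 20 scan.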

#️⃣ To provide the user with a feature-rich interface, I connected an SSD1306 OLED display and four control buttons to Nano ESP32. I also added an RGB LED to inform the user of the device status while performing operations related to Nano ESP32.
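The RGB LEDs on the board are common anode, which inverts the usual logic: the shared pin is tied to the supply, so a color channel lights when its pin is driven LOW. The sketch below captures that inversion; the status names and colors in `STATUS_COLORS` are hypothetical, since the project's color scheme is not listed here. On Nano ESP32, each returned level would be written with `digitalWrite()` to D10, D11, and D12.

```python
# Hypothetical status colors as (R, G, B) on/off flags.
STATUS_COLORS = {
    "idle": (0, 0, 1),        # blue
    "collecting": (1, 1, 0),  # yellow (red + green)
    "success": (0, 1, 0),     # green
    "error": (1, 0, 0),       # red
}


def common_anode_levels(status):
    """Return the (R, G, B) pin levels that light the LED for a status.

    With a common anode, a channel turns ON when its pin is driven LOW (0)
    and OFF when driven HIGH (1), so each flag is inverted.
    """
    r, g, b = STATUS_COLORS[status]
    return (1 - r, 1 - g, 1 - b)
```

Keeping the inversion in one helper avoids scattering `!level` logic across the UI code.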

#️⃣ Since UNIHIKER (RK3308 Arm 64-bit) is an outstandingly compact single-board computer providing a USB Type-A connector for peripherals, I was able to connect a high-quality USB webcam (PK-910H) to capture and save image samples effortlessly.

#️⃣ As explained earlier, UNIHIKER comes with a micro:bit-compatible connector to access the GPIO interface of the microcontroller coprocessor (RISC-V). I utilized the Kitronik edge connector to access the GPIO pins and adjust the secondary RGB LED to inform the user of the device status while performing operations related to UNIHIKER. In this regard, I was able to embed UNIHIKER into the Squid PCB as the centerpiece to build a single-unit device.

#️⃣ Before embedding UNIHIKER, I tested the micro:bit-compatible GPIO interface by utilizing a soldered Kitronik breakout board with the edge connector.

#️⃣ After completing soldering and adjustments, I attached all remaining components to the Squid PCB via the female headers.

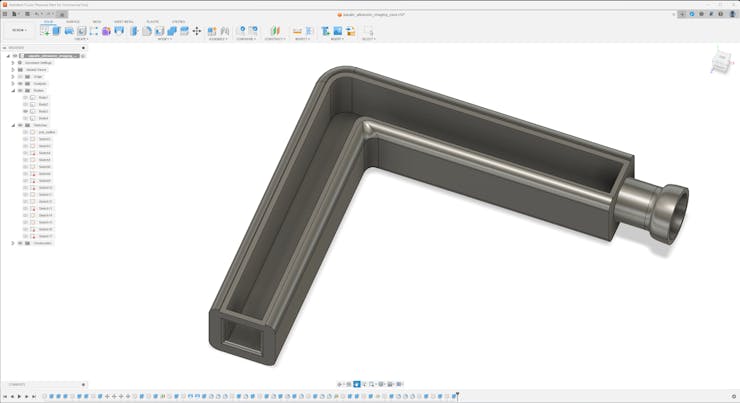

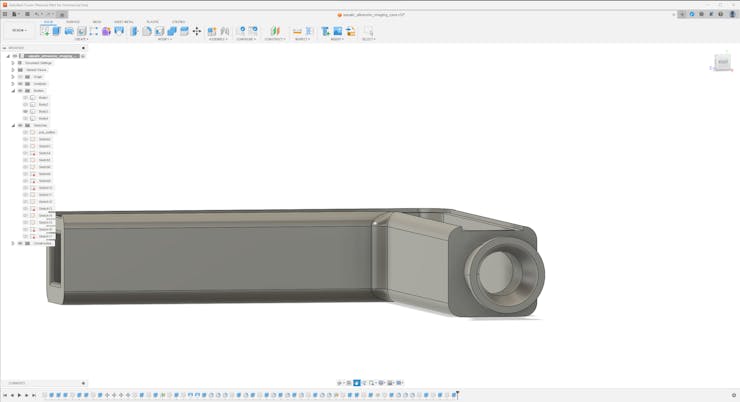

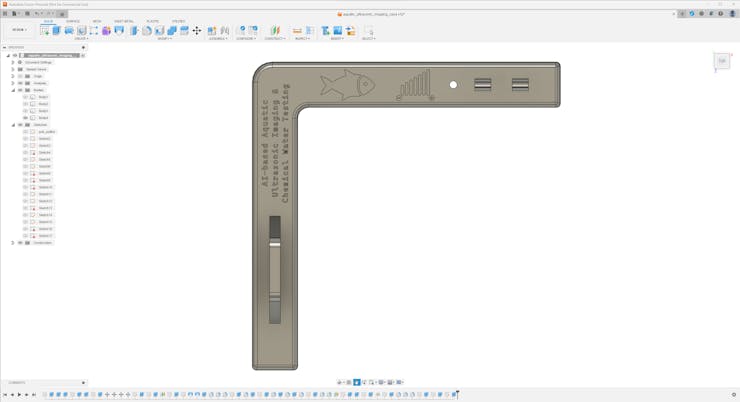

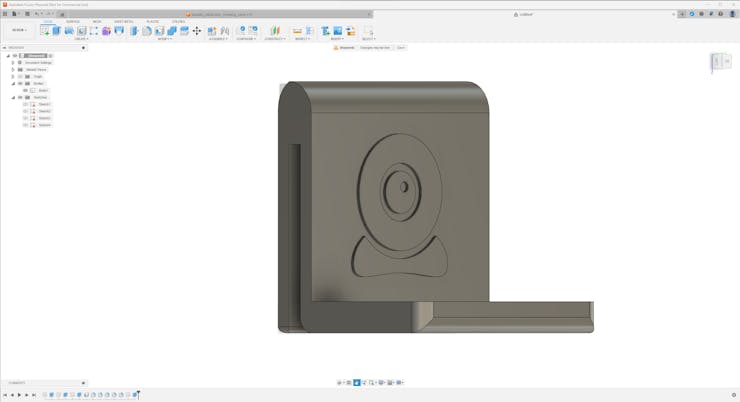

Step 2: Designing and printing the Aquatic-themed case

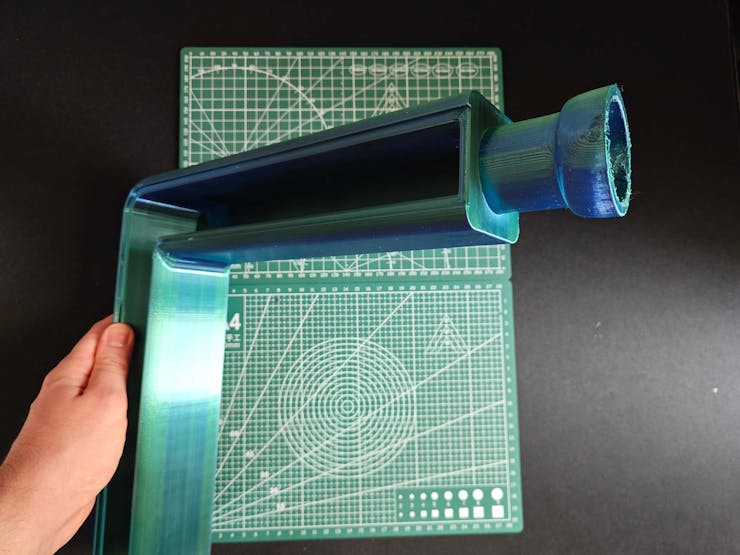

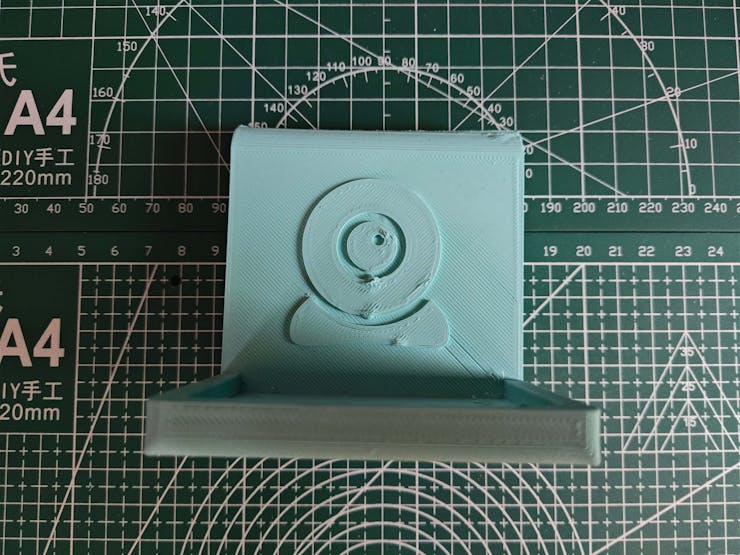

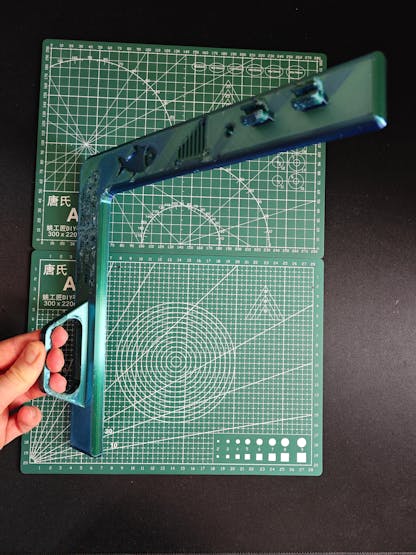

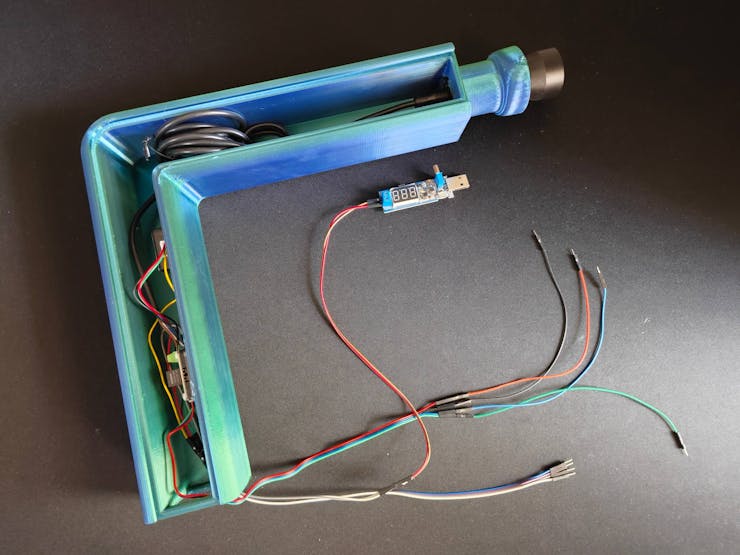

Since I focused on building a feature-rich and accessible AI-powered device that identifies noxious underwater air bubbles via aquatic ultrasonic scans and evaluates water pollution based on chemical water quality tests via object detection, informing the user via Telegram push notifications, I decided to design a robust and modular case that allows the user to hang the Squid PCB on the aquarium, place the high-quality USB webcam when standing idle, and position the ultrasonic sensor effortlessly while scanning the underwater substrate. To avoid overexposure to water and protect open wire connections from short circuits, I added a removable top cover mountable to the main case via snap-fit joints. The semicircular-shaped mounting brackets on the top cover let the user attach the DS18B20 waterproof temperature sensor effortlessly. Then, I designed a unique PCB holder encasing the Squid PCB outline and a hang-on aquarium connector mountable to the PCB holder via M3 screws and nuts. To place the high-quality USB webcam when standing idle, I also designed a hang-on camera holder attachable to the side of the aquarium. Furthermore, I decided to emboss aquatic life and sound-based graphic icons on the removable top cover and the camera symbol on the camera holder to highlight the capabilities of this AI-powered underwater air bubble detection device.

Since I needed to position the URM15 ultrasonic sensor accurately while scanning the underwater substrate and generating data buffers, I added a special cylindrical slot to the end point of the L-shaped main case in order to fasten the ultrasonic sensor seamlessly.

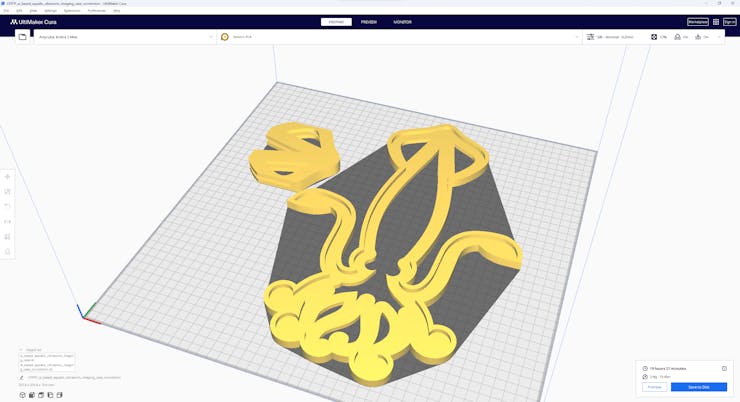

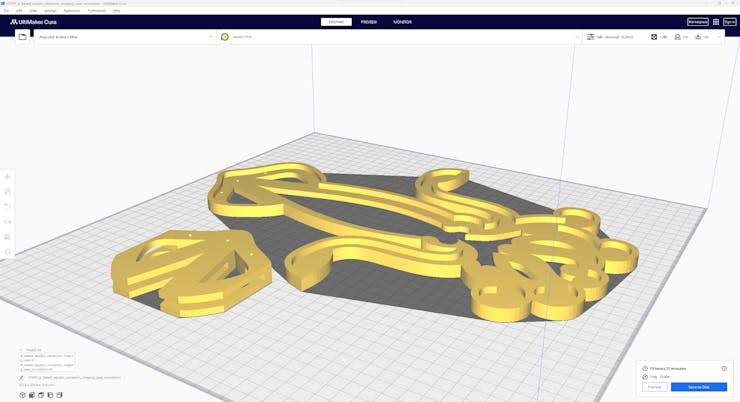

I designed the L-shaped main case, the removable top cover, the Squid PCB holder, the hang-on aquarium connector of the PCB holder, and the hang-on camera holder in Autodesk Fusion 360. You can download their STL files below.

Then, I sliced all 3D models (STL files) in Ultimaker Cura.

Since I wanted to create a mystical, watery look for the device case and apply a unique underwater theme representing the mesmerizing aquatic life, I utilized these PLA filaments:

- ePLA-Silk Magic Green-Blue (main case and top cover)

- ePLA-Matte Light Blue (PCB holder and hang-on connectors)

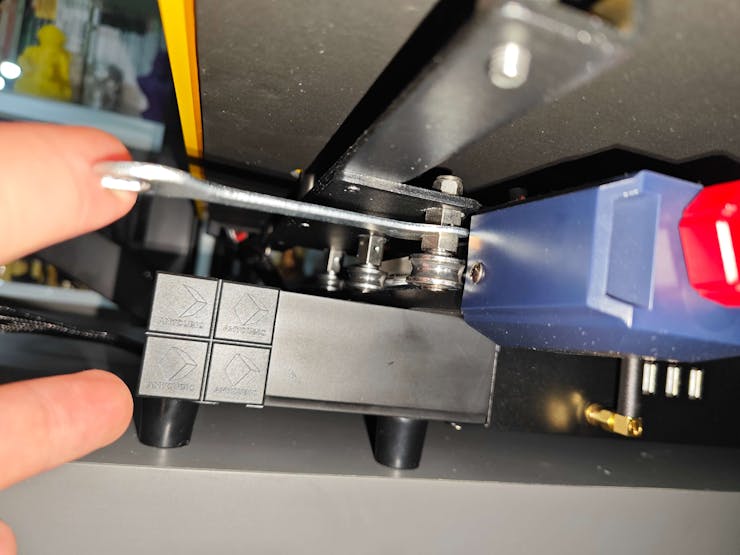

Finally, I printed all parts (models) with my brand-new Anycubic Kobra 2 Max 3D Printer.

Since Anycubic Kobra 2 Max is budget-friendly and specifically designed for high-speed printing with a gigantic build volume, I highly recommend it if you are a maker or hobbyist who needs to print large parts without splitting your design and losing structural integrity while working on multiple prototypes before finalizing a complex project.

Thanks to its upgraded direct extruder and vibration compensation features, Anycubic Kobra 2 Max provides a recommended print speed of 300 mm/s (up to 500 mm/s) and enhanced layer quality. Also, it provides a high-speed optimized cooling system, reducing visible layer lines and complementing the fast printing experience. Since the Z-axis has dual motors and dual support rods, it prevents vibration from affecting layer smoothness and integrity, even at higher print speeds.

Furthermore, Anycubic Kobra 2 Max provides a magnetic suction platform on the heated bed for the scratch-resistant PEI spring steel build plate, allowing the user to remove prints without any struggle, even for larger prints up to 420x420x500 mm. Most importantly, you can level the bed automatically via its user-friendly LeviQ 2.0 automatic leveling system and custom Z-axis compensation. Also, it has a smart filament runout sensor and supports Anycubic APP for remote control and management.

#️⃣ First of all, remove all fixing plates. Then, install the gantry frame and support rods.

#️⃣ Install the print head and adjust the X-axis belt tensioner. Then, install the touchscreen and the filament runout sensor.

#️⃣ Connect the stepper, switch, screen, and print head cables. Then, attach the filament tube and use cable ties to secure the cables properly.

#️⃣ If the print head or bed is shaking, adjust the hexagonal isolation columns underneath them.

#️⃣ To avoid software-related print failures, update the device firmware manually via USB or directly over Wi-Fi.

Before installing the official 2.3.6 firmware, I encountered some errors related to Cura configurations.

#️⃣ After the firmware upgrade, go to Settings ➡ More Settings ➡ Guide so as to initiate the LeviQ 2.0 automatic bed leveling system and configure vibration calibration.

#️⃣ Finally, fix the filament tube with the cable clips, install the filament holder, and insert the filament into the extruder.

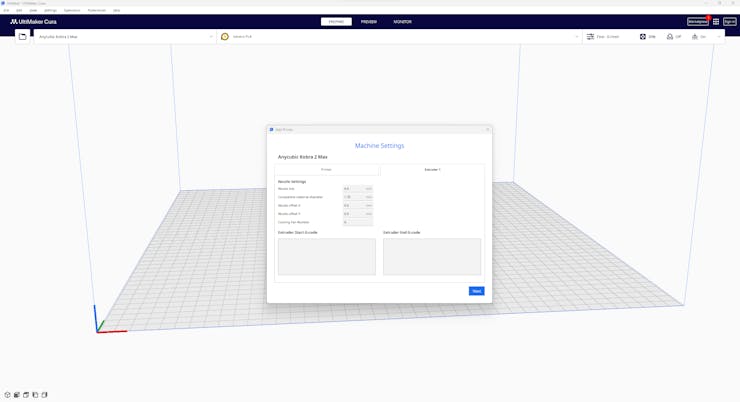

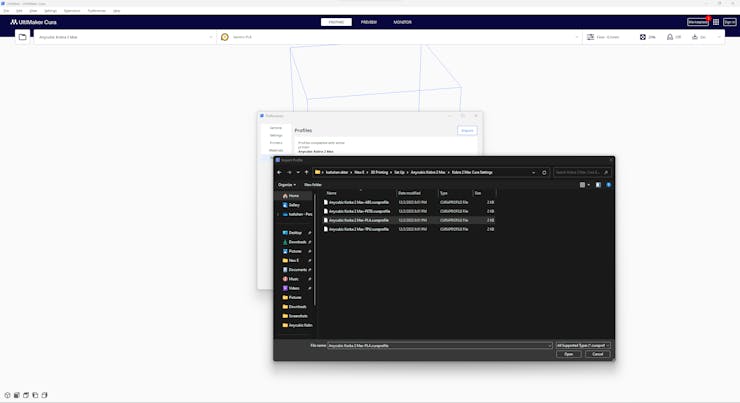

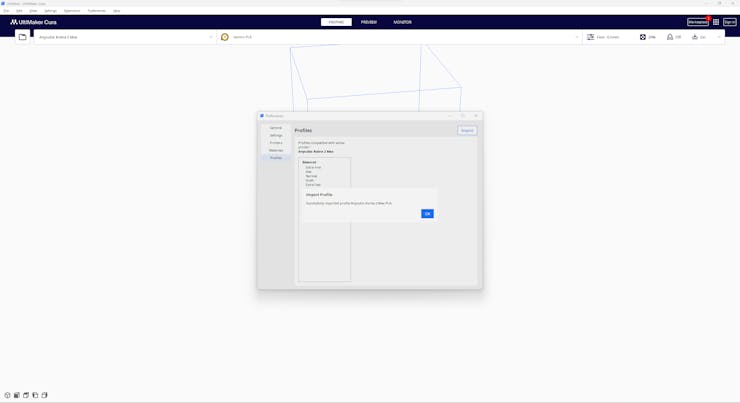

#️⃣ Since Anycubic Kobra 2 Max is not officially supported by Cura yet, we need to configure it manually. Fortunately, Anycubic provides detailed configuration steps for Anycubic Kobra 2 Max on Cura.

#️⃣ First of all, create a custom printer profile on Cura for Anycubic Kobra 2 Max with given printer settings.

#️⃣ Then, import the printer profile (configuration) file provided by Anycubic, depending on the filament type.

Step 2.1: Assembling the 3D-printed case

After printing all parts (models), I attached the URM15 ultrasonic sensor into its special cylindrical slot on the end point of the L-shaped main case and fastened the remaining components to their corresponding slots within the main case via a hot glue gun.

Then, I fastened the Squid PCB to its unique PCB holder via the hot glue gun, encasing the PCB outline. After fastening the Squid PCB, I attached the hang-on aquarium connector to the back of the PCB holder via M3 screws and nuts.

Since the removable top cover has special semicircular-shaped mounting brackets, the DS18B20 waterproof temperature sensor can externally be attached to the top cover.

Finally, I affixed the top cover to the main case via its provided snap-fit joints.

Since the main case contains all cables required for the connections between the Squid PCB and sensors, the device provides a single-unit structure and operates without wiring redundancy.

Step 3: Setting up an aquarium with prolific breeders to simulate harsh fish farm conditions

As explained earlier, before working on data collection procedures, I needed to find a natural or artificial environment demonstrating the ebb and flow of underwater substrate toxicity and water quality fluctuations due to overpopulation and decaying detritus. Unfortunately, I could not find a suitable natural environment near my hometown due to endangered aquatic life, unrelenting habitat destruction, and chemical waste disposal, mostly caused by human activities. Since I did not have access to an aquaculture facility where I could observe underwater substrate toxicity caused by commercial aquatic animal breeding or plant harvesting, I decided to set up an artificial aquatic environment simulating noxious air bubbles in the underwater substrate and potential water pollution risk. Instead of setting up a small artificial garden pond for the commercial breeding of profitable fish (mostly for food), I decided to utilize a medium-sized aquarium (10 gallons) to replicate fish farm (pisciculture) conditions.

Since this aquarium setting let me inspect the abrupt changes in the lower underwater substrate, I was able to conduct precise experiments to collect aquatic ultrasonic scan data for air bubble identification with ultrasonic imaging and capture chemical water quality test result (color-coded) images for water pollution detection.

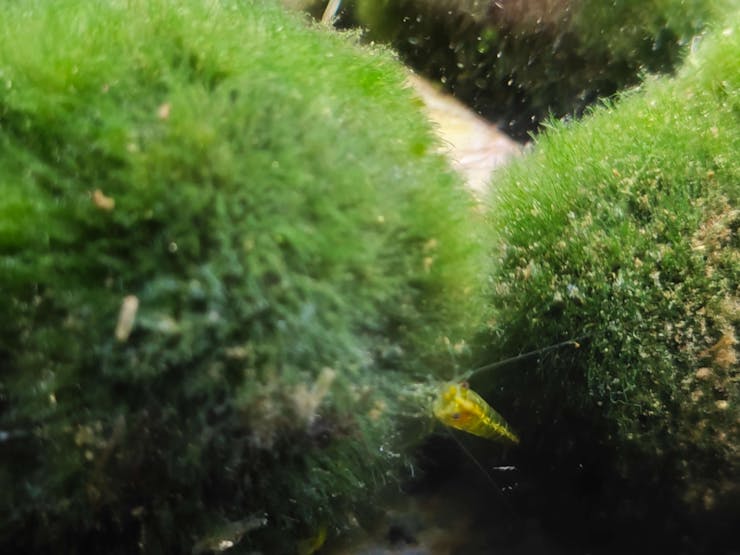

After a painstaking analysis of prolific aquatic life with which I could observe commercial fish farm conditions affecting the lower underwater substrate with noxious air bubbles and exacerbating the decreasing water quality due to decaying detritus, I decided to set up a planted freshwater aquarium for harmonious and proliferating species that can thrive in a small freshwater aquarium: livebearers (guppies), Neocaridina shrimp, dwarf (or least) crayfish (Cambarellus diminutus), etc.

To set up a self-sustaining aquarium manifesting harsh fish farm conditions, I added these aquatic species:

- 🐠 Mosaic Dumbo Ear Guppies

- 🐠 Snow White Guppies

- 🐠 Half Black Guppies

- 🐠 Green Snakeskin Cobra Guppies

- 🐠 Red Rose Guppies

- 🦐 Red Sakura Neocaridina Shrimps

- 🦐 Black Rose Neocaridina Shrimps

- 🦐 Vietnam Leopard Neocaridina Shrimps

- 🦐 Blue Angel Neocaridina Shrimps

- 🦐 Sakura Orange Neocaridina Shrimps

- 🦐 Red Rili Neocaridina Shrimps

- 🦐 Carbon Rili Neocaridina Shrimps

- 🦐 Green Jelly Neocaridina Shrimps

- 🦐 Yellow Fire Neon Neocaridina Shrimps

- 🦞 Cambarellus diminutus (Dwarf or Least Crayfish)

- 🐌 Yellow Mystery (Apple) Snails

- 🐌 Blue Mystery (Apple) Snails

- 🐌 Black Poso Rabbit Snails

- 🐌 Bumblebee Horn (Nerite) Snails

- 🐌 Ramshorn Snails (removed due to overpopulation)

After deciding on the fecund aquatic species for my aquarium, I allowed them to spawn and breed for nearly five months and observed the changes in the aquarium due to overbreeding and decaying detritus.

Once my submerged aquatic plants, floating plants (frogbit and duckweed), and emersed (root-submerged) pothos were established, they absorbed free ammonia, nitrates, and phosphates, outcompeted excess algae, and oxygenated the water. This kept the contaminants from the regular feeding schedule in check. As a result, I could focus on detecting underwater air bubbles and assessing water pollution caused by prolonged overbreeding and decaying detritus.

⚠️ Disclaimer: To simulate the abrupt water quality fluctuations of a commercial fish farm, I let my aquarium become overstocked with guppy fry and shrimplets. This led to excess waste, occasional Ramshorn snail blooms, and sporadic algae blooms. To maintain the intended experiment conditions for identifying noxious air bubbles lurking in the underwater substrate, I needed to do regular water changes, sometimes every four days. After completing my experiments, I safely transferred the surplus guppies and shrimp to my local fish store.

Step 3.1: Observing the changing conditions due to overbreeding and decaying detritus

After completing the device assembly, I hung the Squid PCB holder and the camera holder on the front of the aquarium to collect ultrasonic scan data buffers and capture chemical water quality test result images. This setup let the high-quality USB webcam rest on the hang-on camera holder when idle. It also let me position the URM15 ultrasonic sensor precisely while scanning the underwater substrate to produce accurate ultrasonic images.

Since I designed a single-unit device structure, I did not encounter any issues while conducting extended experiments.

To increase the bottom surface area and observe abundant noxious air bubbles while collecting ultrasonic scan data, I covered the bottom of the tank with numerous marimo moss balls. They provided plenty of gaps in the substrate where incipient air bubbles could accumulate.

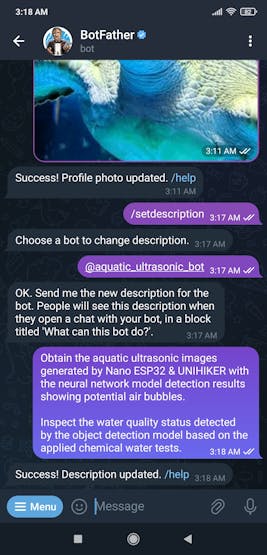

Step 4: Building a Telegram bot with BotFather to send push notifications

BotFather is an official Telegram bot that lets the user build and manage bots within the Telegram app without any coding or subscription required. I utilized BotFather to create a simple Telegram bot to inform the user via push notifications.

#️⃣ First of all, open BotFather on Telegram and enter /start to view the available command list and instructions.

#️⃣ Enter the /newbot command to create a new bot. Register the Telegram bot name when BotFather requests a name. It will be displayed in contact details and elsewhere.

Aquatic Ultrasonic Imaging and Water Testing

#️⃣ Then, register the bot username (its tag). Usernames are 5-32 characters long and case-insensitive. They may only include Latin characters, numbers, and underscores, and they must end in 'bot', e.g., 'tetris_bot' or 'TetrisBot'.

aquatic_ultrasonic_bot

#️⃣ After completing the steps above, BotFather generates an authorization token for the new Telegram bot. The authorization token is a string, such as 123456:ABC-DEF1234ghIkl-zyx57W2v1u123ew11, that is required to authorize the bot and send requests to the HTTP-based Telegram Bot API. Keep the generated token secure and store it safely.

#️⃣ To change the profile picture of the Telegram bot, enter the /setuserpic command and upload a picture.

#️⃣ Finally, to add a description to the Telegram bot to be displayed whenever the user initiates a new chat, enter the /setdescription command and register the text description.

UNIHIKER only sends push notifications through the HTTP-based Telegram Bot API and never needs to receive incoming updates. So, I did not need to set up a webhook, which would require a publicly reachable HTTPS (SSL) endpoint.

Thanks to the official Telegram Bot API, I only needed the chat id, in addition to the secured authorization token, to send push notifications.
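For reference, a push notification comes down to one call to the Bot API's sendMessage method. The bot token goes in the URL, and the chat id and text go in the body. The Python sketch below only builds that request with the standard library (urllib.request) and does not send it. The helper name build_send_message_request is hypothetical, and the token and chat id are placeholders rather than the project's actual code or values.

```python
import json
import urllib.request

API_BASE = "https://api.telegram.org"


def build_send_message_request(token: str, chat_id: int, text: str) -> urllib.request.Request:
    """Build (but do not send) a sendMessage request for the Telegram Bot API."""
    url = f"{API_BASE}/bot{token}/sendMessage"
    # JSON body with the two required sendMessage fields.
    payload = json.dumps({"chat_id": chat_id, "text": text}).encode("utf-8")
    return urllib.request.Request(
        url,
        data=payload,
        headers={"Content-Type": "application/json"},
        method="POST",
    )


if __name__ == "__main__":
    # Placeholder token (BotFather's example format) and chat id.
    req = build_send_message_request("123456:ABC-DEF1234ghIkl-zyx57W2v1u123ew11", 6465514194, "Air bubble detected!")
    print(req.get_method(), req.full_url)
    # Sending it would be: urllib.request.urlopen(req, timeout=10)
```

The Bot API also accepts the same fields as URL query parameters or form data. A JSON body avoids URL-encoding the message text.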

To fetch the required chat id, I utilized the getUpdates method (HTTP GET request), which shows all incoming bot updates by using long polling and returns an array of Update objects.

#️⃣ Make an HTTP GET request by utilizing the secured Telegram bot authorization token:

https://api.telegram.org/bot<_token_>/getUpdates

#️⃣ Then, initiate a new chat and send a message to the given Telegram bot. After refreshing the page, it should display the Update object list, including the chat id:

- message ➡ chat ➡ id ➡ 6465514194

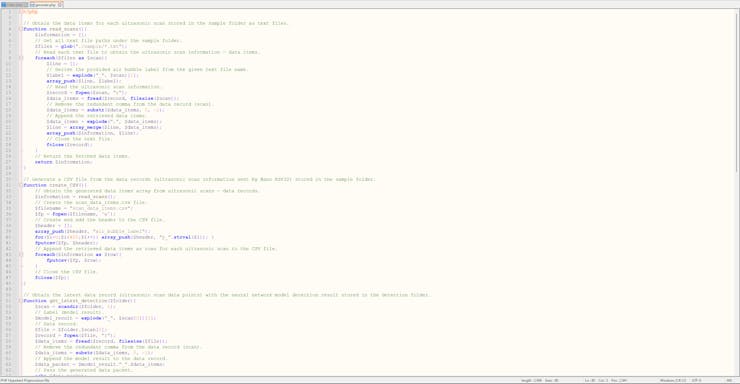

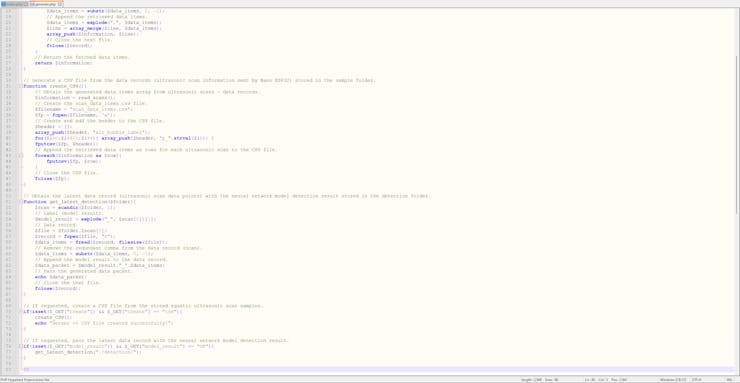
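You can also script this lookup instead of reading the chat id from the browser. This minimal sketch assumes you have already saved the getUpdates response body as a string. It walks result ➡ message ➡ chat ➡ id and collects each distinct chat id. The function name extract_chat_ids and the abbreviated sample response are illustrative only.

```python
import json
from typing import List


def extract_chat_ids(get_updates_body: str) -> List[int]:
    """Return the distinct chat ids found in a getUpdates response, in order of appearance."""
    response = json.loads(get_updates_body)
    if not response.get("ok"):
        raise ValueError("getUpdates did not return ok=true")
    chat_ids: List[int] = []
    for update in response.get("result", []):
        # Only updates that carry a new message have message -> chat -> id.
        message = update.get("message")
        if message is None:
            continue
        chat_id = message["chat"]["id"]
        if chat_id not in chat_ids:
            chat_ids.append(chat_id)
    return chat_ids


# Abbreviated response shape after sending "/start" to the bot.
sample_body = (
    '{"ok": true, "result": [{"update_id": 1, "message": {"message_id": 1,'
    ' "chat": {"id": 6465514194, "type": "private"}, "text": "/start"}}]}'
)
print(extract_chat_ids(sample_body))  # [6465514194]
```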

Step 5: Developing a web application to communicate with UNIHIKER and process requests from Nano ESP32

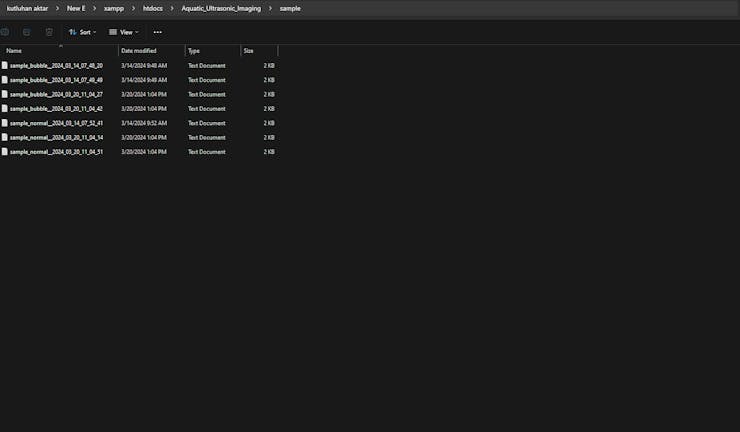

Since I needed to obtain the ultrasonic scan data buffers and the given air bubble class from Nano ESP32 so as to save the data records as text (TXT) files, I decided to develop a basic web application.

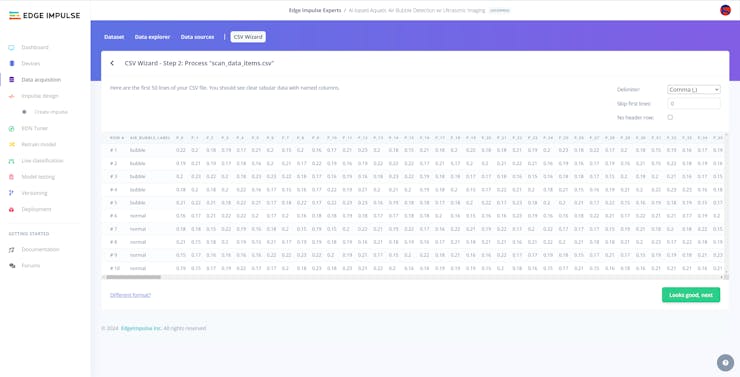

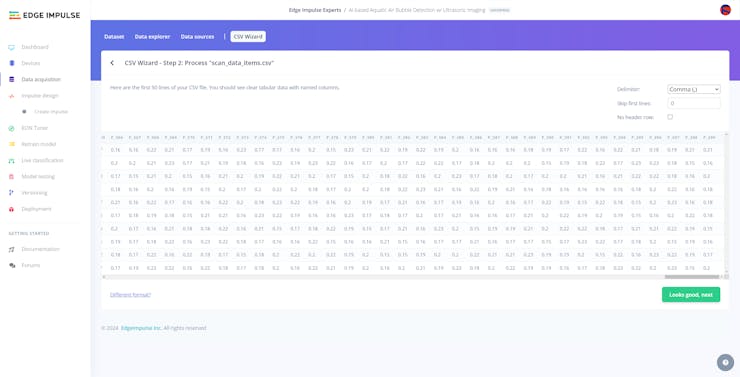

Also, the web application can generate a pre-formatted CSV file from the stored data records (text files) when requested via an HTTP GET request to construct a data set effortlessly.

In addition to the data collection features, similar to the ultrasonic scan samples, the web application can save model detection results transferred by Nano ESP32 via an HTTP POST request — buffer passed to the neural network model and the detected air bubble label — as text files in a separate folder.
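Detection records can also be requested back through the model_result=OK GET request handled by generate.php. In that case, the web application returns the label and the buffer as a single string: the detected class, an underscore, then the comma-separated points without the trailing comma. A client such as UNIHIKER could split that string as in this Python sketch. The function name parse_detection_packet is hypothetical.

```python
from typing import List, Tuple


def parse_detection_packet(packet: str) -> Tuple[str, List[float]]:
    """Split '<label>_<p0>,<p1>,...' into the detected label and its data points."""
    # Only the first underscore separates the label; the data part contains none.
    label, separator, data = packet.strip().partition("_")
    if not separator or not data:
        raise ValueError("expected '<label>_<comma-separated points>'")
    # Ignore empty fields, e.g. a leftover trailing comma.
    points = [float(value) for value in data.split(",") if value]
    return label, points


label, points = parse_detection_packet("bubble_0.25,0.31,-0.01")
print(label, len(points))  # bubble 3
```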

As shown below, the web application consists of two folders, two code files, and the generated CSV file:

- /detection

- /sample

- generate.php

- index.php

- scan_data_items.csv

📁 index.php

⭐ Obtain the current date and time.

⭐ Initialize the text file name for the received ultrasonic scan data buffer by adding the collection or prediction date.

$date = date("Y_m_d_H_i_s");

# Define the text file name of the received ultrasonic scan data.

$txt_file = "%s_%s__".$date;

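$save_folder = "";

⭐ If Nano ESP32 transfers the data type and the selected or detected air bubble class for the received ultrasonic scan buffer via GET query parameters, modify the text file name accordingly. Then, select the folder to save the generated text file (sample or detection). Only these two data types are accepted, and the class is reduced to letters and digits. This way, a malformed query cannot redirect the upload to another folder or break the underscore-separated file name.

if(isset($_GET["scan"]) && isset($_GET["type"]) && isset($_GET["class"])){

$type = in_array($_GET["type"], array("sample", "detection"), true) ? $_GET["type"] : "";

$class = preg_replace("/[^a-zA-Z0-9]/", "", $_GET["class"]);

$txt_file = sprintf($txt_file, $type, $class);

$save_folder = $type;

}

⭐ If Nano ESP32 transfers an ultrasonic scan data buffer via an HTTP POST request as a new sample or after running the neural network model, save the received buffer with the selected or detected air bubble class as a TXT file. The file goes to the folder associated with the given data type (sample or detection). Uploads without a valid data type are ignored.

if(!empty($_FILES["ultrasonic_scan"]['name']) && $save_folder != ""){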

// Text File:

$received_scan_properties = array(

"name" => $_FILES["ultrasonic_scan"]["name"],

"tmp_name" => $_FILES["ultrasonic_scan"]["tmp_name"],

"size" => $_FILES["ultrasonic_scan"]["size"],

"extension" => pathinfo($_FILES["ultrasonic_scan"]["name"], PATHINFO_EXTENSION)

);

// Check whether the uploaded file's extension is in the allowed file formats.

$allowed_formats = array('jpg', 'png', 'bmp', 'txt');

if(!in_array($received_scan_properties["extension"], $allowed_formats)){

echo 'FILE => File Format Not Allowed!';

}else{

// Check whether the uploaded file size exceeds the 5 MB data limit.

if($received_scan_properties["size"] > 5000000){

echo "FILE => File size cannot exceed 5MB!";

}else{

// Save the uploaded file (TXT).

move_uploaded_file($received_scan_properties["tmp_name"], "./".$save_folder."/".$txt_file.".".$received_scan_properties["extension"]);

echo "FILE => Saved Successfully!";

}

}

}

📁 generate.php

⭐ In the read_scans function:

⭐ Get all text file paths under the sample folder via the built-in glob function.

⭐ Read each text file to obtain the saved ultrasonic scan data buffers.

⭐ Derive the selected air bubble class of the data record from the given text file name.

⭐ Then, remove the redundant comma from the end of the data record.

⭐ After decoding 400 comma-separated data points from the given data record, append the retrieved data items with the selected class as a child array to the information array (parent) by utilizing built-in array_merge and array_push functions.

⭐ Finally, return the modified parent array consisting of the fetched data items.

function read_scans(){

$information = [];

// Get all text file paths under the sample folder.

$files = glob("./sample/*.txt");

// Read each text file to obtain the ultrasonic scan information — data items.

foreach($files as $scan){

$line = [];

// Derive the provided air bubble label from the given text file name.

$label = explode("_", $scan)[1];

array_push($line, $label);

// Read the ultrasonic scan information.

$record = fopen($scan, "r");

$data_items = fread($record, filesize($scan));

// Remove the redundant comma from the data record (scan).

$data_items = substr($data_items, 0, -1);

// Append the retrieved data items.

$data_items = explode(",", $data_items);

$line = array_merge($line, $data_items);

array_push($information, $line);

// Close the text file.

fclose($record);

}

// Return the fetched data items.

return $information;

}

⭐ In the create_CSV function:

⭐ Obtain the generated parent array, including data items and the assigned class for each stored ultrasonic scan data record — sample.

⭐ Create a new CSV file — scan_data_items.csv.

⭐ Define and add the header (class and data fields) to the created CSV file.

⭐ Append each child array (element) of the parent array as a new row to the CSV file.

⭐ Finally, close the generated CSV file.

function create_CSV(){

// Obtain the generated data items array from ultrasonic scans — data records.

$information = read_scans();

// Create the scan_data_items.csv file.

$filename = "scan_data_items.csv";

$fp = fopen($filename, 'w');

// Create and add the header to the CSV file.

$header = [];

array_push($header, "air_bubble_label");

for($i=0;$i<400;$i++){ array_push($header, "p_".strval($i)); }

fputcsv($fp, $header);

// Append the retrieved data items as rows for each ultrasonic scan to the CSV file.

foreach($information as $row){

fputcsv($fp, $row);

}

// Close the CSV file.

fclose($fp);

}

⭐ In the get_latest_detection function:

⭐ Via the built-in glob function, obtain the model detection results saved as text files under the detection folder (ultrasonic scan buffers passed to the neural network model). Then, select the latest one by file modification time. Sorting by file name would not work here, since the names start with the data type and the detected class rather than the date.

⭐ Derive the detected air bubble label from the given file name.

⭐ Remove the redundant comma from the end of the given buffer.

⭐ Add the detected label to the revised buffer.

⭐ Then, pass the generated data packet as a string.

function get_latest_detection($folder){

// Get all detection records and sort them from the newest to the oldest by modification time.

$scans = glob($folder."*.txt");

if(empty($scans)){ echo "No detection result found!"; return; }

usort($scans, function($a, $b){ return filemtime($b) - filemtime($a); });

$file = $scans[0];

// Label (model result).

$model_result = explode("_", basename($file))[1];

// Data record.

$record = fopen($file, "r");

$data_items = fread($record, filesize($file));

// Remove the redundant comma from the data record (scan).

$data_items = substr($data_items, 0, -1);

// Append the model result to the data record.

$data_packet = $model_result."_".$data_items;

// Pass the generated data packet.

echo $data_packet;

// Close the text file.

fclose($record);

}

⭐ If requested by the user via an HTTP GET request, create a pre-formatted CSV file from the stored aquatic ultrasonic scan samples (text files) — data records.

if(isset($_GET["create"]) && $_GET["create"] == "csv"){

create_CSV();

echo "Server => CSV file created successfully!";

}

⭐ If requested by the user via an HTTP GET request, obtain the latest model detection result — ultrasonic scan buffer passed to the neural network model and the detected air bubble class — and return the generated data packet as a string.

if(isset($_GET["model_result"]) && $_GET["model_result"] == "OK"){

get_latest_detection("./detection/");

}
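To check the data set format without the web server, you can mirror the logic of read_scans and create_CSV in a few lines of Python. This is a sketch, not the project's code. It reads the label from the file name (type_class__date), strips the trailing comma, and writes the same air_bubble_label, p_0 ... p_399 header. Unlike the PHP version, it takes the label from the file's base name. It also skips records that do not contain exactly 400 points, so an interrupted upload cannot produce a ragged row.

```python
import csv
import glob
import os
from typing import List

SCAN_POINTS = 400  # 20 x 20 ultrasonic image


def read_scans(sample_folder: str) -> List[List[str]]:
    """Mirror of read_scans(): one row per sample file, label first."""
    rows = []
    for path in sorted(glob.glob(os.path.join(sample_folder, "*.txt"))):
        # File names follow '<type>_<class>__<date>.txt', so the class is the second field.
        label = os.path.basename(path).split("_")[1]
        with open(path, "r", encoding="utf-8") as record:
            data_items = record.read().strip().rstrip(",")  # drop the trailing comma
        values = data_items.split(",") if data_items else []
        if len(values) != SCAN_POINTS:
            continue  # skip incomplete scans instead of writing ragged rows
        rows.append([label] + values)
    return rows


def create_csv(sample_folder: str, csv_path: str) -> int:
    """Mirror of create_CSV(): header plus one row per scan; returns the row count."""
    rows = read_scans(sample_folder)
    header = ["air_bubble_label"] + [f"p_{i}" for i in range(SCAN_POINTS)]
    with open(csv_path, "w", newline="", encoding="utf-8") as fp:
        writer = csv.writer(fp)
        writer.writerow(header)
        writer.writerows(rows)
    return len(rows)
```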

Step 5.1: Setting and running the web application on LattePanda 3 Delta

I wanted to build a feasible and accessible AIoT underwater air bubble and water pollution detection device that does not depend on cloud or hosting services. So, I decided to host my web application on LattePanda 3 Delta 864. Therefore, I needed to set up a local web server running Apache and PHP.

LattePanda 3 Delta is a pocket-sized hackable computer powered by the Intel 11th-generation Celeron N5105 processor.

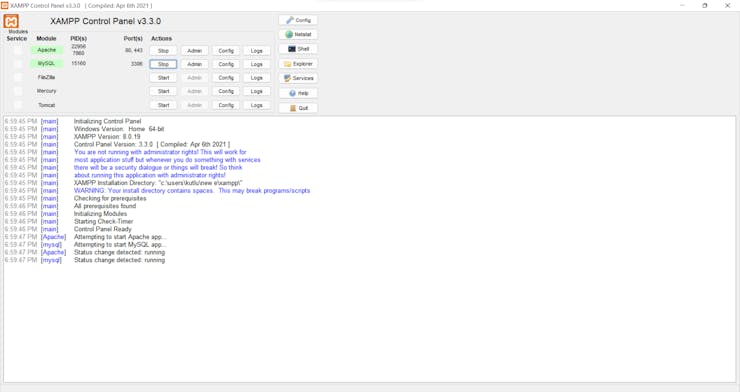

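LattePanda 3 Delta runs a full desktop operating system (Windows or Linux), so it can run XAMPP, which bundles Apache, MariaDB, and PHP. That makes creating a local server on LattePanda 3 Delta effortless. This web application only needs Apache and PHP, since it stores data records as text files rather than in a database.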

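#️⃣ Install and set up the XAMPP development environment. Then, copy the web application folder (Aquatic_Ultrasonic_Imaging) into XAMPP's htdocs directory so that Apache serves it at /Aquatic_Ultrasonic_Imaging/, the path requested by Nano ESP32.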

Step 6: Setting up Nano ESP32 on Arduino IDE

Since Nano ESP32 has the well-known Nano form and provides Wi-Fi connectivity via the u-blox® NORA-W106 (ESP32-S3) module, I decided to employ Nano ESP32 to transfer data packets directly to the web application, including the produced aquatic ultrasonic scan buffer, the selected air bubble class for samples, and the detected air bubble label after running the neural network model.

Nevertheless, before proceeding with the following steps, I needed to set up Nano ESP32 on the Arduino IDE, install the required libraries, and configure some default settings.

#️⃣ To install the required core, navigate to Tools ➡ Board ➡ Boards Manager and search for Arduino ESP32 Boards.

#️⃣ After installing the core, navigate to Tools ➡ Board ➡ ESP32 Arduino (Arduino) and select Arduino Nano ESP32.

#️⃣ Download and inspect the required libraries for the URM15 (75 kHz) ultrasonic sensor, the 6-axis accelerometer, the DS18B20 waterproof temperature sensor, and the SSD1306 OLED display:

- DFRobot_RTU

- DFRobot_WT61PC

- OneWire

- DallasTemperature

- Adafruit_SSD1306

- Adafruit-GFX-Library

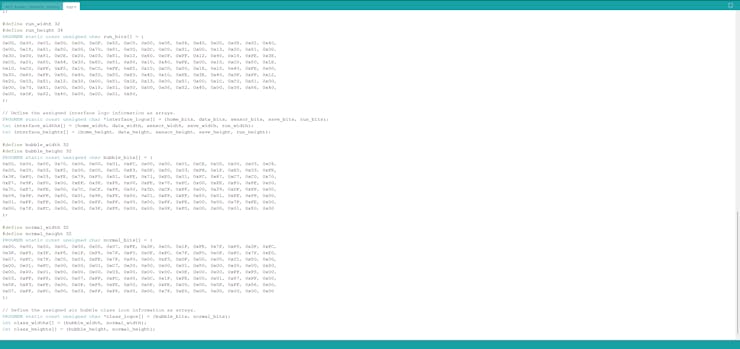

Step 6.1: Displaying images on the SSD1306 OLED screen

#️⃣ To be able to display images (icons) on the SSD1306 OLED screen, first convert image files (PNG or JPG) to monochromatic bitmaps. Then, convert the generated bitmaps to compatible C data arrays. I decided to utilize LCD Assistant to create C data arrays.

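#️⃣ After installing LCD Assistant, upload a monochromatic bitmap and select the byte orientation. Since the icons are drawn with the Adafruit_GFX drawBitmap function, select Horizontal, which matches the row-by-row byte layout that function reads.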

#️⃣ Then, save all the converted C data arrays to the logo.h file.
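LCD Assistant does this conversion for you. To show what the resulting bytes mean, the Python sketch below packs a monochrome icon into the horizontal layout that Adafruit_GFX's drawBitmap reads. Each row is padded to whole bytes, and the leftmost pixel of every byte is its most significant bit. The sketch takes pixels as rows of 0/1 values instead of decoding an image file. The emitted declaration (#define sizes plus a PROGMEM array named <name>_bits) is an assumed format, not a copy of the project's logo.h.

```python
from typing import List, Sequence


def pack_rows_msb_first(pixels: Sequence[Sequence[int]]) -> List[int]:
    """Pack 0/1 pixel rows into bytes the way Adafruit_GFX drawBitmap reads them."""
    packed = []
    for row in pixels:
        byte_width = (len(row) + 7) // 8  # each row is padded to a whole byte
        for b in range(byte_width):
            value = 0
            for bit in range(8):
                x = b * 8 + bit
                if x < len(row) and row[x]:
                    value |= 0x80 >> bit  # leftmost pixel -> most significant bit
            packed.append(value)
    return packed


def to_c_array(name: str, width: int, height: int, data: Sequence[int]) -> str:
    """Emit a logo.h-style declaration (assumed format: #define sizes + PROGMEM bytes)."""
    body = ", ".join(f"0x{v:02X}" for v in data)
    return (
        f"#define {name}_width {width}\n"
        f"#define {name}_height {height}\n"
        f"static const unsigned char PROGMEM {name}_bits[] = {{ {body} }};\n"
    )


if __name__ == "__main__":
    # Hypothetical 9 x 2 icon: a dot at both ends of the first row, a bar on the second.
    icon = [[1, 0, 0, 0, 0, 0, 0, 0, 1],
            [0, 1, 1, 1, 1, 1, 1, 1, 1]]
    print(to_c_array("demo", 9, 2, pack_rows_msb_first(icon)))
```

A 9-pixel-wide row needs two bytes, and the seven unused bits of the second byte stay zero. That is why the icon width must be passed to drawBitmap separately from the array.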

⭐ In the logo.h file, I defined multi-dimensional arrays to group the assigned logos (interface and class) and their sizes — width and height.

// Define the assigned interface logo information as arrays.

PROGMEM static const unsigned char *interface_logos[] = {home_bits, data_bits, sensor_bits, save_bits, run_bits};

int interface_widths[] = {home_width, data_width, sensor_width, save_width, run_width};

int interface_heights[] = {home_height, data_height, sensor_height, save_height, run_height};

// Define the assigned air bubble class icon information as arrays.

PROGMEM static const unsigned char *class_logos[] = {bubble_bits, normal_bits};

int class_widths[] = {bubble_width, normal_width};

int class_heights[] = {bubble_height, normal_height};

...

display.drawBitmap(SCREEN_WIDTH-l_w, SCREEN_HEIGHT-l_h, interface_logos[menu_option], l_w, l_h, SSD1306_WHITE);

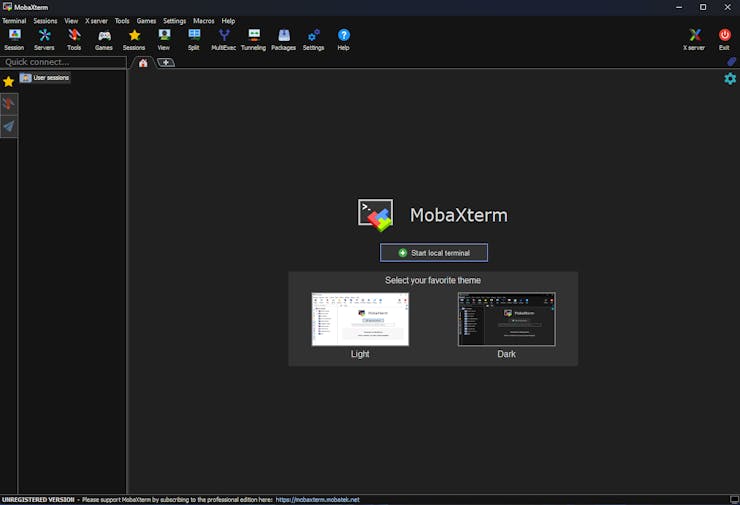

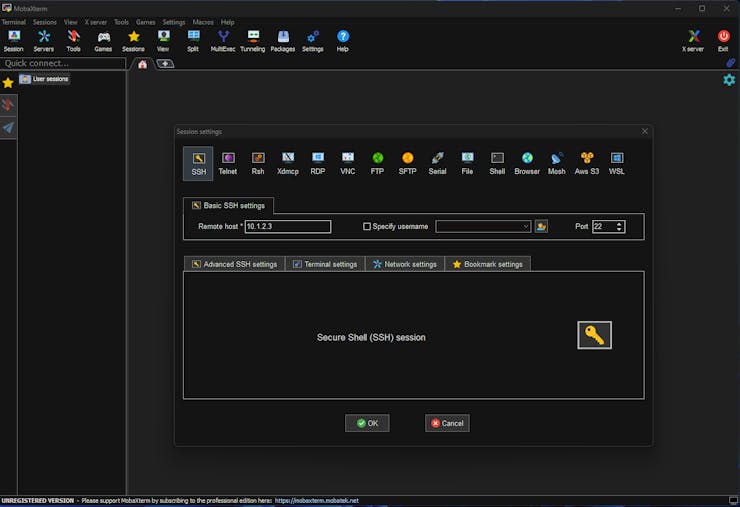

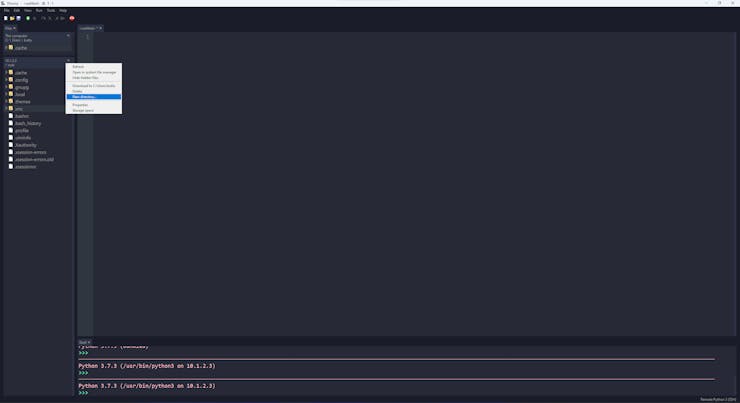

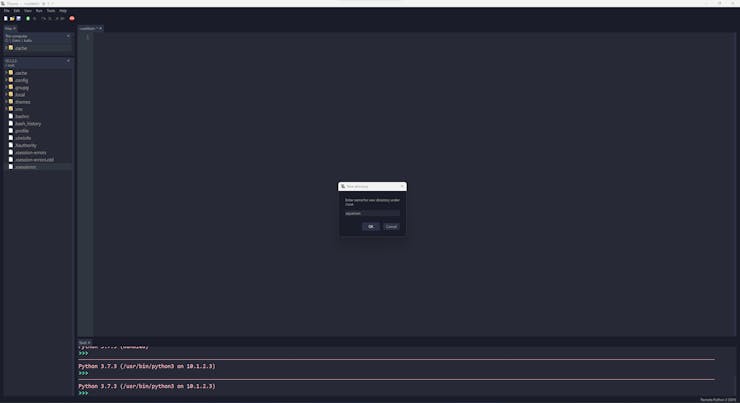

Step 7: Setting up UNIHIKER on MobaXterm & Thonny

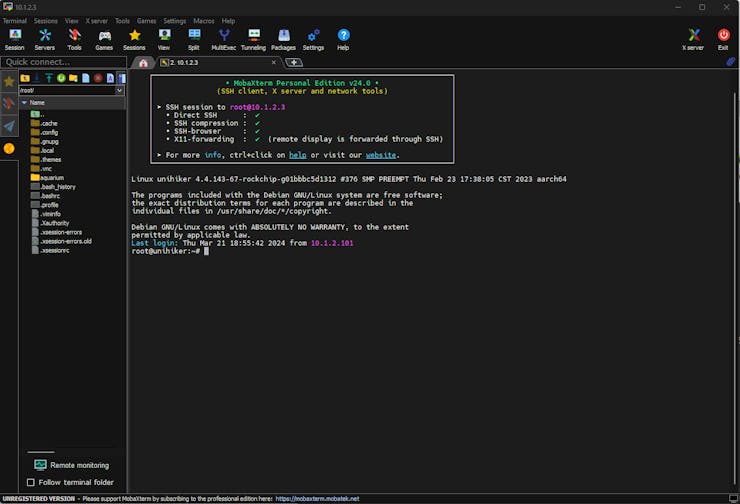

Although UNIHIKER is an outstandingly compact single-board computer providing a built-in touchscreen, integrated Python modules, and a microcontroller coprocessor, I still needed to install the required Python modules and set up the necessary software before proceeding with the following steps.

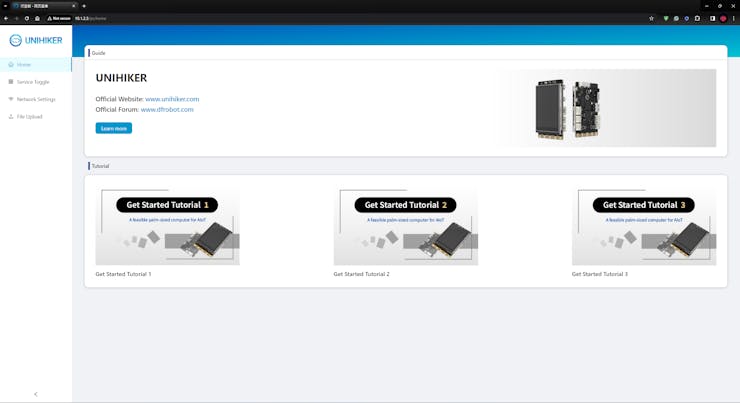

#️⃣ First of all, if you are a novice in programming with UNIHIKER, please visit the official tutorials and guidelines.

#️⃣ After connecting UNIHIKER to the computer via a USB Type-C cable, go to the home page of UNIHIKER's local web server via the default browser: 10.1.2.3.

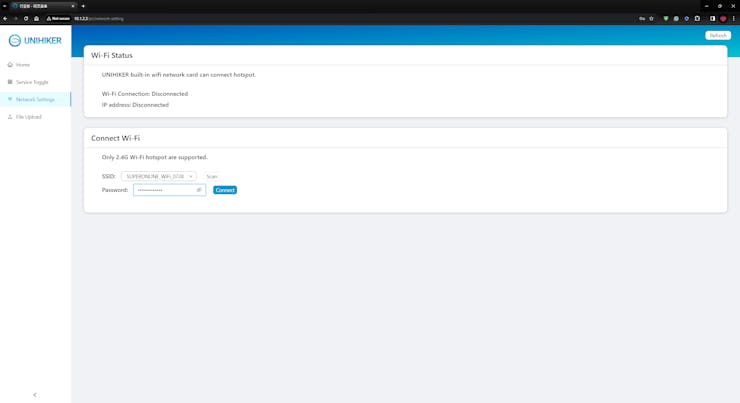

#️⃣ Then, navigate to Network Settings and establish the Wi-Fi connection.

#️⃣ Since it is necessary to utilize the terminal to install Python modules, but UNIHIKER does not allow the user to access the terminal via its onboard interface, I needed to connect to UNIHIKER remotely via SSH.

#️⃣ To set up the SSH connection to access the terminal, I decided to utilize MobaXterm due to its advanced terminal configuration options.

#️⃣ After installing MobaXterm, connect to the UNIHIKER remote host with the default root user credentials:

- Server (Host): 10.1.2.3

- Account (Username): root

- Password: dfrobot

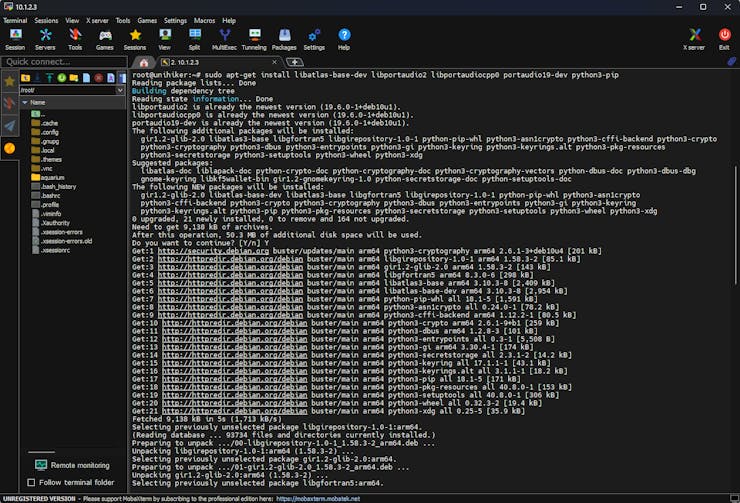

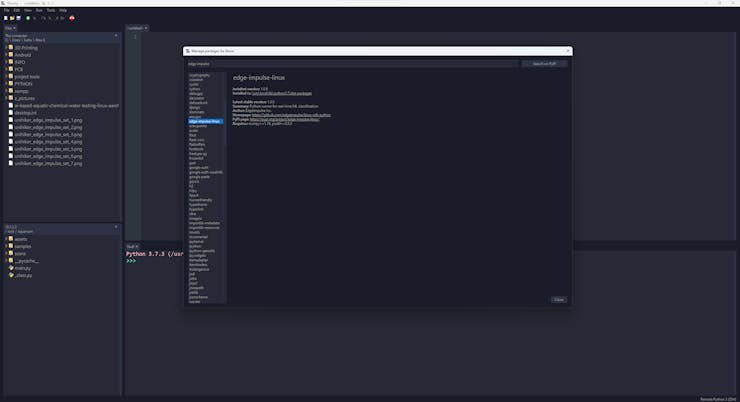

#️⃣ After establishing the SSH connection via MobaXterm, install the dependencies of the Edge Impulse Linux Python SDK from the terminal. The SDK is required to run Edge Impulse object detection models on UNIHIKER.

sudo apt-get install libatlas-base-dev libportaudio2 libportaudiocpp0 portaudio19-dev python3-pip

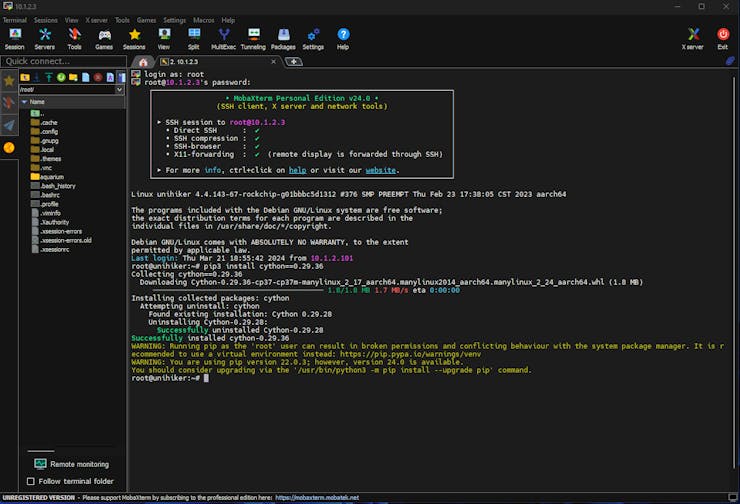

#️⃣ To be able to utilize the Linux Python SDK, the Cython module is required on UNIHIKER. However, the latest Cython version is not compatible with the SDK. According to my experiments, Cython 0.29.36 works without a problem.

pip3 install cython==0.29.36

#️⃣ After installing the compatible Cython version, continue installing the Linux Python SDK.

pip3 install pyaudio edge_impulse_linux

#️⃣ Since I employed the integrated Python modules to control the GPIO pins of the microcontroller coprocessor, design a feature-rich user interface (GUI — Tkinter application), and display the interactive user interface on the built-in touchscreen, I did not need to install any additional Python libraries via MobaXterm.

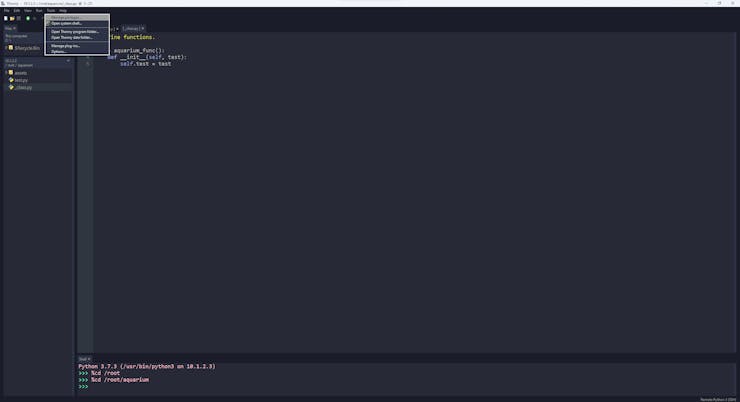

#️⃣ Although MobaXterm lets the user access the root folder and run Python scripts, I decided to utilize Thonny Python IDE to program my Python scripts due to its simple debugger.

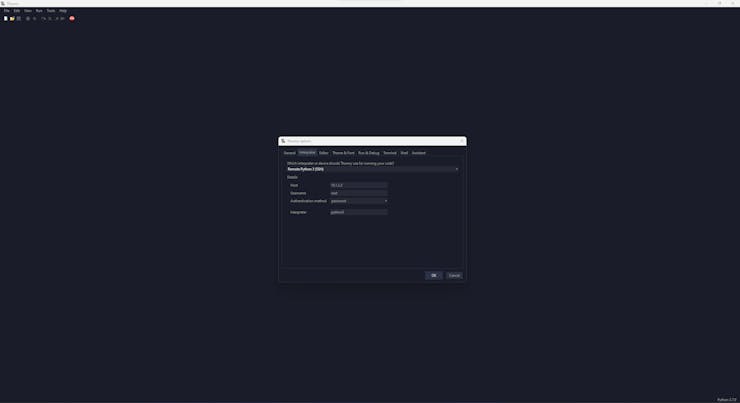

#️⃣ After installing the required modules via MobaXterm, open Thonny and connect to UNIHIKER by selecting the built-in Remote Python 3 (SSH) interpreter.

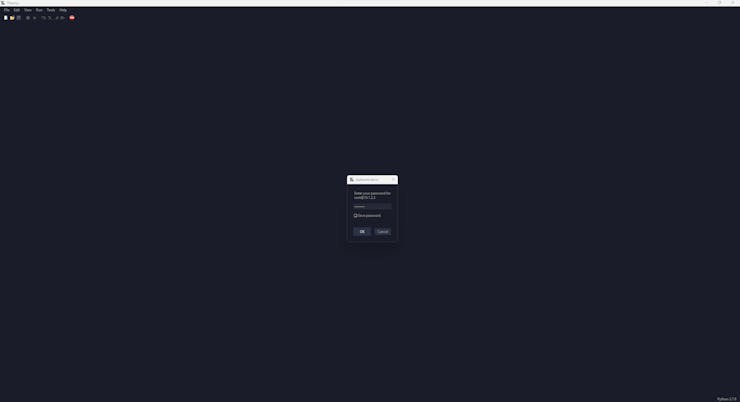

#️⃣ After changing the interpreter, use the default root user credentials to initiate the SSH connection on Thonny.

#️⃣ After establishing the SSH connection, Thonny lets the user access the root folder, create directories, upload files (assets), and run Python scripts.

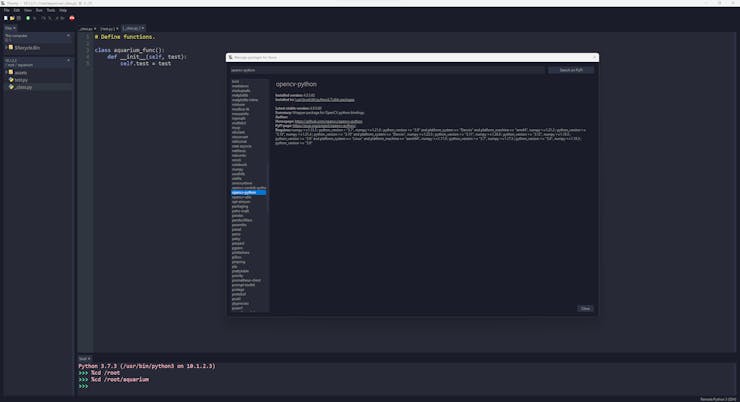

#️⃣ Thonny does not let the user install or update Python modules on UNIHIKER, but you can inspect the available (pre-installed) libraries via Tools ➡ Manage packages...

#️⃣ To run code files manually without establishing the SSH connection, press the onboard Home button on UNIHIKER, go to Run Programs, and select a code file.

Step 8: Collecting information produced by the ultrasonic sensor and the accelerometer

After setting up Nano ESP32 on the Arduino IDE, I programmed it to initiate an aquatic ultrasonic scan, generate an ultrasonic scan data buffer according to the movements detected by the accelerometer, and transfer the generated buffer to the web application via an HTTP POST request.
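The request that make_a_post_request writes by hand is an ordinary multipart/form-data upload. The Python sketch below rebuilds the same body: boundary UltrasonicScan, field ultrasonic_scan, file name new_scan.txt, and values with two decimals plus a trailing comma, approximating Arduino's default float printing. It then computes Content-Length from the actual bytes, which is what the header must contain. It only builds bytes and sends nothing. The helper names are hypothetical, and "Connection: close" is a choice made here (the firmware sends Keep-Alive).

```python
from typing import Sequence

BOUNDARY = "UltrasonicScan"


def build_scan_body(points: Sequence[float]) -> bytes:
    """Build the multipart/form-data body that carries one scan as new_scan.txt."""
    head = (
        f"--{BOUNDARY}\r\n"
        'Content-Disposition: form-data; name="ultrasonic_scan"; filename="new_scan.txt"\r\n'
        "Content-Type: text/plain\r\n\r\n"
    )
    # Two decimals plus a trailing comma after every point, like the Arduino loop.
    data = "".join(f"{p:.2f}," for p in points)
    tail = f"\r\n--{BOUNDARY}--\r\n"
    return (head + data + tail).encode("ascii")


def build_request_head(host: str, path: str, body: bytes) -> str:
    """Request line and headers; Content-Length is the real body size in bytes."""
    return (
        f"POST {path} HTTP/1.1\r\n"
        f"Host: {host}\r\n"
        f"Content-Length: {len(body)}\r\n"
        "Connection: close\r\n"
        f"Content-Type: multipart/form-data; boundary={BOUNDARY}\r\n"
        "\r\n"
    )


if __name__ == "__main__":
    body = build_scan_body([0.25] * 400)
    print(build_request_head("192.168.1.22", "/Aquatic_Ultrasonic_Imaging/?scan=OK&type=sample&class=normal", body))
```

This is also why the length should be measured rather than estimated from sizeof(float). A value such as 0.25 prints as four characters plus a comma, which happens to equal sizeof(float) plus one byte per point. An out-of-range reading stored as -0.01, however, prints five characters.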

Since I wanted to provide a feature-rich user interface allowing the user to assign labels while collecting data samples, I decided to connect the SSD1306 OLED display and four control buttons to Nano ESP32. Via the user interface, I was able to assign air bubble classes empirically and send the generated ultrasonic scan buffer with the selected air bubble class (label) directly to the web application. As mentioned earlier, Nano ESP32 does not provide an onboard storage option. Thus, by transferring samples to the web application, I obviated the need for connecting external storage to Nano ESP32.

Since Nano ESP32 features three hardware serial (UART) ports, excluding the USB serial port, I was able to connect multiple sensors requiring serial communication without a data transmission conflict.

As explained in the previous steps, the web application sorts the transferred data packet to save ultrasonic scan samples as text files named according to the assigned classes.
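The naming scheme that index.php applies ('%s_%s__' plus date('Y_m_d_H_i_s')) and the label lookup in generate.php can be mirrored in Python as below. The helper names are hypothetical. Because the timestamp fields are zero-padded, comparing only the part after the double underscore orders records chronologically. Sorting the full names instead would rank every detection_normal__ record above every detection_bubble__ record, regardless of when it was saved.

```python
import os
from datetime import datetime
from typing import List


def record_name(data_type: str, label: str, when: datetime) -> str:
    """Mirror of index.php: '<type>_<class>__Y_m_d_H_i_s' (extension added on save)."""
    return f"{data_type}_{label}__{when.strftime('%Y_%m_%d_%H_%M_%S')}"


def label_of(file_name: str) -> str:
    """The class is the second underscore-separated field, as in explode('_', ...)[1]."""
    return os.path.basename(file_name).split("_")[1]


def latest_by_timestamp(file_names: List[str]) -> str:
    """Pick the newest record by comparing only the zero-padded date part."""
    def date_part(name: str) -> str:
        stem = os.path.splitext(os.path.basename(name))[0]
        return stem.split("__", 1)[1]
    return max(file_names, key=date_part)


name = record_name("sample", "bubble", datetime(2024, 3, 5, 14, 7, 9))
print(name, label_of(name + ".txt"))  # sample_bubble__2024_03_05_14_07_09 bubble
```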

This AI-powered underwater air bubble detection device comprises two separate development boards, Nano ESP32 and UNIHIKER, which share the work of data collection and running advanced AI models. Thus, each code snippet below shows one aspect of a larger code file. Please refer to the complete code files to inspect all interconnected functions in detail.

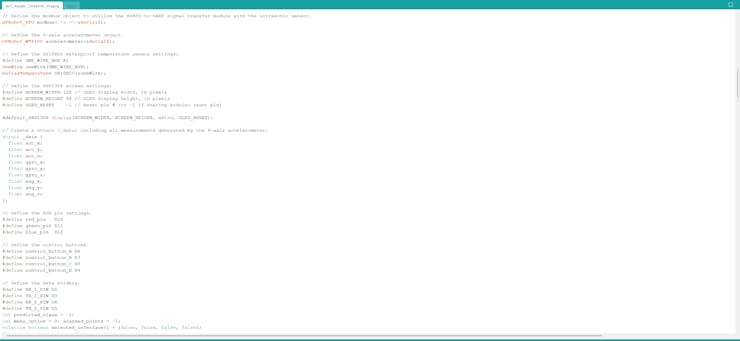

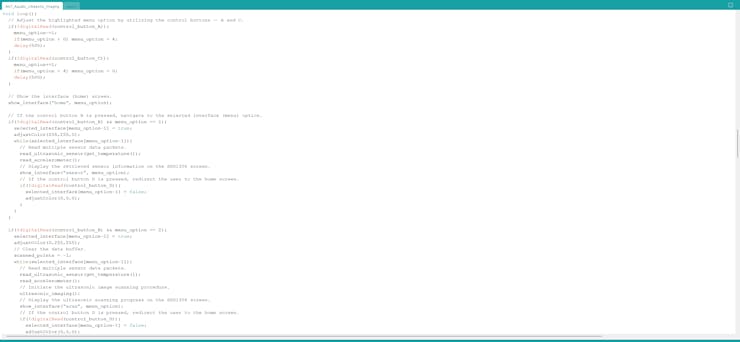

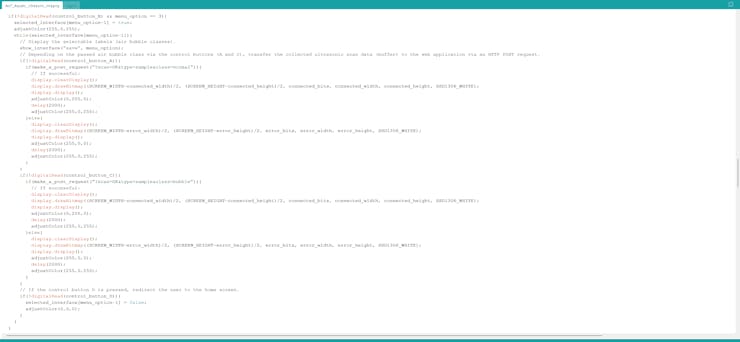

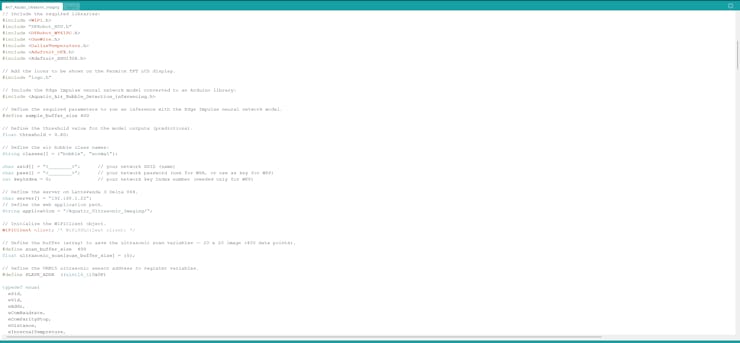

📁 AIoT_Aquatic_Ultrasonic_Imaging.ino

⭐ Include the required libraries.

#include <WiFi.h>

#include "DFRobot_RTU.h"

#include <DFRobot_WT61PC.h>

#include <OneWire.h>

#include <DallasTemperature.h>

#include <Adafruit_GFX.h>

#include <Adafruit_SSD1306.h>

⭐ Add the interface icons and the assigned class logos (converted C arrays) to be shown on the SSD1306 OLED display — logo.h.

#include "logo.h"

⭐ Define the required server configurations for the web application hosted on LattePanda 3 Delta 864.

⭐ Then, initialize the WiFiClient object.

char server[] = "192.168.1.22";

// Define the web application path.

String application = "/Aquatic_Ultrasonic_Imaging/";

// Initialize the WiFiClient object.

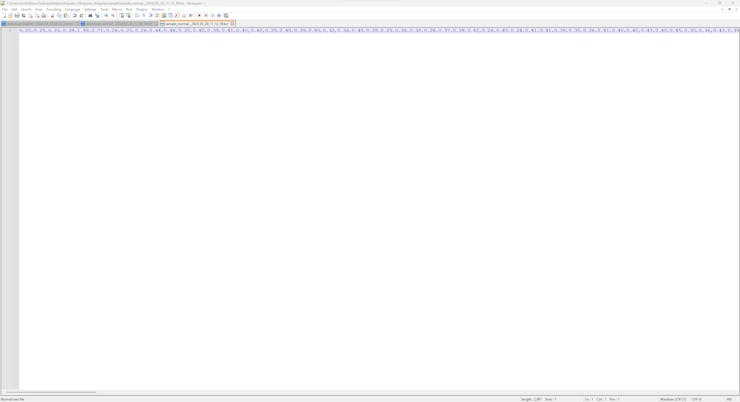

WiFiClient client; /* WiFiSSLClient client; */⭐ Define the buffer (array) and allocate the buffer size to save the ultrasonic scan data items — a 20 x 20 image (400 data points).

#define scan_buffer_size 400

float ultrasonic_scan[scan_buffer_size] = {0};

⭐ Define the required configuration parameters and the address to register settings for the URM15 ultrasonic sensor.

#define SLAVE_ADDR ((uint16_t)0x0F)

typedef enum{

ePid,

eVid,

eAddr,

eComBaudrate,

eComParityStop,

eDistance,

eInternalTempreture,

eExternTempreture,

eControl

}eRegIndex_t;

⭐ Define the modbus object and assign the hardware serial port (Serial1) to obtain the information generated by the ultrasonic sensor via the RS485-to-UART signal adapter module.

DFRobot_RTU modbus(/*s =*/&Serial1);

⭐ Define the accelerometer object and assign the hardware serial port (Serial2) to obtain the information generated by the 6-axis accelerometer via serial communication.

DFRobot_WT61PC accelerometer(&Serial2);

⭐ Define the required configuration settings for the DS18B20 waterproof temperature sensor.

#define ONE_WIRE_BUS A1

OneWire oneWire(ONE_WIRE_BUS);

DallasTemperature DS18B20(&oneWire);

⭐ Configure the SSD1306 OLED display.

#define SCREEN_WIDTH 128 // OLED display width, in pixels

#define SCREEN_HEIGHT 64 // OLED display height, in pixels

#define OLED_RESET -1 // Reset pin # (or -1 if sharing Arduino reset pin)

Adafruit_SSD1306 display(SCREEN_WIDTH, SCREEN_HEIGHT, &Wire, OLED_RESET);

⭐ Create a struct (_data) to list and access the information generated by the 6-axis accelerometer easily.

struct _data {

float acc_x;

float acc_y;

float acc_z;

float gyro_x;

float gyro_y;

float gyro_z;

float ang_x;

float ang_y;

float ang_z;

};

⭐ Initialize the first hardware serial port (Serial1) to communicate with the URM15 ultrasonic sensor via the RS485-to-UART signal adapter module.

⭐ Initialize the second hardware serial port (Serial2) to communicate with the 6-axis accelerometer.

Serial1.begin(19200, SERIAL_8N1, RX_1_PIN, TX_1_PIN);

Serial2.begin(9600, SERIAL_8N1, RX_2_PIN, TX_2_PIN);

⭐ Configure the URM15 ultrasonic sensor by overwriting the low byte (LSB) of its control register. The value 0b00000001 selects the external temperature source (bit0 = 1), enables the temperature compensation function (bit1 = 0), and keeps the automatic detection mode (bit2 = 0), per the bit map below.

/*

bit0:

0 - select onboard temperature

1 - select external temperature

bit1:

0 - enable temperature compensation function

1 - disable temperature compensation function

bit2:

0 - activate auto detection

1 - activate passive detection

bit3:

1 - read distance every 65 ms (in passive detection mode)

*/

modbus.writeHoldingRegister(/*id =*/SLAVE_ADDR, /*reg =*/ eControl, /*val =*/0b00000001);

⭐ Initiate the 6-axis accelerometer and configure its data output frequency.

accelerometer.modifyFrequency(FREQUENCY_200HZ); /* FREQUENCY_0_1HZ, FREQUENCY_0_5HZ, FREQUENCY_1HZ, FREQUENCY_2HZ, FREQUENCY_5HZ, FREQUENCY_10HZ, FREQUENCY_20HZ, FREQUENCY_50HZ, FREQUENCY_100HZ, FREQUENCY_125HZ, FREQUENCY_200HZ */

⭐ Initialize the DS18B20 temperature sensor.

DS18B20.begin();

⭐ Attempt to connect to the given Wi-Fi network and wait for the successful network connection.

WiFi.mode(WIFI_STA);

WiFi.begin(ssid, pass);

// Attempt to connect to the given Wi-Fi network.

while(WiFi.status() != WL_CONNECTED){

// Wait for the network connection.

delay(500);

Serial.print(".");

}

// If connected to the network successfully:

Serial.println("Connected to the Wi-Fi network successfully!");

⭐ In the make_a_post_request function:

⭐ Connect to the web application named Aquatic_Ultrasonic_Imaging.

⭐ Create the query string by adding the given URL query (GET) parameters, including buffer data type, the selected class, and the detected label.

⭐ Define the boundary parameter named UltrasonicScan so as to send the generated ultrasonic scan data buffer (400 points) as a text (TXT) file to the web application.

⭐ Get the total content (data packet) length.

⭐ Make an HTTP POST request with the created query string to the web application in order to transfer the generated ultrasonic scan data buffer as a TXT file with the selected class or the label detected by the neural network model.

⭐ Wait until transferring the ultrasonic scan (text) buffer.

boolean make_a_post_request(String request){

// Connect to the web application named Aquatic_Ultrasonic_Imaging. Change '80' with '443' if you are using SSL connection.

if (client.connect(server, 80)){

// If successful:

Serial.println("\nConnected to the web application successfully!\n");

// Create the query string:

String query = application + request;

// Make an HTTP POST request:

String head = "--UltrasonicScan\r\nContent-Disposition: form-data; name=\"ultrasonic_scan\"; filename=\"new_scan.txt\"\r\nContent-Type: text/plain\r\n\r\n";

String tail = "\r\n--UltrasonicScan--\r\n";

// Build the scan data (400 comma-separated points, two decimals each) before the headers, so Content-Length matches the bytes actually sent.

String scan_data = "";

scan_data.reserve(scan_buffer_size*6);

for(int i=0; i<scan_buffer_size; i++){ scan_data += String(ultrasonic_scan[i]); scan_data += ","; }

// Get the total message length.

uint32_t totalLen = head.length() + scan_data.length() + tail.length();

// Start the request:

client.println("POST " + query + " HTTP/1.1");

client.println("Host: " + String(server));

client.println("Content-Length: " + String(totalLen));

client.println("Connection: Keep-Alive");

client.println("Content-Type: multipart/form-data; boundary=UltrasonicScan");

client.println();

client.print(head);

client.print(scan_data);

client.print(tail);

// Wait until transferring the ultrasonic scan (text) buffer (20x20).

delay(2000);

// If successful:

Serial.println("HTTP POST => Data transfer completed!\n");

return true;

}else{

Serial.println("\nConnection failed to the web application!\n");

delay(2000);

return false;

}

}

⭐ In the read_ultrasonic_sensor function:

⭐ Configure the external temperature value by utilizing the evaluated water temperature to generate precise distance measurements.

⭐ Obtain the temperature-compensated distance measurement produced by the URM15 ultrasonic sensor, except if the sensor is out of range.
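You can check the scaling in read_ultrasonic_sensor off-device with the Python sketch below (an illustration, not firmware code). It mirrors the original arithmetic: temperature ×10 before writing, raw distance ÷10 after reading, and 65535 treated as out of range. The ×10/÷10 factors suggest registers in 0.1-unit steps, but that unit is an inference, not something stated here. The sketch deliberately differs in two ways. First, it rejects temperatures that cannot fit an unsigned 16-bit register value (an assumption about the register type), such as the -127 °C the DallasTemperature library reports for a disconnected DS18B20. Second, it returns None rather than -1 for out-of-range distances, so the sentinel cannot be mistaken for a real reading once it is divided by 100. The function names are hypothetical.

```python
from typing import Optional

OUT_OF_RANGE_RAW = 65535  # raw distance the original code treats as "out of range"


def temperature_register_value(water_temp_c: float) -> int:
    """Scale the water temperature by 10, truncating like the C float-to-integer conversion."""
    value = int(water_temp_c * 10)
    # Assumption: the register holds an unsigned 16-bit value.
    if not 0 <= value <= 0xFFFF:
        raise ValueError(f"{water_temp_c} C cannot be written as an unsigned 16-bit value")
    return value


def distance_from_raw(raw: int) -> Optional[float]:
    """Apply the original raw / 10 scaling; None marks an out-of-range reading."""
    if raw == OUT_OF_RANGE_RAW:
        return None
    return raw / 10


print(temperature_register_value(24.5), distance_from_raw(1234), distance_from_raw(65535))  # 245 123.4 None
```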

void read_ultrasonic_sensor(float water_temp){

// Configure the external temperature value by utilizing the evaluated water temperature to generate precise distance measurements.

water_temp = water_temp*10;

modbus.writeHoldingRegister(/*id =*/SLAVE_ADDR, /*reg =*/eExternTempreture, /*val =*/water_temp);

delay(50);

// Obtain the temperature-compensated distance measurement produced by the URM15 ultrasonic sensor.

distance = modbus.readHoldingRegister(SLAVE_ADDR, eDistance);

delay(50);

// If the sensor is out of range, set the distance to -1.

if(distance == 65535){

distance = -1;

Serial.println("Ultrasonic sensor is out of range!");

}else{

distance = distance/10;

}

delay(50);

}

⭐ In the read_accelerometer function, obtain the X, Y, and Z-axis movement variables generated by the 6-axis accelerometer — acceleration, angular velocity, and angle.

void read_accelerometer(){

// Obtain the X, Y, and Z-axis measurements generated by the 6-axis accelerometer — acceleration, angular velocity, angle.

if(accelerometer.available()){

_acc.acc_x = accelerometer.Acc.X; _acc.acc_y = accelerometer.Acc.Y; _acc.acc_z = accelerometer.Acc.Z;

_acc.gyro_x = accelerometer.Gyro.X; _acc.gyro_y = accelerometer.Gyro.Y; _acc.gyro_z = accelerometer.Gyro.Z;

_acc.ang_x = accelerometer.Angle.X; _acc.ang_y = accelerometer.Angle.Y; _acc.ang_z = accelerometer.Angle.Z;

}

}

⭐ In the get_temperature function, obtain the water temperature in Celsius, estimated by the DS18B20 waterproof temperature sensor.

float get_temperature(){

// Obtain the temperature measurement in Celsius, estimated by the DS18B20 temperature sensor.

DS18B20.requestTemperatures();

float t = DS18B20.getTempCByIndex(0);

delay(50);

return t;

}

⭐ In the ultrasonic_imaging function:

⭐ Detect real-time device motions by reviewing the movement variables (X-axis and Y-axis) generated by the 6-axis accelerometer — acceleration and angular velocity.

⭐ If the device is gradually moving underwater within an arbitrary square, collect the temperature-compensated distance measurements produced by the URM15 ultrasonic sensor and save them as data points until completing the ultrasonic scan data buffer — 20 x 20 (400 points).
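The buffer logic above can be modelled in Python (as an illustration, not firmware code) to see how 400 gated readings become a 20 x 20 image. The motion gate (all four X/Y acceleration and angular-velocity values positive) and the distance / 100 scaling follow the original function. The class name ScanBuffer and the row-major reshape (point r*20 + c becomes row r, column c) are assumptions for illustration, since the code that turns the buffer into an image is not shown here.

```python
from typing import List

SIDE = 20
POINTS = SIDE * SIDE  # 400 data points per scan


class ScanBuffer:
    """Fill a 400-point scan only while the motion gate is open, then reshape to 20 x 20."""

    def __init__(self) -> None:
        self.points: List[float] = []

    def add(self, acc_x: float, gyro_x: float, acc_y: float, gyro_y: float, distance: float) -> bool:
        """Store distance / 100 if all four readings are positive; return True once complete."""
        moving = acc_x > 0 and gyro_x > 0 and acc_y > 0 and gyro_y > 0
        if moving and len(self.points) < POINTS:
            self.points.append(distance / 100)
        return len(self.points) == POINTS

    def as_image(self) -> List[List[float]]:
        """Row-major 20 x 20 grid; row r holds points r*20 .. r*20+19."""
        if len(self.points) != POINTS:
            raise ValueError("scan is not complete yet")
        return [self.points[r * SIDE:(r + 1) * SIDE] for r in range(SIDE)]


if __name__ == "__main__":
    scan = ScanBuffer()
    for _ in range(POINTS):
        scan.add(0.2, 0.1, 0.3, 0.1, 25.0)  # simulated readings with the gate open
    image = scan.as_image()
    print(len(image), len(image[0]))  # 20 20
```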

void ultrasonic_imaging(){

// Define underwater device movements by utilizing the axis measurements generated by the 6-axis accelerometer — acceleration and angular velocity.

if(_acc.acc_x > 0 && _acc.gyro_x > 0 && _acc.acc_y > 0 && _acc.gyro_y > 0){

// If the device is moving underwater inside an arbitrary square, collect the temperature-compensated distance measurements produced by the URM15 ultrasonic sensor

// and save them as data points to the scan data buffer — 20 x 20 (400 points).

if(scanned_points < 399){

scanned_points+=1;

ultrasonic_scan[scanned_points] = distance/100;

delay(50);

}else{

adjustColor(0,255,0);

Serial.println("Scan Completed!");

delay(50);

}

}

}

⭐ Change the highlighted menu option by operating the onboard control buttons — A and C.

⭐ Show the selected (highlighted) menu option with its assigned interface icon on the SSD1306 OLED display.

if(!digitalRead(control_button_A)){

menu_option-=1;

if(menu_option < 0) menu_option = 4;

delay(500);

}

if(!digitalRead(control_button_C)){

menu_option+=1;

if(menu_option > 4) menu_option = 0;

delay(500);

}

// Show the interface (home) screen.

show_interface("home", menu_option);

⭐ After selecting a menu option, if the control button B is pressed, navigate to the highlighted interface (menu) option.

⭐ If the first option (Show Readings) is activated:

⭐ Obtain the information produced by the ultrasonic sensor and the accelerometer.

⭐ Then, display the assigned interface logo and the retrieved sensor information on the SSD1306 screen for debugging.

⭐ If the control button D is pressed, redirect the user to the home screen.

if(!digitalRead(control_button_B) && menu_option == 1){

selected_interface[menu_option-1] = true;

adjustColor(255,255,0);

while(selected_interface[menu_option-1]){

// Read multiple sensor data packets.

read_ultrasonic_sensor(get_temperature());

read_accelerometer();

// Display the retrieved sensor information on the SSD1306 screen.

show_interface("sensor", menu_option);

// If the control button D is pressed, redirect the user to the home screen.

if(!digitalRead(control_button_D)){

selected_interface[menu_option-1] = false;

adjustColor(0,0,0);

}

}

}

⭐ If the second option (Ultrasonic+++) is activated:

⭐ Obtain the information produced by the ultrasonic sensor and the accelerometer.

⭐ Initiate the ultrasonic image scanning procedure and save data points until completing the scan buffer — 20 x 20 (400 points).

⭐ Display the ultrasonic scan progress (collected points) on the SSD1306 screen.

⭐ If the control button D is pressed, redirect the user to the home screen.

```cpp
if(!digitalRead(control_button_B) && menu_option == 2){
  selected_interface[menu_option-1] = true;
  adjustColor(0,255,255);
  // Reset the point counter so the scan buffer is refilled from index 0.
  scanned_points = -1;
  while(selected_interface[menu_option-1]){
    // Read multiple sensor data packets.
    read_ultrasonic_sensor(get_temperature());
    read_accelerometer();
    // Initiate the ultrasonic image scanning procedure.
    ultrasonic_imaging();
    // Display the ultrasonic scanning progress on the SSD1306 screen.
    show_interface("scan", menu_option);
    // If the control button D is pressed, redirect the user to the home screen.
    if(!digitalRead(control_button_D)){
      selected_interface[menu_option-1] = false;
      adjustColor(0,0,0);
    }
  }
}
```

⭐ If the third option (Save Samples) is activated:

⭐ Display the selectable labels (air bubble classes) with their associated buttons.

⭐ Via the onboard control buttons (A and C), assign an air bubble class (normal or bubble) to the produced ultrasonic scan data buffer.

⭐ Transfer the selected label, the data type (sample or detection), and the ultrasonic scan data buffer to the web application by making an HTTP POST request.

⭐ Depending on whether the data transmission succeeds, notify the user by showing the corresponding connection icon on the screen.

⭐ If the control button D is pressed, redirect the user to the home screen.

```cpp
if(!digitalRead(control_button_B) && menu_option == 3){
  selected_interface[menu_option-1] = true;
  adjustColor(255,0,255);
  while(selected_interface[menu_option-1]){
    // Display the selectable labels (air bubble classes) on the SSD1306 screen.
    show_interface("save", menu_option);
    // Depending on the air bubble class passed via the control buttons (A and C), transfer the collected ultrasonic scan data (buffer) to the web application via an HTTP POST request.
    if(!digitalRead(control_button_A)){
      if(make_a_post_request("?scan=OK&type=sample&class=normal")){
        // If successful:
        display.clearDisplay();
        display.drawBitmap((SCREEN_WIDTH-connected_width)/2, (SCREEN_HEIGHT-connected_height)/2, connected_bits, connected_width, connected_height, SSD1306_WHITE);
        display.display();
        adjustColor(0,255,0);
        delay(2000);
        adjustColor(255,0,255);
      }else{
        // If the transfer fails:
        display.clearDisplay();
        display.drawBitmap((SCREEN_WIDTH-error_width)/2, (SCREEN_HEIGHT-error_height)/2, error_bits, error_width, error_height, SSD1306_WHITE);
        display.display();
        adjustColor(255,0,0);
        delay(2000);
        adjustColor(255,0,255);
      }
    }
    if(!digitalRead(control_button_C)){
      if(make_a_post_request("?scan=OK&type=sample&class=bubble")){
        // If successful:
        display.clearDisplay();
        display.drawBitmap((SCREEN_WIDTH-connected_width)/2, (SCREEN_HEIGHT-connected_height)/2, connected_bits, connected_width, connected_height, SSD1306_WHITE);
        display.display();
        adjustColor(0,255,0);
        delay(2000);
        adjustColor(255,0,255);
      }else{
        // If the transfer fails:
        display.clearDisplay();
        display.drawBitmap((SCREEN_WIDTH-error_width)/2, (SCREEN_HEIGHT-error_height)/2, error_bits, error_width, error_height, SSD1306_WHITE);
        display.display();
        adjustColor(255,0,0);
        delay(2000);
        adjustColor(255,0,255);
      }
    }
    // If the control button D is pressed, redirect the user to the home screen.
    if(!digitalRead(control_button_D)){
      selected_interface[menu_option-1] = false;
      adjustColor(0,0,0);
    }
  }
}
```

Step 8.1: Generating aquatic ultrasonic scans manifesting air bubbles and saving samples via the web application

#️⃣ As explained earlier, I placed a lot of marimo moss balls at the bottom of the tank to increase the bottom surface area, provide underwater substrate gaps, and observe abundant noxious air bubbles while collecting ultrasonic scan data.

#️⃣ Thus, I managed to construct a valid data set for the neural network model.

🐠📡💧📊 If Nano ESP32 connects to the Wi-Fi network successfully, the device shows the home screen with the menu (interface) options on the SSD1306 screen.

- 1. Show Readings

- 2. Ultrasonic+++

- 3. Save Samples

- 4. Run Inference

🐠📡💧📊 The device lets the user change the highlighted menu option by pressing the control buttons — A (↓) and C (↑).

🐠📡💧📊 While the user adjusts the highlighted menu option, the device displays the associated interface icon on the screen.

🐠📡💧📊 After highlighting a menu option, if the control button B is pressed, the device navigates to the selected option.

🐠📡💧📊 After activating a menu option, the device returns to the home screen if the user presses the control button D.
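The navigation rules above (A and C move the highlight with wrap-around, B opens the highlighted option, D returns home) behave like a small state machine. The Python sketch below models them for illustration only: the `Menu` class and the button strings are hypothetical, and treating index 0 as "nothing highlighted" is my reading of the Arduino code, which wraps `menu_option` between 0 and 4.

```python
MENU_SIZE = 5  # indices 0..4, mirroring the wrap-around in the Arduino code


class Menu:
    """Hypothetical model of the home-screen navigation logic."""

    def __init__(self):
        self.option = 0      # highlighted option (0 assumed to mean "none")
        self.active = None   # option opened with button B, if any

    def press(self, button):
        if self.active is None:
            if button == "A":
                # A decrements the index, wrapping 0 -> 4.
                self.option = (self.option - 1) % MENU_SIZE
            elif button == "C":
                # C increments the index, wrapping 4 -> 0.
                self.option = (self.option + 1) % MENU_SIZE
            elif button == "B" and self.option > 0:
                # Open the highlighted option (1-4).
                self.active = self.option
        elif button == "D":
            # Inside an option, only D is used for navigation: back to home.
            self.active = None
        return self.option, self.active
```

While an option is active, the Arduino code stays inside that option's `while` loop, which is why the sketch ignores A and C for navigation until D is pressed.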

🐠📡💧📊 If the user activates the first menu option — Show Readings:

🐠📡💧📊 The device displays the information produced by the ultrasonic sensor and the accelerometer on the SSD1306 screen for debugging.

🐠📡💧📊 Then, the device turns the RGB LED (connected to Nano ESP32) to yellow.

🐠📡💧📊 If the user activates the second menu option — Ultrasonic+++:

🐠📡💧📊 The device turns the RGB LED to cyan.

🐠📡💧📊 The device detects real-time motion while the ultrasonic sensor is submerged by reviewing the movement variables produced by the 6-axis accelerometer (acceleration and angular velocity).

🐠📡💧📊 If the device is moving gradually underwater within an arbitrary square, Nano ESP32 collects the temperature-compensated distance measurements produced by the ultrasonic sensor and saves them as data points until the ultrasonic scan buffer is full: 20 x 20 (400 points).

🐠📡💧📊 After initiating the ultrasonic image scanning procedure, the device shows the scan progress (collected points) on the SSD1306 screen.

🐠📡💧📊 When Nano ESP32 finishes collecting the 400 data points of the scan buffer, the device notifies the user via the screen and turns the RGB LED to green.
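To make the buffer bookkeeping concrete, here is a minimal Python sketch of how the Arduino code fills the 400-point scan buffer and how the result could be viewed as a 20 x 20 grid. The helper names are hypothetical, the 1/100 scaling is copied from the Arduino code (the unit of `distance` is not stated there), and the row-major layout assumes points are collected row by row across the square.

```python
SCAN_SIDE = 20
SCAN_POINTS = SCAN_SIDE * SCAN_SIDE  # 400 data points


def add_point(buffer, scanned_points, distance):
    """Store one reading, mirroring the Arduino counter logic.

    scanned_points starts at -1 (buffer cleared) and is incremented before
    each write, so valid indices are 0..399. Returns the updated counter.
    """
    if scanned_points < SCAN_POINTS - 1:
        scanned_points += 1
        buffer[scanned_points] = distance / 100  # same scaling as the Arduino code
    return scanned_points


def to_grid(buffer):
    # Hypothetical row-major layout: point i goes to row i // 20, column i % 20.
    return [buffer[r * SCAN_SIDE:(r + 1) * SCAN_SIDE] for r in range(SCAN_SIDE)]
```

Once the counter reaches 399, further readings are ignored, which matches the Arduino `else` branch that only reports "Scan Completed!".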

🐠📡💧📊 If the user activates the third menu option — Save Samples:

🐠📡💧📊 The device turns the RGB LED to magenta and displays the selectable labels (air bubble classes) with their associated buttons.

- A) Class => normal

- C) Class => bubble

🐠📡💧📊 Via the onboard control buttons (A and C), the device lets the user assign an air bubble class (normal or bubble) to the generated ultrasonic scan data buffer empirically.

🐠📡💧📊 After pressing a control button (A or C), the device transfers the passed label and the generated ultrasonic scan data buffer to the web application via an HTTP POST request.

🐠📡💧📊 If Nano ESP32 transfers the given data packet successfully to the web application, the device notifies the user by showing the assigned connection icon on the screen and turning the RGB LED to green.
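The Save Samples request encodes the label and data type in the query string passed to `make_a_post_request()`. A minimal Python sketch that rebuilds those query strings with the standard `urllib.parse.urlencode` follows. How the buffer itself travels in the request is not shown in this section, so `serialize_buffer()` and its comma-separated format are hypothetical.

```python
from urllib.parse import urlencode

VALID_CLASSES = ("normal", "bubble")


def build_query(label, data_type="sample"):
    """Rebuild the query string used by the Arduino code, e.g. ?scan=OK&type=sample&class=normal."""
    if label not in VALID_CLASSES:
        raise ValueError("unknown air bubble class: " + label)
    # Dicts keep insertion order, so the parameters appear in this order.
    return "?" + urlencode({"scan": "OK", "type": data_type, "class": label})


def serialize_buffer(points):
    # Hypothetical body format: all readings as one comma-separated line.
    return ",".join(str(p) for p in points)
```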

🐠📡💧📊 After receiving the ultrasonic scan buffer, the web application saves the buffer as a text (TXT) file (data record) to the sample folder by adding the passed label and the collection date to the file name.

- sample_normal__2024_03_14_07_52_41.txt

- sample_bubble__2024_04_03_16_53_08.txt
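The web application's code is not shown in this section, so this Python sketch only reproduces the naming pattern visible in the two example file names: data type, label, a double underscore, then the collection date as YYYY_MM_DD_HH_MM_SS. The `save_sample()` helper and its comma-separated record format are hypothetical.

```python
from datetime import datetime
from pathlib import Path


def sample_filename(label, collected_at, data_type="sample"):
    # A double underscore separates the label from the timestamp.
    stamp = collected_at.strftime("%Y_%m_%d_%H_%M_%S")
    return "{}_{}__{}.txt".format(data_type, label, stamp)


def save_sample(folder, label, points, collected_at):
    """Hypothetical: write one scan buffer as a TXT data record."""
    path = Path(folder) / sample_filename(label, collected_at)
    path.write_text(",".join(str(p) for p in points))
    return path
```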

Step 9: Applying chemical water quality tests to analyze water contamination

Since not all underwater air bubble activity can be singled out as an imminent toxic pollution risk, I decided to give this air bubble detection device the ability to assess potential water pollution based on chemical water quality tests.

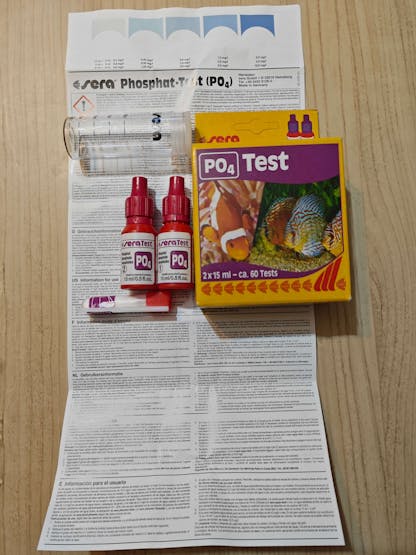

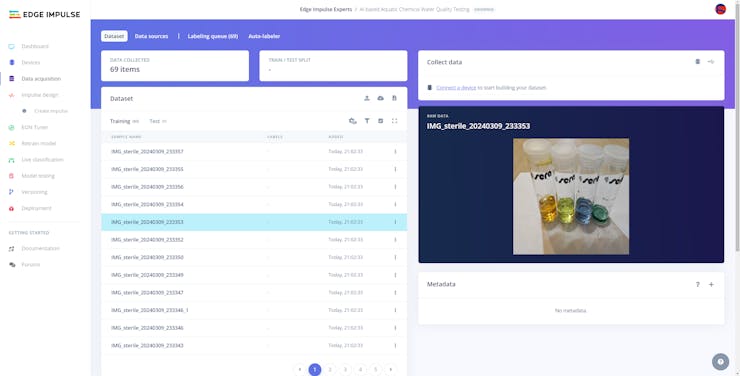

Even though there are various water quality tests for fish tanks, I decided to use the color-coded chemical tests produced by sera, the renowned full-range supplier for aquariums, ponds, and terrariums. Because the test results are color-coded, the object detection model can determine water pollution levels from the color differences of the applied water quality tests.

After researching the most common indicators of water pollution in a retail fish farm (in this case, my overpopulated medium-sized aquarium simulating harsh fish farm conditions), I decided to apply these four water quality tests regularly:

After following the provided instructions thoroughly for each chemical test and observing the water quality levels (color codes) from right after a water change to the peak of the underwater air bubble activity, I grouped water pollution levels into three categories:

- sterile

- dangerous

- polluted

Step 9.1: Capturing water quality test result images w/ the USB webcam

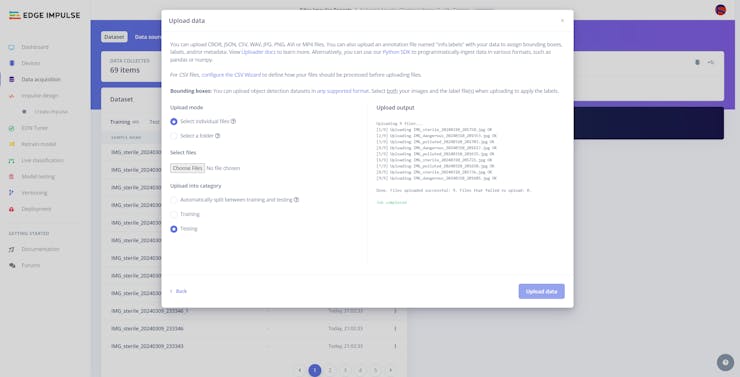

After setting up the necessary software on UNIHIKER via SSH and installing the required modules, I programmed UNIHIKER to capture the water quality test result images with the USB webcam and save them as samples.

To provide a feature-rich user interface for capturing water quality test result image samples, assigning labels, and accessing the interconnected features, I programmed an interactive user interface (a Tkinter-based GUI) with UNIHIKER's integrated Python modules. Because UNIHIKER provides an onboard touchscreen and two control buttons, I did not need to connect additional components to display the user interface. Via the micro:bit-compatible edge connector on the Squid PCB, I added a secondary RGB LED to inform the user of the device status while performing operations related to UNIHIKER.

As explained earlier, I managed to group water pollution levels into three categories. Thus, I added the corresponding pollution levels as labels to the file names of each sample while capturing images to create a valid data set for the object detection model.
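Embedding the label in the file name means the class can be recovered later without a separate annotation file. A minimal Python sketch, assuming the `IMG_<class>_<YYYYmmdd_HHMMSS>.jpg` pattern produced by the save_img_sample function in _class.py; `parse_sample_name()` and the `CLASS_NAMES` tuple are hypothetical (the actual order of `self.class_names` is not shown here).

```python
import re
from datetime import datetime

CLASS_NAMES = ("sterile", "dangerous", "polluted")  # hypothetical order
PATTERN = re.compile(r"^IMG_(?P<label>[a-z]+)_(?P<stamp>\d{8}_\d{6})\.jpg$")


def parse_sample_name(filename):
    """Return (label, capture time) for a sample file name, or None if it does not match."""
    match = PATTERN.match(filename)
    if match is None or match.group("label") not in CLASS_NAMES:
        return None
    taken = datetime.strptime(match.group("stamp"), "%Y%m%d_%H%M%S")
    return match.group("label"), taken
```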

This AI-powered underwater air bubble detection device, which assesses water pollution based on chemical tests, consists of two separate development boards (UNIHIKER and Nano ESP32) that perform interconnected tasks for data collection and for running advanced AI models. Thus, the code snippets in each step are excerpts from the same code files. Please refer to the code files below to inspect all interconnected functions in detail.

📁 _class.py

To bundle all functions under a specific structure, I created a class named aquarium_func. In the following steps, I will clarify the remaining functions of this class. Please refer to the _class.py file to inspect all interconnected functions.

⭐ In the display_camera_feed function:

⭐ Obtain the real-time video stream (frames) generated by the high-quality USB webcam.

⭐ Resize the latest captured camera frame to the image sample size expected by the Edge Impulse object detection model.

⭐ Then, resize the same frame to display a snapshot of the latest captured camera frame on the onboard touchscreen.

⭐ Stop the real-time camera feed if requested.

```python
import cv2


def display_camera_feed(self):
    # Read the latest frame of the real-time video stream generated by the USB webcam.
    ret, img = self.camera.read()
    # Skip this cycle if no frame was returned (cv2.resize would fail on None).
    if not ret:
        return
    # Resize the captured frame depending on the given object detection model.
    self.latest_frame_m = cv2.resize(img, self.frame_size_m)
    # Resize the same frame to display it on the UNIHIKER screen (snapshot).
    self.latest_frame_s = cv2.resize(img, self.frame_size_s)
    # Stop the camera feed if requested.
    if cv2.waitKey(1) & 0xFF == ord('q'):
        self.camera.release()
        cv2.destroyAllWindows()
        print("\nCamera Feed Stopped!")
```

⭐ In the take_snapshot function:

⭐ Save the latest snapshot frame to a temporary image file (snapshot.jpg), since UNIHIKER's built-in Python module for the Tkinter-based GUI does not accept images as NumPy arrays.

⭐ Then, show the snapshot image saved in the assets folder on the onboard touchscreen in order to notify the user of the latest captured camera frame.

⭐ Finally, store the same frame, resized to the model's frame size, as the latest sample for further use.

```python
import cv2


def take_snapshot(self, filename="assets/snapshot.jpg"):
    # Save the latest camera frame (snapshot) as a temporary image file,
    # since the GUI module cannot display NumPy arrays directly.
    cv2.imwrite("./" + filename, self.latest_frame_s)
    # Show the saved snapshot on UNIHIKER to inform the user.
    self.cam_snapshot_img.config(image=filename)
    # Store the latest modified image sample in memory.
    self.modified_image = self.latest_frame_m
```

⭐ In the save_img_sample function:

⭐ If the user selects a pollution class via the built-in control button B (on UNIHIKER), create the file name of the image sample by adding the selected class and the collection date.

⭐ Then, save the latest stored frame to the samples folder via the built-in OpenCV functions and notify the user via the user interface (GUI).

```python
import datetime

import cv2


def save_img_sample(self, given_class):
    if given_class > -1:
        # Create the file name for the image sample (class name + collection date).
        date = datetime.datetime.now().strftime("%Y%m%d_%H%M%S")
        filename = "IMG_{}_{}.jpg".format(self.class_names[given_class], date)
        # Save the modified image sample.
        cv2.imwrite("./samples/" + filename, self.modified_image)
        print("\nSample Saved Successfully: ./samples/" + filename)
        # Notify the user.
        self.cam_info_text.config(text="Saved: " + filename)
    else:
        self.cam_info_text.config(text="Please select a class.")
```

#️⃣ Since UNIHIKER provides a built-in Python module tailored for displaying a Tkinter-based GUI on its onboard touchscreen (240 x 320), I was able to program the interactive user interface effortlessly.

#️⃣ Although the built-in module supports limited Tkinter features, I managed to create a multi-window user interface by shifting groups of GUI elements on and off-screen.

#️⃣ The interactive user interface (GUI) consists of three separate windows (layers):

- Home

- Aquatic Ultrasonic Scan

- Water Quality Test
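Shifting element groups on and off-screen can be modeled in plain Python: each element remembers its on-screen coordinates, the active layer's elements are moved back to them, and every other layer is pushed past the edge of the 240 x 320 screen. This is a hypothetical sketch; `Element`, `LayeredUI`, and the 1000-pixel offset are illustrative, and on UNIHIKER the actual move would go through the element objects returned by the unihiker GUI module, which are not modeled here.

```python
OFFSCREEN_SHIFT = 1000  # hypothetical offset, larger than the 240 x 320 screen


class Element:
    """Stand-in for a GUI element; only its position matters here."""

    def __init__(self, name, x, y):
        self.name = name
        self.home = (x, y)  # on-screen coordinates recorded at creation
        self.x, self.y = x, y


class LayeredUI:
    def __init__(self, layers):
        # layers: {layer name: [Element, ...]}
        self.layers = layers
        self.current = None

    def show(self, name):
        for layer, elements in self.layers.items():
            for e in elements:
                x, y = e.home
                # Active layer: restore home position; others: push off-screen.
                e.x = x if layer == name else x + OFFSCREEN_SHIFT
                e.y = y
        self.current = name
```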

⭐ In the create_user_interface function:

⭐ Design the feature-rich user interface via the provided unihiker module.

⭐ Group the generated GUI elements and their screen coordinates into separate arrays for each interface section (layer) so as to navigate windows effortlessly.

⭐ To add callback functions to the GUI elements, utilize the onclick parameter (triggered when the element is clicked) and the lambda expression.
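The lambda matters because `onclick` expects a callable. Writing `onclick=self.save_img_sample(self.selected_class)` would call the function once while the GUI is being built and pass its return value (`None`) as the callback. Wrapping the call in a lambda defers it, so `self.selected_class` is read at click time. A self-contained Python sketch with hypothetical `Button` and `App` stand-ins for the unihiker GUI objects:

```python
class Button:
    """Hypothetical stand-in for a clickable GUI element with an onclick callback."""

    def __init__(self, onclick):
        self.onclick = onclick

    def click(self):
        return self.onclick()


class App:
    def __init__(self):
        self.selected_class = -1
        self.saved = []
        # The lambda reads self.selected_class when clicked, not when created.
        self.save_button = Button(onclick=lambda: self.save_img_sample(self.selected_class))

    def save_img_sample(self, given_class):
        if given_class > -1:
            self.saved.append(given_class)
            return "saved"
        return "Please select a class."
```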

def create_user_interface(self, _x=120, _y=10, offset=15, origin="top_left"):

# Design the user interface (GUI) via the built-in unihiker module.

# Camera interface for AI-based chemical water quality test.

self.cam_backg = self.interface.fill_rect(x=0, y=0, w=240, h=320, color="#9BB5CE")

self.cam_snapshot_img = self.interface.draw_image(x=60, y=5, image="assets/cam_wait.jpg", origin=origin, onclick=lambda:self.interface_config("clear_class"))

self.cam_section = self.interface.fill_round_rect(x=5, y=130, r=10, w=230, h=185, color="#215E7C")

self.cam_run_button = self.interface.fill_round_rect(x=45, y=250, r=5, w=150, h=45, color="#FAE0D8", onclick=self.run_inference)

self.cam_run_text = self.interface.draw_text(x=120, y=272, text="Run Inference", origin="center", color="#5C5B57", font_size=12, onclick=self.run_inference)

self.cam_save_button = self.interface.fill_round_rect(x=45, y=195, r=5, w=150, h=45, color="#FAE0D8", onclick=lambda:self.save_img_sample(self.selected_class))

self.cam_save_text = self.interface.draw_text(x=120, y=217, text="Capture Sample", origin="center", color="#5C5B57", font_size=12, onclick=lambda:self.save_img_sample(self.selected_class))

self.cam_snap_button = self.interface.fill_round_rect(x=45, y=140, r=5, w=150, h=45, color="#FAE0D8", onclick=self.take_snapshot)

self.cam_snap_text = self.interface.draw_text(x=120, y=162, text="Snapshot", origin="center", color="#5C5B57", font_size=12, onclick=self.take_snapshot)

self.cam_info_text = self.interface.draw_text(x=120, y=305, text="Pending...", origin="center", color="white", font_size=8)

# Elements and coordinates — Camera.